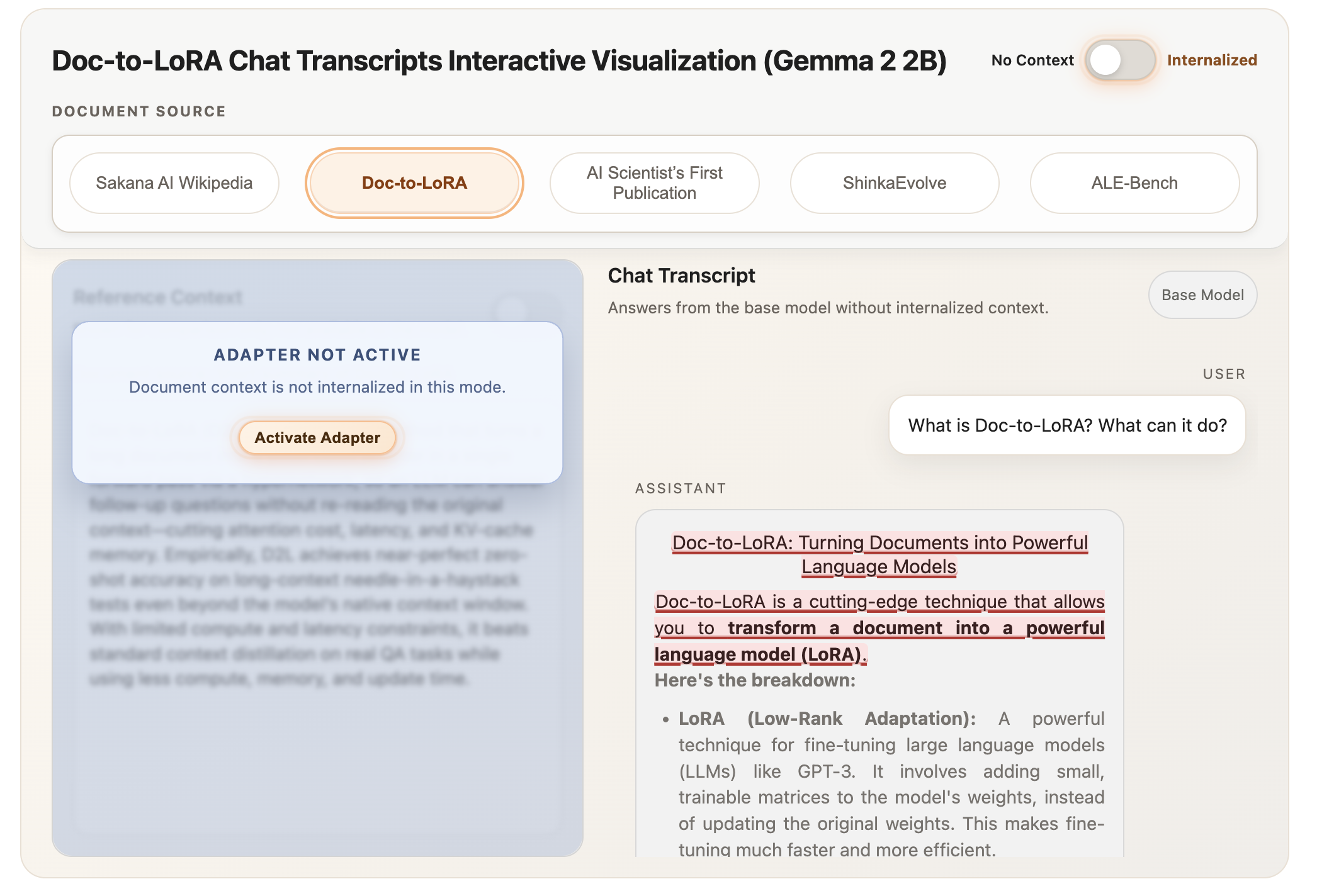

Sakana AI Launches Doc-to-LoRA and Text-to-LoRA: Hypernetworks That Quickly Internalize Long Contexts and Adapt LLMs via Zero-Shot Natural Language

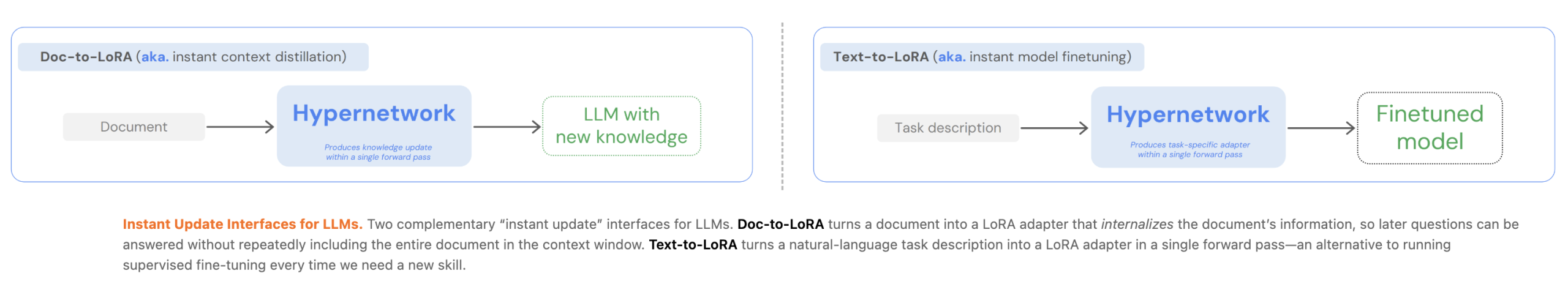

Customizing Large Language Models (LLMs) currently involves an engineering trade-off between the flexibility of In-Context Learning (ICL) and the efficiency of Context Distillation (CD) or Supervised Fine-Tuning (SFT). Tokyo-based Sakana AI has proposed a way around these constraints by amortizing their cost. In two recent papers, they present Text-to-LoRA (T2L) and Doc-to-LoRA (D2L), lightweight hypernetworks that learn to generate Low-Rank Adaptation (LoRA) matrices in a single forward pass.

The Engineering Bottleneck: Latency vs. Memory

For AI developers, the main limitation of standard LLM specialization is computational overhead:

- In-Context Learning (ICL): Although simple, ICL suffers from quadratic attention costs and linear KV-cache growth, which increase latency and memory usage as the prompt grows longer.

- Context Distillation (CD): CD transfers information into the model's parameters, but distilling each new context quickly is often impractical because of high training costs and update latency.

- SFT: It requires task-specific datasets and expensive retraining whenever the information changes.
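
To make the ICL scaling argument concrete, here is a small sketch that counts the attention scores and the cached key/value entries for one layer as the prompt grows. It assumes full (dense) self-attention and a hypothetical `d_model`; constant factors are ignored and only the growth rates matter.

```python
# Illustrative ICL cost scaling for a single attention layer.
# Assumptions: dense self-attention, hypothetical d_model; constants ignored.

def attention_score_entries(n_tokens: int) -> int:
    """Pairwise query-key scores computed by full self-attention: n * n."""
    return n_tokens * n_tokens

def kv_cache_entries(n_tokens: int, d_model: int = 2048) -> int:
    """Key and value vectors cached for this layer: 2 * n * d_model."""
    return 2 * n_tokens * d_model

for n in (4_096, 8_192, 16_384):
    print(f"n={n:>6}: scores={attention_score_entries(n):>12,}  kv={kv_cache_entries(n):>12,}")
```

Each doubling of the prompt quadruples the attention work but only doubles the KV cache, which is why both latency and memory degrade as documents grow.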

Sakana AI's methods amortize these costs by paying a one-time meta-training cost. Once trained, the hypernetwork can adapt the base LLM to new tasks or documents in a single forward pass, without further gradient-based training.
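
The amortization argument reduces to break-even arithmetic: meta-training is paid once, after which each new task or document costs one hypernetwork forward pass instead of a distillation or fine-tuning run. The sketch below assumes constant per-update costs, and every number in it is a hypothetical placeholder rather than a figure from the papers.

```python
# Break-even point for amortizing a one-time meta-training cost.
# All numbers are hypothetical placeholders, not results from the papers.

def break_even_updates(meta_train_cost: float, per_update_baseline: float,
                       per_update_hypernet: float) -> float:
    """Number of updates after which meta-training pays for itself.

    Solves: meta_train_cost + N * per_update_hypernet == N * per_update_baseline.
    """
    saving = per_update_baseline - per_update_hypernet
    if saving <= 0:
        raise ValueError("the hypernetwork must be cheaper per update to ever break even")
    return meta_train_cost / saving

# Hypothetical: 36,000 s of meta-training, 60 s per distillation run,
# 0.5 s per hypernetwork forward pass.
n = break_even_updates(36_000.0, 60.0, 0.5)
print(f"break-even after ~{n:.0f} documents")
```

With these placeholder costs the script prints `break-even after ~605 documents`; beyond that point every additional update is almost pure saving.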

Text-to-LoRA (T2L): Adaptation via Natural Language

Text-to-LoRA (T2L) is a hypernetwork designed to adapt LLMs on the fly using only a natural language description of the task.

Architecture and Training

T2L uses a task encoder to extract a vector representation from the text description. This representation, combined with learnable module and layer embeddings, is processed through a series of MLP blocks to generate the A and B low-rank matrices for the target LLM.
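
A toy NumPy sketch of this generation step, under heavy assumptions: the task encoder's output is replaced by a random vector, the hypernetwork is a hypothetical two-layer ReLU MLP, and all sizes and module names (`q_proj`, `v_proj`) are illustrative rather than taken from T2L. Stacking one input row per (layer, module) pair lets a single batched pass emit every A and B matrix.

```python
import numpy as np

# Toy T2L-style hypernetwork. Sizes, module names, and the 2-layer MLP are
# hypothetical; the real architecture and dimensions may differ.
rng = np.random.default_rng(0)

D_TASK, D_EMB, D_HID = 64, 16, 128   # task-embedding, module/layer-embedding, MLP widths
D_IN, D_OUT, RANK = 32, 32, 4        # shape of one target weight matrix and LoRA rank
N_LAYERS, MODULES = 4, ("q_proj", "v_proj")

# Learnable embeddings identifying each target layer and module type.
layer_emb = rng.normal(size=(N_LAYERS, D_EMB))
module_emb = {m: rng.normal(size=D_EMB) for m in MODULES}

# Shared MLP: [task; module; layer] -> flattened A and B.
W1 = rng.normal(scale=0.05, size=(D_TASK + 2 * D_EMB, D_HID))
W2 = rng.normal(scale=0.05, size=(D_HID, RANK * D_IN + D_OUT * RANK))

def generate_lora(task_vec: np.ndarray) -> dict:
    """Map one task embedding to LoRA (A, B) pairs for every (layer, module)."""
    keys = [(l, m) for l in range(N_LAYERS) for m in MODULES]
    X = np.stack([np.concatenate([task_vec, module_emb[m], layer_emb[l]]) for l, m in keys])
    H = np.maximum(X @ W1, 0.0)                       # ReLU hidden layer, one row per target
    flat = H @ W2                                     # (num_targets, RANK*D_IN + D_OUT*RANK)
    A_all = flat[:, : RANK * D_IN].reshape(-1, RANK, D_IN)
    B_all = flat[:, RANK * D_IN :].reshape(-1, D_OUT, RANK)
    return {k: (A_all[i], B_all[i]) for i, k in enumerate(keys)}

# Stand-in for the task encoder's embedding of a description such as
# "Solve grade-school math word problems step by step."
task_vec = rng.normal(size=D_TASK)
adapters = generate_lora(task_vec)
A, B = adapters[(0, "q_proj")]
print(len(adapters), A.shape, B.shape)   # 8 (4, 32) (32, 4)
```

Because the module and layer embeddings are part of the input, one shared MLP can specialize its output per target matrix instead of needing a separate head for each.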

The system can be trained using two main schemes:

- LoRA Reconstruction: Distilling existing, pre-trained LoRA adapters into the hypernetwork.

- Supervised Fine-Tuning (SFT): Training the hypernetwork end-to-end on multi-task datasets.
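
The two schemes differ mainly in their loss. The sketch below shows one plausible form of each, assuming mean squared error for reconstruction (the papers' exact loss may differ) and a toy linear classifier standing in for the target LLM in the SFT case. In real training, the gradients of these losses would flow back into the hypernetwork's parameters.

```python
import numpy as np

def reconstruction_loss(pred, target):
    """LoRA reconstruction (assumed MSE): compare generated and reference (A, B)."""
    (A_hat, B_hat), (A_ref, B_ref) = pred, target
    return float(np.mean((A_hat - A_ref) ** 2) + np.mean((B_hat - B_ref) ** 2))

def sft_loss(W, A, B, x, labels, scale=1.0):
    """SFT: cross-entropy of a toy linear model whose weight is W + scale * B @ A."""
    logits = x @ (W + scale * B @ A).T                 # (batch, classes)
    logits -= logits.max(axis=1, keepdims=True)        # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())

rng = np.random.default_rng(0)
A_ref, B_ref = rng.normal(size=(2, 5)), rng.normal(size=(3, 2))
print(reconstruction_loss((A_ref, B_ref), (A_ref, B_ref)))   # 0.0

W = rng.normal(size=(3, 5))                     # frozen toy base weight
x, labels = rng.normal(size=(4, 5)), np.array([0, 2, 1, 0])
print(sft_loss(W, A_ref, B_ref, x, labels))
```

One design consequence: LoRA factors are not unique (B A equals (B M)(M⁻¹ A) for any invertible M), so matching factors directly is a stricter target than matching the product, whereas the SFT loss only cares about downstream behavior. That is consistent with SFT-trained T2L generalizing better.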

The research shows that SFT-trained T2L generalizes better to unseen tasks because it implicitly learns to cluster related tasks in weight space. In benchmarks, T2L matched or outperformed task-specific adapters on tasks such as GSM8K and ARC-Challenge, while reducing adaptation costs by more than 4x compared to 3-shot ICL.

Doc-to-LoRA (D2L): Internalizing Context

Doc-to-LoRA (D2L) extends this concept to document internalization. It allows an LLM to answer subsequent queries about a document without re-reading the original context, effectively removing the document from the active context window.
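
Once an adapter has been generated, answering a query only requires the standard LoRA update W + αBA on the frozen base weights; the document tokens never enter the input. A minimal NumPy sketch, assuming a single linear layer and a hypothetical scaling factor `alpha`, showing that applying the adapter on the fly and merging it into W give the same output:

```python
import numpy as np

rng = np.random.default_rng(1)
d_in, d_out, r = 16, 16, 4
W = rng.normal(size=(d_out, d_in))          # frozen base weight
A = rng.normal(size=(r, d_in)) * 0.1        # stands in for a generated adapter (hypothetical)
B = rng.normal(size=(d_out, r)) * 0.1
alpha = 1.0                                 # hypothetical LoRA scaling factor

def forward(x, W, A=None, B=None, scale=alpha):
    """Linear layer with an optional low-rank update: x W^T + scale * x A^T B^T."""
    y = x @ W.T
    if A is not None:
        y = y + scale * (x @ A.T) @ B.T
    return y

query = rng.normal(size=(3, d_in))          # query tokens only; no document in the input
y_adapter = forward(query, W, A, B)         # adapter applied on the fly
y_merged = forward(query, W + alpha * B @ A)  # adapter merged into the weight
print(np.allclose(y_adapter, y_merged))     # True
```

Keeping the adapter unmerged makes it cheap to swap documents; merging removes the extra matmuls at inference time.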

Perceiver-Based Design

D2L uses a Perceiver-style cross-attention architecture. It maps variable-length token activations (Z) from the base LLM to a fixed-shape LoRA adapter.
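
The key property of a Perceiver-style encoder is that a fixed set of learned latent queries cross-attends over however many input tokens there are, so the output shape never depends on document length. The single-layer NumPy sketch below illustrates only that property; the sizes, the linear head that emits A and B, and all weight names are hypothetical simplifications, not the actual D2L architecture.

```python
import numpy as np

rng = np.random.default_rng(0)
D_MODEL, N_LATENTS, D_LATENT = 64, 8, 32      # hypothetical sizes
D_IN, D_OUT, RANK = 32, 32, 4                 # hypothetical target LoRA shape

latents = rng.normal(size=(N_LATENTS, D_LATENT))                 # learned queries
W_k = rng.normal(scale=D_MODEL ** -0.5, size=(D_MODEL, D_LATENT))
W_v = rng.normal(scale=D_MODEL ** -0.5, size=(D_MODEL, D_LATENT))
W_head = rng.normal(scale=0.05, size=(N_LATENTS * D_LATENT, RANK * D_IN + D_OUT * RANK))

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def perceiver_to_lora(Z):
    """Cross-attend fixed latents over activations Z of shape (n_tokens, D_MODEL)."""
    K, V = Z @ W_k, Z @ W_v                             # (n_tokens, D_LATENT)
    attn = softmax(latents @ K.T / np.sqrt(D_LATENT))   # (N_LATENTS, n_tokens)
    pooled = attn @ V                                   # (N_LATENTS, D_LATENT), fixed shape
    flat = pooled.reshape(-1) @ W_head
    A = flat[: RANK * D_IN].reshape(RANK, D_IN)
    B = flat[RANK * D_IN :].reshape(D_OUT, RANK)
    return A, B

for n_tokens in (10, 500):
    A, B = perceiver_to_lora(rng.normal(size=(n_tokens, D_MODEL)))
    print(n_tokens, A.shape, B.shape)   # same adapter shape for both lengths
```

Only the attention matrix depends on the number of tokens, and it is contracted away by `attn @ V`, which is what lets a fixed-size output head work for any input length.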

To handle documents that exceed the training length, D2L uses a chunking mechanism. Long contexts are divided into K contiguous chunks, each processed independently to generate a per-chunk adapter. These adapters are then concatenated along the rank dimension, allowing D2L to generate higher-rank LoRAs for long inputs without changing the hypernetwork's output shape.
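
Concatenating per-chunk adapters along the rank dimension is equivalent to summing their weight updates, since B_cat A_cat = Σ_k B_k A_k. A minimal sketch, assuming equal-length contiguous chunks (the `chunk_len` parameter is hypothetical) and ignoring any per-chunk scaling D2L may apply:

```python
import numpy as np

def split_into_chunks(tokens, chunk_len):
    """Split a token sequence into contiguous chunks of at most chunk_len tokens."""
    return [tokens[i : i + chunk_len] for i in range(0, len(tokens), chunk_len)]

def concat_chunk_adapters(adapters):
    """Stack per-chunk LoRA factors along the rank dimension.

    adapters: list of (A_k, B_k), A_k of shape (r, d_in), B_k of shape (d_out, r).
    Returns (A_cat, B_cat) of rank K * r with B_cat @ A_cat == sum_k B_k @ A_k.
    """
    A_cat = np.concatenate([A for A, _ in adapters], axis=0)   # (K*r, d_in)
    B_cat = np.concatenate([B for _, B in adapters], axis=1)   # (d_out, K*r)
    return A_cat, B_cat

rng = np.random.default_rng(0)
K, r, d_in, d_out = 3, 4, 16, 16
# Random stand-ins for the adapters the hypernetwork would emit for K chunks.
chunk_adapters = [(rng.normal(size=(r, d_in)), rng.normal(size=(d_out, r))) for _ in range(K)]
A_cat, B_cat = concat_chunk_adapters(chunk_adapters)
print(A_cat.shape, B_cat.shape)    # (12, 16) (16, 12)
print(np.allclose(B_cat @ A_cat, sum(B @ A for A, B in chunk_adapters)))   # True
```

The hypernetwork itself always emits rank-r factors; only the final stacking step grows the rank, so no retraining is needed for longer documents.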

Performance and Memory Efficiency

In a Needle-in-a-Haystack (NIAH) retrieval task, D2L maintained near-perfect zero-shot accuracy at context lengths exceeding the base model's native window by more than 4x.

- Memory Footprint: For a 128K-token document, the base model needs more than 12 GB of VRAM for the KV cache. The D2L-internalized model holds the same document in less than 50 MB.

- Update Latency: D2L internalizes information in the sub-second regime (<1 s), whereas traditional CD can take between 40 and 100 seconds.
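
The gap between the two footprints follows from simple arithmetic: the KV cache grows linearly with the number of tokens, while an adapter's size depends only on the model's dimensions and the LoRA rank. The sketch below uses a hypothetical 28-layer model with grouped-query attention and fp16 storage; it is not the papers' base model, so the numbers only illustrate orders of magnitude.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_tokens, bytes_per_elem=2):
    """Keys + values cached for every layer, KV head, and token (fp16 = 2 bytes)."""
    return 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_elem

def lora_bytes(n_layers, modules_per_layer, d_in, d_out, rank, bytes_per_elem=2):
    """One A (rank x d_in) and one B (d_out x rank) per adapted module."""
    return n_layers * modules_per_layer * rank * (d_in + d_out) * bytes_per_elem

# Hypothetical configuration, chosen only for illustration.
kv = kv_cache_bytes(n_layers=28, n_kv_heads=8, head_dim=128, n_tokens=128 * 1024)
lora = lora_bytes(n_layers=28, modules_per_layer=4, d_in=2048, d_out=2048, rank=16)
print(f"KV cache: {kv / 1e9:.1f} GB, LoRA adapter: {lora / 1e6:.1f} MB")
```

For this configuration the script prints `KV cache: 15.0 GB, LoRA adapter: 14.7 MB`. With D2L's chunking, the adapter rank scales with the number of chunks K rather than with individual tokens.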

Cross-Modal Transfer

A key finding of the D2L research is the ability to internalize visual information zero-shot. By using a Vision-Language Model (VLM) as the context encoder, D2L maps visual activations into the parameters of a text-only LLM. This allowed the text model to classify images from the Imagenette dataset with 75.03% accuracy, despite never having seen image data during its main training.

Key Takeaways

- Amortized Customization via Hypernetworks: Both methods use lightweight hypernetworks to learn the adaptation process, paying a one-time meta-training cost to enable fast, sub-second generation of LoRA adapters for new tasks or documents.

- Significant Memory and Latency Reduction: Doc-to-LoRA internalizes context into parameters, reducing KV-cache memory usage from more than 12 GB to less than 50 MB for a 128K-token document and cutting update latency from the 40 to 100 seconds of context distillation to less than a second.

- Long-Context Generalization: Using a Perceiver-based architecture and a chunking mechanism, Doc-to-LoRA can internalize information at sequence lengths more than 4x the base LLM's native context window with near-perfect accuracy.

- Zero-Shot Task Adaptation: Text-to-LoRA can generate specialized LoRA adapters for entirely unseen tasks from a natural language description alone, matching or exceeding the performance of task-specific ‘oracle’ adapters.

- Cross-Modal Information Transfer: The Doc-to-LoRA architecture enables zero-shot integration of visual information from a Vision-Language Model (VLM) into a text-only LLM, allowing the latter to classify Imagenette images with 75.03% accuracy without seeing pixel data during its main training.

Check out the Doc-to-LoRA paper and code, and the Text-to-LoRA paper and code.