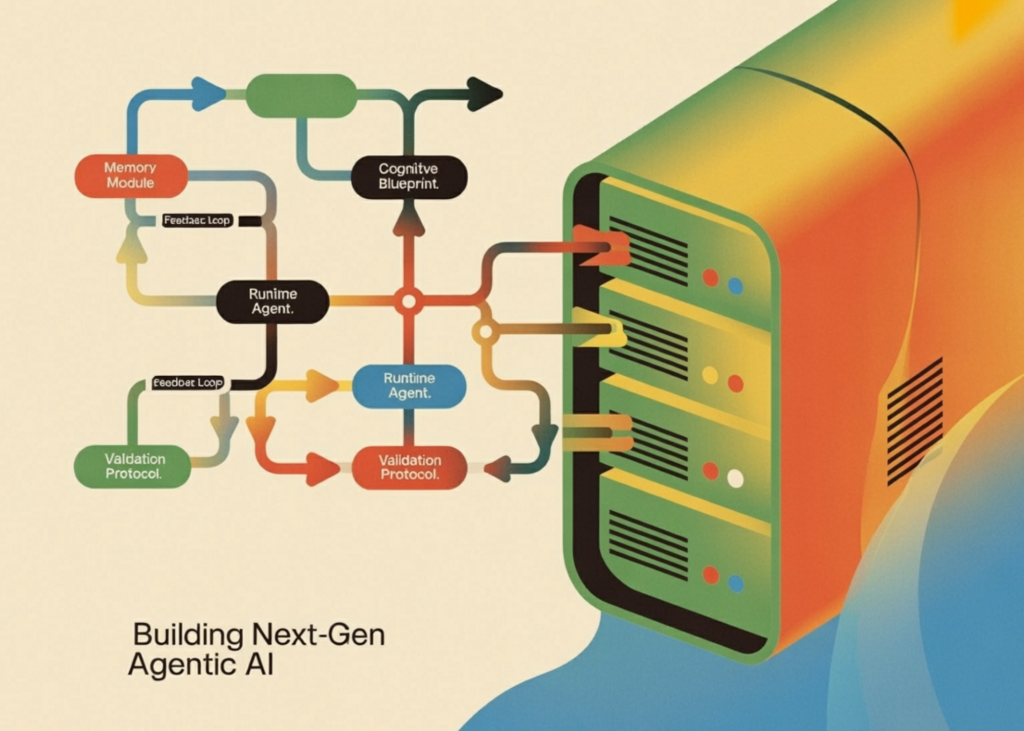

Building Next-Gen Agentic AI: A Complete Framework for Cognitive Blueprint-Driven Runtime Agents with Memory and Validation Tools

In this tutorial, we build a complete cognitive blueprint and runtime framework for agents. We define structured blueprints covering identity, goals, planning, memory, validation, and tool access. We then use them to create agents that do not merely respond but also plan, execute, verify, and iteratively improve their results. Throughout the tutorial, we show how the same runtime engine can host multiple agents with different behaviors simply by swapping blueprints. This keeps the overall design modular, extensible, and useful for experimenting with advanced agentic AI.
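Before the full implementation, here is the control loop the runtime engine follows, reduced to plain functions so it runs without an API key. This is a minimal sketch, not the framework's code. `run_with_retries` and the `toy_*` stubs are hypothetical names, and the real engine also routes everything through memory and LLM calls. The loop runs plan → execute → validate, and any validation issues are fed back into the next planning round. It stops when the answer passes or the retry budget runs out.

```python
from typing import Callable, List, Tuple


def run_with_retries(task: str,
                     plan: Callable[[str, List[str]], List[str]],
                     execute: Callable[[List[str]], str],
                     validate: Callable[[str], List[str]],
                     max_retries: int = 2) -> Tuple[str, int]:
    """Plan -> execute -> validate, re-planning with feedback until validation passes."""
    feedback: List[str] = []
    answer, retries = "", 0
    for attempt in range(max_retries + 1):
        steps = plan(task, feedback)      # planning sees the issues found so far
        answer = execute(steps)
        issues = validate(answer)
        if not issues:
            break
        if attempt < max_retries:         # only count retries that will actually run
            retries += 1
            feedback.extend(issues)
    return answer, retries


# Hypothetical stubs standing in for the LLM-backed planner, executor, and validator.
def toy_plan(task: str, feedback: List[str]) -> List[str]:
    # Adds an explanation step once the validator has complained.
    return ["compute"] + (["explain"] if feedback else [])


def toy_execute(steps: List[str]) -> str:
    return "375.0" + (" because 15% of 2500 = 0.15 * 2500" if "explain" in steps else "")


def toy_validate(answer: str) -> List[str]:
    return [] if "because" in answer else ["Response lacks visible reasoning"]


answer, retries = run_with_retries("15% of 2500", toy_plan, toy_execute, toy_validate)
print(answer, retries)
```

In this run, the first answer fails the reasoning check and the issue is fed back. The second plan then adds an explanation step, so the loop ends after one retry.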

```python
import json, yaml, time, math, textwrap, datetime, getpass, os
from typing import Any, Callable, Dict, List, Optional
from dataclasses import dataclass, field
from enum import Enum

from openai import OpenAI
from pydantic import BaseModel
from rich.console import Console
from rich.panel import Panel
from rich.table import Table
from rich.tree import Tree

# Read the API key from Colab secrets when available, otherwise prompt for it.
try:
    from google.colab import userdata
    OPENAI_API_KEY = userdata.get('OPENAI_API_KEY')
except Exception:
    OPENAI_API_KEY = getpass.getpass("🔑 Enter your OpenAI API key: ")

os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY
client = OpenAI(api_key=OPENAI_API_KEY)
console = Console()


class PlanningStrategy(str, Enum):
    SEQUENTIAL = "sequential"
    HIERARCHICAL = "hierarchical"
    REACTIVE = "reactive"


class MemoryType(str, Enum):
    SHORT_TERM = "short_term"
    EPISODIC = "episodic"
    PERSISTENT = "persistent"


class BlueprintIdentity(BaseModel):
    name: str
    version: str = "1.0.0"
    description: str
    author: str = "unknown"


class BlueprintMemory(BaseModel):
    type: MemoryType = MemoryType.SHORT_TERM
    window_size: int = 10        # most recent messages kept verbatim
    summarize_after: int = 20    # episodic memory compresses beyond this many messages


class BlueprintPlanning(BaseModel):
    strategy: PlanningStrategy = PlanningStrategy.SEQUENTIAL
    max_steps: int = 8
    max_retries: int = 2
    think_before_acting: bool = True


class BlueprintValidation(BaseModel):
    require_reasoning: bool = True
    min_response_length: int = 10
    forbidden_phrases: List[str] = []


class CognitiveBlueprint(BaseModel):
    """Top-level blueprint: everything that defines one agent's behavior."""
    identity: BlueprintIdentity
    goals: List[str]
    constraints: List[str] = []
    tools: List[str] = []
    memory: BlueprintMemory = BlueprintMemory()
    planning: BlueprintPlanning = BlueprintPlanning()
    validation: BlueprintValidation = BlueprintValidation()
    system_prompt_extra: str = ""


def load_blueprint_from_yaml(yaml_str: str) -> CognitiveBlueprint:
    # safe_load yields plain dicts/lists; Pydantic validates and coerces them (e.g. enums).
    return CognitiveBlueprint(**yaml.safe_load(yaml_str))


RESEARCH_AGENT_YAML = """
identity:
  name: ResearchBot
  version: 1.2.0
  description: Answers research questions using calculation and reasoning
  author: Auton Framework Demo
goals:
  - Answer user questions accurately using available tools
  - Show step-by-step reasoning for all answers
  - Cite the method used for each calculation
constraints:
  - Never fabricate numbers or statistics
  - Always validate mathematical results before reporting
  - Do not answer questions outside your tool capabilities
tools:
  - calculator
  - unit_converter
  - date_calculator
  - search_wikipedia_stub
memory:
  type: episodic
  window_size: 12
  summarize_after: 30
planning:
  strategy: sequential
  max_steps: 6
  max_retries: 2
  think_before_acting: true
validation:
  require_reasoning: true
  min_response_length: 20
  forbidden_phrases:
    - "I don't know"
    - "I cannot determine"
"""

DATA_ANALYST_YAML = """
identity:
  name: DataAnalystBot
  version: 2.0.0
  description: Performs statistical analysis and data summarization
  author: Auton Framework Demo
goals:
  - Compute descriptive statistics for given data
  - Identify trends and anomalies
  - Present findings clearly with numbers
constraints:
  - Only work with numerical data
  - Always report uncertainty when sample size is small (< 5 items)
tools:
  - calculator
  - statistics_engine
  - list_sorter
memory:
  type: short_term
  window_size: 6
planning:
  strategy: hierarchical
  max_steps: 10
  max_retries: 3
  think_before_acting: true
validation:
  require_reasoning: true
  min_response_length: 30
  forbidden_phrases: []
"""
```

We lay the foundation by defining a cognitive blueprint, which specifies how an agent thinks and behaves. Using Pydantic models and enums, we create strongly typed structures for identity, memory configuration, planning strategy, and validation rules. We also write two YAML blueprints, ResearchBot and DataAnalystBot, which let us configure different agents and personas without changing the underlying runtime.
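To see what the typed blueprint buys us without installing anything, here is a dependency-free sketch. It mirrors the planning section of the blueprint with a standard-library dataclass instead of Pydantic, and it assumes the YAML has already been parsed into a plain dict. `PlanningSection` and `from_dict` are hypothetical names for illustration. The behavior it reproduces is the important part: a `str`-based enum turns `"hierarchical"` into `PlanningStrategy.HIERARCHICAL` and rejects unknown strategies, while missing keys fall back to defaults.

```python
from dataclasses import dataclass, fields
from enum import Enum
from typing import Any, Dict


class PlanningStrategy(str, Enum):
    SEQUENTIAL = "sequential"
    HIERARCHICAL = "hierarchical"
    REACTIVE = "reactive"


@dataclass
class PlanningSection:
    # Hypothetical stand-in for the Pydantic BlueprintPlanning model.
    strategy: PlanningStrategy = PlanningStrategy.SEQUENTIAL
    max_steps: int = 8
    max_retries: int = 2
    think_before_acting: bool = True

    @classmethod
    def from_dict(cls, raw: Dict[str, Any]) -> "PlanningSection":
        # Reject misspelled keys instead of silently ignoring them.
        unknown = set(raw) - {f.name for f in fields(cls)}
        if unknown:
            raise ValueError(f"Unknown planning keys: {sorted(unknown)}")
        data = dict(raw)
        if "strategy" in data:
            # Lookup by value; raises ValueError for an unknown strategy string.
            data["strategy"] = PlanningStrategy(data["strategy"])
        for key in ("max_steps", "max_retries"):
            if key in data and not isinstance(data[key], int):
                raise TypeError(f"{key} must be an int")
        return cls(**data)


# Same values as the DataAnalystBot blueprint's planning section.
analyst = PlanningSection.from_dict({"strategy": "hierarchical", "max_steps": 10, "max_retries": 3})
print(analyst.strategy is PlanningStrategy.HIERARCHICAL, analyst.think_before_acting)  # True True
```

This sketch is deliberately stricter than Pydantic's default behavior, which silently ignores extra keys. A typo such as `max_step` therefore fails loudly here.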

```python
@dataclass
class ToolSpec:
    name: str
    description: str
    parameters: Dict[str, str]
    function: Callable
    returns: str


class ToolRegistry:
    def __init__(self):
        self._tools: Dict[str, ToolSpec] = {}

    def register(self, name: str, description: str,
                 parameters: Dict[str, str], returns: str):
        """Decorator that stores a function together with its metadata."""
        def decorator(fn: Callable) -> Callable:
            self._tools[name] = ToolSpec(name, description, parameters, fn, returns)
            return fn
        return decorator

    def get(self, name: str) -> Optional[ToolSpec]:
        return self._tools.get(name)

    def call(self, name: str, **kwargs) -> Any:
        spec = self._tools.get(name)
        if not spec:
            raise ValueError(f"Tool '{name}' not found in registry")
        return spec.function(**kwargs)

    def get_tool_descriptions(self, allowed: List[str]) -> str:
        """Render only the tools a blueprint allows, for use in the planner prompt."""
        lines = []
        for name in allowed:
            spec = self._tools.get(name)
            if spec:
                params = ", ".join(f"{k}: {v}" for k, v in spec.parameters.items())
                lines.append(
                    f"• {spec.name}({params})\n"
                    f"   → {spec.description}\n"
                    f"   Returns: {spec.returns}"
                )
        return "\n".join(lines)

    def list_tools(self) -> List[str]:
        return list(self._tools.keys())


registry = ToolRegistry()


@registry.register(
    name="calculator",
    description="Evaluates a safe mathematical expression",
    parameters={"expression": "A math expression string, e.g. '2 ** 10 + 5 * 3'"},
    returns="Numeric result as float"
)
def calculator(expression: str) -> str:
    try:
        # Expose only math-module names plus a few builtins, with __builtins__ emptied.
        # This narrows what eval() can reach but is not a full sandbox.
        allowed = {k: v for k, v in math.__dict__.items() if not k.startswith("_")}
        allowed.update({"abs": abs, "round": round, "pow": pow})
        return str(eval(expression, {"__builtins__": {}}, allowed))
    except Exception as e:
        return f"Error: {e}"


@registry.register(
    name="unit_converter",
    description="Converts between common units of measurement",
    parameters={
        "value": "Numeric value to convert",
        "from_unit": "Source unit (km, miles, kg, lbs, celsius, fahrenheit, liters, gallons, meters, feet)",
        "to_unit": "Target unit"
    },
    returns="Converted value as string with units"
)
def unit_converter(value: float, from_unit: str, to_unit: str) -> str:
    # Pairwise conversion table keyed by (source, target).
    conversions = {
        ("km", "miles"): lambda x: x * 0.621371,
        ("miles", "km"): lambda x: x * 1.60934,
        ("kg", "lbs"): lambda x: x * 2.20462,
        ("lbs", "kg"): lambda x: x / 2.20462,
        ("celsius", "fahrenheit"): lambda x: x * 9 / 5 + 32,
        ("fahrenheit", "celsius"): lambda x: (x - 32) * 5 / 9,
        ("liters", "gallons"): lambda x: x * 0.264172,
        ("gallons", "liters"): lambda x: x * 3.78541,
        ("meters", "feet"): lambda x: x * 3.28084,
        ("feet", "meters"): lambda x: x / 3.28084,
    }
    key = (from_unit.lower(), to_unit.lower())
    if key in conversions:
        return f"{conversions[key](float(value)):.4f} {to_unit}"
    return f"Conversion from {from_unit} to {to_unit} not supported"


@registry.register(
    name="date_calculator",
    description="Calculates days between two dates, or adds/subtracts days from a date",
    parameters={
        "operation": "'days_between' or 'add_days'",
        "date1": "Date string in YYYY-MM-DD format",
        "date2": "Second date for days_between (YYYY-MM-DD), or number of days for add_days"
    },
    returns="Result as string"
)
def date_calculator(operation: str, date1: str, date2: str) -> str:
    try:
        d1 = datetime.datetime.strptime(date1, "%Y-%m-%d")
        if operation == "days_between":
            d2 = datetime.datetime.strptime(date2, "%Y-%m-%d")
            return f"{abs((d2 - d1).days)} days between {date1} and {date2}"
        elif operation == "add_days":
            # A negative day count subtracts days.
            result = d1 + datetime.timedelta(days=int(date2))
            return f"{result.strftime('%Y-%m-%d')} (added {date2} days to {date1})"
        return f"Unknown operation: {operation}"
    except Exception as e:
        return f"Error: {e}"


@registry.register(
    name="search_wikipedia_stub",
    description="Returns a stub summary for well-known topics (demo — no live internet)",
    parameters={"topic": "Topic to look up"},
    returns="Short text summary"
)
def search_wikipedia_stub(topic: str) -> str:
    stubs = {
        "openai": "OpenAI is an AI research company founded in 2015. It created GPT-4 and the ChatGPT product.",
    }
    for key, val in stubs.items():
        if key in topic.lower():
            return val
    return f"No stub found for '{topic}'. In production, this would query Wikipedia's API."
```

We build a tool registry that lets agents discover and call external capabilities by name. Each tool is registered through a decorator together with its metadata (parameters, description, and return value), which the planner later renders into its prompt. We then register several practical tools that agents can use during execution: a calculator, a unit converter, a date calculator, and a stubbed Wikipedia lookup.

```python
@registry.register(
    name="statistics_engine",
    description="Computes descriptive statistics on a list of numbers",
    parameters={"numbers": "Comma-separated list of numbers, e.g. '4,8,15,16,23,42'"},
    returns="JSON with count, mean, median, std_dev, min, max, range"
)
def statistics_engine(numbers: str) -> str:
    try:
        nums = [float(x.strip()) for x in numbers.split(",")]
        n = len(nums)
        mean = sum(nums) / n
        sorted_nums = sorted(nums)
        mid = n // 2
        median = sorted_nums[mid] if n % 2 else (sorted_nums[mid - 1] + sorted_nums[mid]) / 2
        # Population standard deviation (divides by n, not n - 1).
        std_dev = math.sqrt(sum((x - mean) ** 2 for x in nums) / n)
        return json.dumps({
            "count": n, "mean": round(mean, 4), "median": round(median, 4),
            "std_dev": round(std_dev, 4), "min": min(nums),
            "max": max(nums), "range": max(nums) - min(nums)
        }, indent=2)
    except Exception as e:
        return f"Error: {e}"


@registry.register(
    name="list_sorter",
    description="Sorts a comma-separated list of numbers",
    parameters={"numbers": "Comma-separated numbers", "order": "'asc' or 'desc'"},
    returns="Sorted comma-separated list"
)
def list_sorter(numbers: str, order: str = "asc") -> str:
    nums = [float(x.strip()) for x in numbers.split(",")]
    nums.sort(reverse=(order == "desc"))
    return ", ".join(str(n) for n in nums)


@dataclass
class MemoryEntry:
    role: str
    content: str
    timestamp: float = field(default_factory=time.time)
    metadata: Dict = field(default_factory=dict)


class MemoryManager:
    def __init__(self, config: BlueprintMemory, llm_client: OpenAI):
        self.config = config
        self.client = llm_client
        self._history: List[MemoryEntry] = []
        self._summary: str = ""

    def add(self, role: str, content: str, metadata: Optional[Dict] = None):
        self._history.append(MemoryEntry(role=role, content=content, metadata=metadata or {}))
        # Only episodic memory compresses; short-term memory is truncated at read time.
        if (self.config.type == MemoryType.EPISODIC and
                len(self._history) > self.config.summarize_after):
            self._compress_memory()

    def _compress_memory(self):
        # Keep the newest window_size entries verbatim and summarize everything older.
        to_compress = self._history[:-self.config.window_size]
        self._history = self._history[-self.config.window_size:]
        text = "\n".join(f"{e.role}: {e.content[:200]}" for e in to_compress)
        try:
            resp = self.client.chat.completions.create(
                model="gpt-4o-mini",
                messages=[{"role": "user", "content":
                           f"Summarize this conversation history in 3 sentences:\n{text}"}],
                max_tokens=150
            )
            self._summary += " " + resp.choices[0].message.content.strip()
        except Exception:
            # Fall back to a placeholder if the summarization call fails.
            self._summary += f" [compressed {len(to_compress)} messages]"

    def get_messages(self, system_prompt: str) -> List[Dict]:
        """Assemble the chat context: system prompt, running summary, recent window."""
        messages = [{"role": "system", "content": system_prompt}]
        if self._summary:
            messages.append({"role": "system",
                             "content": f"[Memory Summary]: {self._summary.strip()}"})
        for entry in self._history[-self.config.window_size:]:
            messages.append({
                # Tool-role messages need a tool_call_id in the Chat Completions API,
                # so tool output is replayed as assistant text.
                "role": entry.role if entry.role != "tool" else "assistant",
                "content": entry.content
            })
        return messages

    def clear(self):
        self._history = []
        self._summary = ""

    @property
    def message_count(self) -> int:
        return len(self._history)
```

We expand the tool ecosystem and introduce a memory-management layer that stores conversation history and compresses it when needed. The statistics and sorting tools let the data-analysis agent perform structured numerical operations. The memory system tracks interactions and, for episodic blueprints, summarizes long histories with the LLM. It also assembles the context messages sent to the language model on each call.
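The interaction between `window_size` and `summarize_after` is easier to see without the LLM in the loop. This sketch reimplements only the windowing logic of `MemoryManager`. A hypothetical `stub_summarize` function replaces the `gpt-4o-mini` call so the example runs offline. As in the original, compression happens only when the history grows past `summarize_after`; `short_term` memory in the article never compresses and simply sends the last `window_size` messages.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def stub_summarize(messages: List[str]) -> str:
    """Hypothetical offline stand-in for the LLM summarization call."""
    return f"[summary of {len(messages)} messages]"


@dataclass
class WindowedMemory:
    window_size: int
    summarize_after: int
    summarize: Callable[[List[str]], str] = stub_summarize
    history: List[str] = field(default_factory=list)
    summary: List[str] = field(default_factory=list)

    def add(self, message: str) -> None:
        self.history.append(message)
        # Same trigger as MemoryManager: compress once history exceeds the threshold.
        if len(self.history) > self.summarize_after:
            older = self.history[:-self.window_size]
            self.history = self.history[-self.window_size:]
            self.summary.append(self.summarize(older))


mem = WindowedMemory(window_size=12, summarize_after=30)  # ResearchBot's settings
for i in range(31):
    mem.add(f"msg {i}")
print(len(mem.history), mem.summary)  # 12 ['[summary of 19 messages]']
```

With ResearchBot's settings, the 31st message triggers one compression. The oldest 19 messages are folded into a summary and the latest 12 stay verbatim.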

```python
@dataclass
class PlanStep:
    step_id: int
    description: str
    tool: Optional[str]
    tool_args: Dict[str, Any]
    reasoning: str


@dataclass
class Plan:
    task: str
    steps: List[PlanStep]
    strategy: PlanningStrategy


class Planner:
    def __init__(self, blueprint: CognitiveBlueprint,
                 registry: ToolRegistry, llm_client: OpenAI):
        self.blueprint = blueprint
        self.registry = registry
        self.client = llm_client

    def _build_planner_prompt(self) -> str:
        """Turn the blueprint (identity, goals, constraints, tools) into a system prompt."""
        bp = self.blueprint
        # Note: interpolated multi-line values start at column 0, so dedent may leave
        # the template lines indented; this is cosmetic for the LLM.
        return textwrap.dedent(f"""
            You are {bp.identity.name}, version {bp.identity.version}.
            {bp.identity.description}

            ## Your Goals:
            {chr(10).join(f'  - {g}' for g in bp.goals)}

            ## Your Constraints:
            {chr(10).join(f'  - {c}' for c in bp.constraints)}

            ## Available Tools:
            {self.registry.get_tool_descriptions(bp.tools)}

            ## Planning Strategy: {bp.planning.strategy.value}
            ## Max Steps: {bp.planning.max_steps}

            Given a user task, produce a JSON execution plan with this exact structure:
            {{
              "steps": [
                {{
                  "step_id": 1,
                  "description": "What this step does",
                  "tool": "tool_name or null if no tool needed",
                  "tool_args": {{"arg1": "value1"}},
                  "reasoning": "Why this step is needed"
                }}
              ]
            }}

            Rules:
            - Only use tools listed above
            - Set tool to null for pure reasoning steps
            - Keep steps <= {bp.planning.max_steps}
            - Return ONLY valid JSON, no markdown fences
            {bp.system_prompt_extra}
        """).strip()

    def plan(self, task: str, memory: MemoryManager) -> Plan:
        system_prompt = self._build_planner_prompt()
        messages = memory.get_messages(system_prompt)
        messages.append({"role": "user", "content":
                         f"Create a plan to complete this task: {task}"})
        resp = self.client.chat.completions.create(
            model="gpt-4o-mini", messages=messages,
            max_tokens=1200, temperature=0.2
        )
        raw = resp.choices[0].message.content.strip()
        # Strip markdown fences in case the model ignores the "no fences" rule.
        raw = raw.replace("```json", "").replace("```", "").strip()
        data = json.loads(raw)
        steps = [
            PlanStep(
                step_id=s["step_id"], description=s["description"],
                tool=s.get("tool"), tool_args=s.get("tool_args") or {},
                reasoning=s.get("reasoning", "")
            )
            for s in data["steps"]
        ]
        return Plan(task=task, steps=steps, strategy=self.blueprint.planning.strategy)


@dataclass
class StepResult:
    step_id: int
    success: bool
    output: str
    tool_used: Optional[str]
    error: Optional[str] = None


@dataclass
class ExecutionTrace:
    plan: Plan
    results: List[StepResult]
    final_answer: str


class Executor:
    def __init__(self, blueprint: CognitiveBlueprint,
                 registry: ToolRegistry, llm_client: OpenAI):
        self.blueprint = blueprint
        self.registry = registry
        self.client = llm_client
```

We implement a planning system that turns the user's task into a structured, multi-step plan. The planner prompts the language model to return a JSON plan in which each step carries its reasoning, the selected tool (if any), and that tool's arguments. This planning layer lets the agent break a complex problem into small, actionable steps before executing any of them.
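Because the planner passes raw model output straight to `json.loads`, a malformed or over-long plan only surfaces later as an exception. A defensive parsing step can catch this up front. The sketch below is a hypothetical helper (`parse_plan` is not part of the original code) with these checks:

- strips markdown fences;
- checks each step for the required keys;
- normalizes a `"null"` string tool to `None`;
- rejects tools outside the blueprint's allowlist;
- truncates the plan to `max_steps`.

`PlanStep` mirrors the article's dataclass.

```python
import json
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass
class PlanStep:
    step_id: int
    description: str
    tool: Optional[str]
    tool_args: Dict[str, Any]
    reasoning: str


def parse_plan(raw: str, allowed_tools: List[str], max_steps: int) -> List[PlanStep]:
    """Hypothetical defensive parser for the planner's JSON output."""
    text = raw.strip()
    if text.startswith("```"):
        # Drop the opening fence line (with or without a language tag) and the closing fence.
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rsplit("```", 1)[0]
    data = json.loads(text)
    steps: List[PlanStep] = []
    for s in data.get("steps", [])[:max_steps]:
        missing = {"step_id", "description"} - set(s)
        if missing:
            raise ValueError(f"Step missing keys: {sorted(missing)}")
        tool = s.get("tool")
        if tool in (None, "", "null"):
            tool = None
        elif tool not in allowed_tools:
            raise ValueError(f"Tool '{tool}' is not allowed by this blueprint")
        steps.append(PlanStep(int(s["step_id"]), s["description"], tool,
                              s.get("tool_args") or {}, s.get("reasoning", "")))
    return steps


example = {"steps": [
    {"step_id": 1, "description": "Compute 15% of 2500", "tool": "calculator",
     "tool_args": {"expression": "0.15 * 2500"}, "reasoning": "Direct arithmetic"},
    {"step_id": 2, "description": "Report the result", "tool": "null",
     "reasoning": "Summarize for the user"},
]}
raw = "```json\n" + json.dumps(example) + "\n```"
plan = parse_plan(raw, allowed_tools=["calculator"], max_steps=6)
print([(s.step_id, s.tool) for s in plan])  # [(1, 'calculator'), (2, None)]
```

Raising early on a disallowed tool means the runtime's existing `try/except` around planning treats the plan as failed. The bad call therefore never reaches the executor.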

```python
    # (continued) methods of the Executor class started in the previous block

    def execute_plan(self, plan: Plan, memory: MemoryManager,
                     verbose: bool = True) -> ExecutionTrace:
        results: List[StepResult] = []
        if verbose:
            console.print(f"\n[bold yellow]⚡ Executing:[/] {plan.task}")
            console.print(f"   Strategy: {plan.strategy.value} | Steps: {len(plan.steps)}")
        for step in plan.steps:
            if verbose:
                console.print(f"\n  [cyan]Step {step.step_id}:[/] {step.description}")
            try:
                if step.tool and step.tool != "null":
                    # Tool step: dispatch to the registered function.
                    if verbose:
                        console.print(f"    🔧 Tool: [green]{step.tool}[/] | Args: {step.tool_args}")
                    output = self.registry.call(step.tool, **step.tool_args)
                    result = StepResult(step.step_id, True, str(output), step.tool)
                    if verbose:
                        console.print(f"    ✅ Result: {output}")
                else:
                    # Reasoning step: ask the LLM, passing along earlier step outputs.
                    context_text = "\n".join(
                        f"Step {r.step_id} result: {r.output}" for r in results)
                    prompt = (
                        f"Previous results:\n{context_text}\n\n"
                        f"Now complete this step: {step.description}\n"
                        f"Reasoning hint: {step.reasoning}"
                    ) if context_text else (
                        f"Complete this step: {step.description}\n"
                        f"Reasoning hint: {step.reasoning}"
                    )
                    sys_prompt = (
                        f"You are {self.blueprint.identity.name}. "
                        f"{self.blueprint.identity.description}. "
                        f"Constraints: {'; '.join(self.blueprint.constraints)}"
                    )
                    resp = self.client.chat.completions.create(
                        model="gpt-4o-mini",
                        messages=[
                            {"role": "system", "content": sys_prompt},
                            {"role": "user", "content": prompt}
                        ],
                        max_tokens=500, temperature=0.3
                    )
                    output = resp.choices[0].message.content.strip()
                    result = StepResult(step.step_id, True, output, None)
                    if verbose:
                        preview = output[:120] + "..." if len(output) > 120 else output
                        console.print(f"    🤔 Reasoning: {preview}")
            except Exception as e:
                # Record the failure and continue with the remaining steps.
                result = StepResult(step.step_id, False, "", step.tool, str(e))
                if verbose:
                    console.print(f"    ❌ Error: {e}")
            results.append(result)
        final_answer = self._synthesize(plan, results, memory)
        return ExecutionTrace(plan=plan, results=results, final_answer=final_answer)

    def _synthesize(self, plan: Plan, results: List[StepResult],
                    memory: MemoryManager) -> str:
        """Combine all step outputs into one final answer with a last LLM call."""
        steps_summary = "\n".join(
            f"Step {r.step_id} ({'✅' if r.success else '❌'}): {r.output[:300]}"
            for r in results
        )
        synthesis_prompt = (
            f"Original task: {plan.task}\n\n"
            f"Step results:\n{steps_summary}\n\n"
            f"Provide a clear, complete final answer. Integrate all step results."
        )
        sys_prompt = (
            f"You are {self.blueprint.identity.name}. "
            + ("Always show your reasoning. " if self.blueprint.validation.require_reasoning else "")
            + f"Goals: {'; '.join(self.blueprint.goals)}"
        )
        messages = memory.get_messages(sys_prompt)
        messages.append({"role": "user", "content": synthesis_prompt})
        resp = self.client.chat.completions.create(
            model="gpt-4o-mini", messages=messages,
            max_tokens=600, temperature=0.3
        )
        return resp.choices[0].message.content.strip()


@dataclass
class ValidationResult:
    passed: bool
    issues: List[str]
    score: float


class Validator:
    def __init__(self, blueprint: CognitiveBlueprint, llm_client: OpenAI):
        self.blueprint = blueprint
        self.client = llm_client

    def validate(self, answer: str, task: str,
                 use_llm_check: bool = False) -> ValidationResult:
        issues = []
        v = self.blueprint.validation
        # Rule 1: minimum length.
        if len(answer) < v.min_response_length:
            issues.append(f"Response too short: {len(answer)} chars (min: {v.min_response_length})")
        # Rule 2: forbidden phrases (case-insensitive).
        answer_lower = answer.lower()
        for phrase in v.forbidden_phrases:
            if phrase.lower() in answer_lower:
                issues.append(f"Forbidden phrase detected: '{phrase}'")
        # Rule 3: keyword heuristic for visible reasoning.
        if v.require_reasoning:
            indicators = ["because", "therefore", "since", "step", "first",
                          "result", "calculated", "computed", "found that"]
            if not any(ind in answer_lower for ind in indicators):
                issues.append("Response lacks visible reasoning or explanation")
        # Optional: ask the LLM whether the answer is on topic.
        if use_llm_check:
            issues.extend(self._llm_quality_check(answer, task))
        # Each issue costs 0.25 points, floored at 0.
        return ValidationResult(passed=len(issues) == 0,
                                issues=issues,
                                score=max(0.0, 1.0 - len(issues) * 0.25))

    def _llm_quality_check(self, answer: str, task: str) -> List[str]:
        prompt = (
            f"Task: {task}\n\nAnswer: {answer[:500]}\n\n"
            f'Does this answer address the task? Reply JSON: {{"on_topic": true/false, "issue": "..."}}'
        )
        try:
            resp = self.client.chat.completions.create(
                model="gpt-4o-mini",
                messages=[{"role": "user", "content": prompt}],
                max_tokens=100
            )
            raw = resp.choices[0].message.content.strip().replace("```json", "").replace("```", "")
            data = json.loads(raw)
            if not data.get("on_topic", True):
                return [f"LLM quality check: {data.get('issue', 'off-topic')}"]
        except Exception:
            pass  # a failed check counts as "no issue" rather than failing validation
        return []
```

We build the executor and validation logic that carry out the steps generated by the planner. Depending on how a step is defined, the executor either calls a registered tool or asks the language model to reason through it. A final synthesis call then combines all step results into one answer. We also add a validator that checks this answer against the blueprint's validation rules (minimum response length, visible reasoning, and forbidden phrases), with an optional LLM on-topic check.
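The executor's control flow can be exercised offline by replacing the OpenAI call with a stub. Each step is dispatched either to a tool or to the LLM, and a failure is recorded per step instead of aborting the plan. In this sketch, `run_steps`, the `tools` dict, and `fake_reason` are hypothetical stand-ins for `Executor.execute_plan`, the `ToolRegistry`, and the chat-completion call. It also enforces a tool allowlist, which the original executor does not do: it will call any registered tool the planner names.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Step:
    step_id: int
    description: str
    tool: Optional[str] = None
    tool_args: Optional[Dict[str, Any]] = None


@dataclass
class StepResult:
    step_id: int
    success: bool
    output: str
    tool_used: Optional[str]
    error: Optional[str] = None


def run_steps(steps: List[Step], tools: Dict[str, Callable[..., Any]], allowed: List[str],
              reason: Callable[[str, List[StepResult]], str]) -> List[StepResult]:
    results: List[StepResult] = []
    for step in steps:
        try:
            if step.tool:
                if step.tool not in allowed:
                    raise PermissionError(f"tool '{step.tool}' not allowed")
                output = tools[step.tool](**(step.tool_args or {}))
            else:
                # Reasoning step: the stub sees earlier results, like the LLM prompt does.
                output = reason(step.description, results)
            results.append(StepResult(step.step_id, True, str(output), step.tool))
        except Exception as exc:  # record the failure and keep going
            results.append(StepResult(step.step_id, False, "", step.tool, str(exc)))
    return results


def fake_reason(description: str, previous: List[StepResult]) -> str:
    # Hypothetical offline stand-in for the chat-completion call.
    return f"{description}: " + ", ".join(r.output for r in previous if r.success)


steps = [
    Step(1, "Percent", "percent_of", {"pct": 15, "value": 2500}),
    Step(2, "Sort", "list_sorter", {"numbers": "3,1,2"}),  # not in the allowlist
    Step(3, "Summarize"),
]
results = run_steps(steps, {"percent_of": lambda pct, value: pct * value / 100},
                    ["percent_of"], fake_reason)
for r in results:
    print(r.step_id, r.success, r.output or r.error)
```

Step 2 fails with a recorded error, but step 3 still runs and sees step 1's output. This matches how the original executor keeps going after a failed step.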

```python
@dataclass
class AgentResponse:
    agent_name: str
    task: str
    final_answer: str
    trace: Optional[ExecutionTrace]
    validation: Optional[ValidationResult]
    retries: int
    total_steps: int


class RuntimeEngine:
    """Wires one blueprint to its memory, planner, executor, and validator."""
    def __init__(self, blueprint: CognitiveBlueprint,
                 registry: ToolRegistry, llm_client: OpenAI):
        self.blueprint = blueprint
        self.memory = MemoryManager(blueprint.memory, llm_client)
        self.planner = Planner(blueprint, registry, llm_client)
        self.executor = Executor(blueprint, registry, llm_client)
        self.validator = Validator(blueprint, llm_client)

    def run(self, task: str, verbose: bool = True) -> AgentResponse:
        bp = self.blueprint
        if verbose:
            console.print(Panel(
                f"[bold]Agent:[/] {bp.identity.name} v{bp.identity.version}\n"
                f"[bold]Task:[/] {task}\n"
                f"[bold]Strategy:[/] {bp.planning.strategy.value} | "
                f"Max Steps: {bp.planning.max_steps} | "
                f"Max Retries: {bp.planning.max_retries}",
                title="🚀 Runtime Engine Starting", border_style="blue"
            ))
        self.memory.add("user", task)
        retries, trace, validation = 0, None, None

        for attempt in range(bp.planning.max_retries + 1):
            if attempt > 0 and verbose:
                console.print(f"\n[yellow]⟳ Retry {attempt}/{bp.planning.max_retries}[/]")
                console.print(f"   Issues: {', '.join(validation.issues)}")

            # Phase 1: plan
            if verbose:
                console.print("\n[bold magenta]📋 Phase 1: Planning...[/]")
            try:
                plan = self.planner.plan(task, self.memory)
                if verbose:
                    tree = Tree(f"[bold]Plan ({len(plan.steps)} steps)[/]")
                    for s in plan.steps:
                        icon = "🔧" if s.tool else "🤔"
                        branch = tree.add(f"{icon} Step {s.step_id}: {s.description}")
                        if s.tool:
                            branch.add(f"[green]Tool:[/] {s.tool}")
                            branch.add(f"[yellow]Args:[/] {s.tool_args}")
                    console.print(tree)
            except Exception as e:
                if verbose:
                    console.print(f"[red]Planning failed:[/] {e}")
                break

            # Phase 2: execute
            if verbose:
                console.print("\n[bold magenta]⚡ Phase 2: Executing...[/]")
            trace = self.executor.execute_plan(plan, self.memory, verbose=verbose)

            # Phase 3: validate
            if verbose:
                console.print("\n[bold magenta]✅ Phase 3: Validating...[/]")
            validation = self.validator.validate(trace.final_answer, task)
            if verbose:
                status = "[green]PASSED[/]" if validation.passed else "[red]FAILED[/]"
                console.print(f"   Validation: {status} | Score: {validation.score:.2f}")
                for issue in validation.issues:
                    console.print(f"   ⚠️  {issue}")
            if validation.passed:
                break
            # Feed the issues back through memory, but only if another attempt remains.
            if attempt < bp.planning.max_retries:
                retries += 1
                self.memory.add("assistant", trace.final_answer)
                self.memory.add("user",
                                f"Your previous answer had issues: {'; '.join(validation.issues)}. "
                                f"Please improve.")

        if trace:
            self.memory.add("assistant", trace.final_answer)
        if verbose:
            console.print(Panel(
                trace.final_answer if trace else "No answer generated",
                title=f"🎯 Final Answer — {bp.identity.name}",
                border_style="green"
            ))
        return AgentResponse(
            agent_name=bp.identity.name, task=task,
            final_answer=trace.final_answer if trace else "",
            trace=trace, validation=validation,
            retries=retries,
            total_steps=len(trace.results) if trace else 0
        )

    def reset_memory(self):
        self.memory.clear()


def build_engine(blueprint_yaml: str, registry: ToolRegistry,
                 llm_client: OpenAI) -> RuntimeEngine:
    return RuntimeEngine(load_blueprint_from_yaml(blueprint_yaml), registry, llm_client)


if __name__ == "__main__":
    print("\n" + "=" * 60)
    print("DEMO 1: ResearchBot")
    print("=" * 60)
    research_engine = build_engine(RESEARCH_AGENT_YAML, registry, client)
    research_engine.run(
        task=(
            "how many steps of 20cm height would that be? Also, if I burn 0.15 "
            "calories per step, what's the total calorie burn? Show all calculations."
        )
    )

    print("\n" + "=" * 60)
    print("DEMO 2: DataAnalystBot")
    print("=" * 60)
    analyst_engine = build_engine(DATA_ANALYST_YAML, registry, client)
    analyst_engine.run(
        task=(
            "Analyze this dataset of monthly sales figures (in thousands): "
            "142, 198, 173, 155, 221, 189, 203, 167, 244, 198, 212, 231. "
            "Compute key statistics, identify the best and worst months, "
            "and calculate growth from first to last month."
        )
    )

    print("\n" + "=" * 60)
    print("PORTABILITY DEMO: Same task → 2 different blueprints")
    print("=" * 60)
    SHARED_TASK = "Calculate 15% of 2,500 and tell me the result."
    responses = {}
    for name, yaml_str in [
        ("ResearchBot", RESEARCH_AGENT_YAML),
        ("DataAnalystBot", DATA_ANALYST_YAML),
    ]:
        eng = build_engine(yaml_str, registry, client)
        responses[name] = eng.run(SHARED_TASK, verbose=False)

    table = Table(title="🔄 Blueprint Portability", show_header=True, show_lines=True)
    table.add_column("Agent", style="cyan", width=18)
    table.add_column("Steps", style="yellow", width=6)
    table.add_column("Valid?", width=7)
    table.add_column("Score", width=6)
    table.add_column("Answer Preview", width=55)
    for name, r in responses.items():
        # validation is None if planning failed on the first attempt.
        passed = r.validation is not None and r.validation.passed
        score = r.validation.score if r.validation else 0.0
        preview = r.final_answer[:140] + ("..." if len(r.final_answer) > 140 else "")
        table.add_row(name, str(r.total_steps), "✅" if passed else "❌",
                      f"{score:.2f}", preview)
    console.print(table)
```

We assemble a runtime engine that orchestrates planning, execution, memory updates, and validation, with automatic retries, in a single workflow. We then run two demonstrations showing how different blueprints produce different behavior on top of the same core runtime. Finally, we demonstrate blueprint portability by giving the same task to both agents and comparing their results.
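The validator's checks are heuristic (length, keywords, forbidden phrases), so they cannot tell whether the numbers in an answer are right. A cheap complement is to compute the expected values for the demo tasks deterministically and compare them with the agent's answer. This sketch does that with the standard library's `statistics` module for the DataAnalystBot dataset and the shared 15%-of-2,500 task. It is a hypothetical check, not part of the framework. `pstdev` matches the population formula used by `statistics_engine`, whereas `statistics.stdev` would give the sample (n - 1) version.

```python
import statistics

sales = [142, 198, 173, 155, 221, 189, 203, 167, 244, 198, 212, 231]

expected = {
    "mean": round(statistics.mean(sales), 4),
    "median": statistics.median(sales),
    "pop_std_dev": round(statistics.pstdev(sales), 4),  # same formula as statistics_engine
    "best_month": sales.index(max(sales)) + 1,          # 1-based month number
    "worst_month": sales.index(min(sales)) + 1,
    "growth_pct": round((sales[-1] - sales[0]) / sales[0] * 100, 2),
}
shared_task_answer = 2500 * 15 / 100

print(expected)
print(shared_task_answer)  # 375.0
```

For this dataset the mean is about 194.42 and the median is 198. Month 9 (244) is the best and month 1 (142) the worst, and first-to-last growth is about 62.68%. Any agent answer that disagrees with these figures deserves a closer look, even if it passed validation.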

In conclusion, we built a fully functional Auton-style runtime system that integrates blueprints, tool registration, memory management, planning, execution, and validation into a unified framework. We showed how different agents can share the same underlying runtime while behaving differently through customized blueprints, which highlights the flexibility of the design. This implementation illustrates how runtime agents work. It also provides a solid foundation that can be extended with richer tools, more robust memory systems, and more advanced autonomous behavior.
