Supervised Synthetic Data: How Human Expertise Makes Scale Usable for AI

AI teams are under constant pressure to move faster. They need more data, more diversity, and broader coverage across contexts, languages, and formats. This is one of the reasons synthetic data is so attractive: it helps teams generate training data at a speed that manual collection alone often cannot match.

But there is a catch. Synthetic data can increase volume quickly, but volume alone does not guarantee value. If the generated samples are inaccurate, poorly grounded, or poorly validated, teams can end up amplifying noise instead of signal.

This is where supervised synthetic data comes in. It combines machine-generated scale with human judgment, review, and quality control so that the output is not just bigger, but better.

Why synthetic data is getting attention now

For many teams, the barrier is no longer access to a model. It is data readiness. They need datasets that are broad enough to cover rare cases, structured enough to support fine-tuning, and reliable enough to be trusted in production.

Synthetic data is useful because it can fill gaps, simulate conditions that are difficult to capture, and reduce reliance on expensive or privacy-sensitive collection workflows. At the same time, governance and oversight still matter. Frameworks such as the NIST AI Risk Management Framework emphasize reliability, testing, and risk assessment throughout the AI lifecycle (Source: NIST, 2024).

What supervised synthetic data means in practice

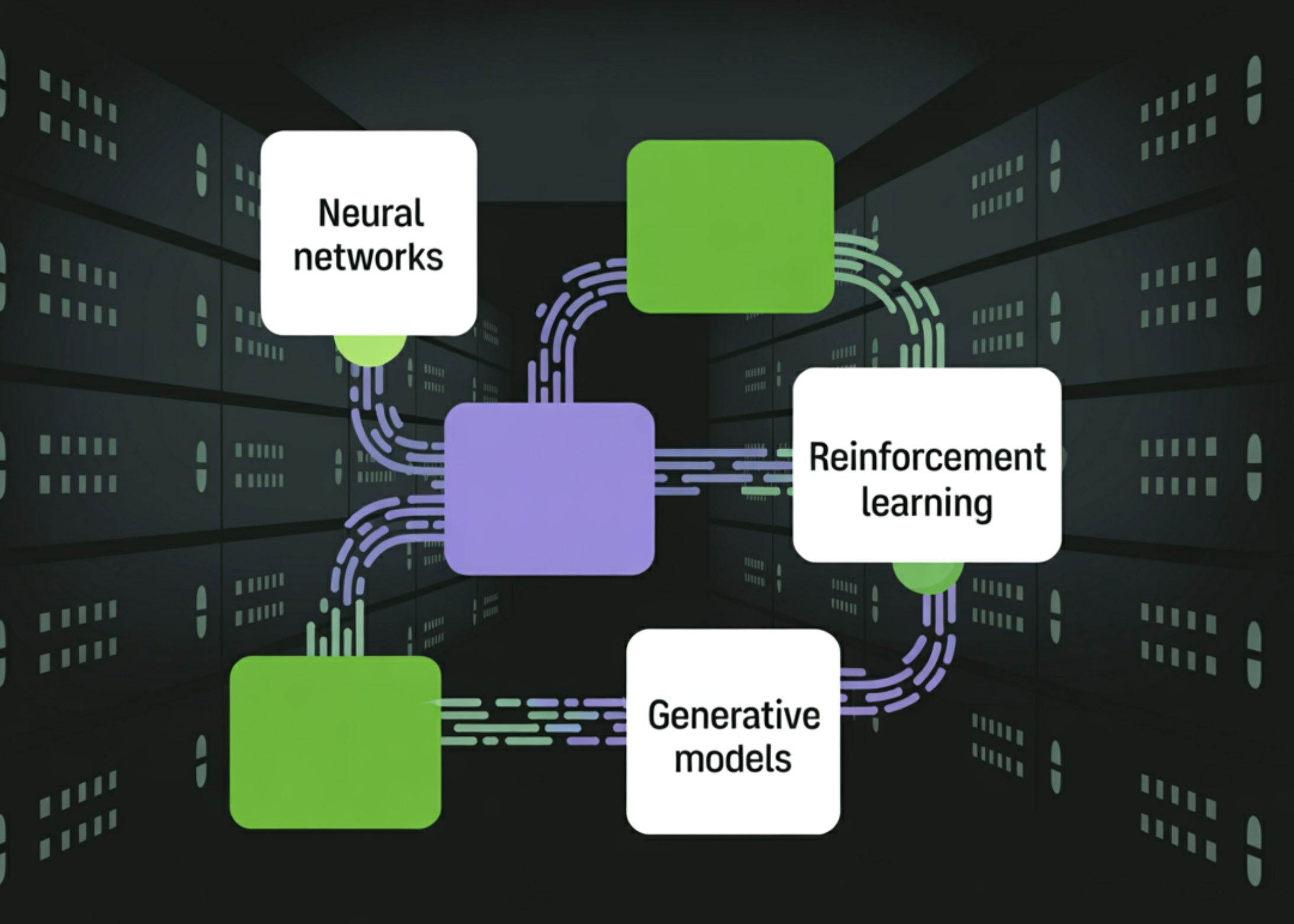

At a basic level, synthetic data is artificially generated data designed to reflect the patterns, structures, or conditions needed for model training and testing.

Supervised synthetic data adds another layer: people define what “good” looks like before, during, and after generation. They shape the instructions, clarify edge cases, review uncertain outputs, and verify whether the data actually improves the model’s results.

Think of it as a flight simulator with an instructor. The simulator provides scale and repetition. The instructor ensures that the pilot learns proper behavior instead of practicing mistakes. Supervised synthetic data works the same way. Generation gives you speed. Human guidance keeps that speed pointed in the right direction.

Comparison table: synthetic-only vs. supervised synthetic vs. standard human-labeled pipelines

The table shows why supervised synthetic data is so attractive. It preserves most of the scale benefits of automated generation while reducing the quality drift that pure automation can introduce.

Where purely automated workflows tend to fall short

The first problem is realism. Generated examples may look sound but miss subtle patterns that matter in production.

The second problem is edge cases. Rare situations are often the reason teams turn to synthetic data, but those situations are easy to oversimplify unless domain experts are involved.

The third problem is evaluation. Many teams ask, “How much data did we generate?” before asking, “Does this data improve the model?” NIST’s work on AI test, evaluation, verification, and validation (TEVV) highlights the importance of measurable testing and of evaluating performance in context, not just volume of output (Source: NIST, 2025). See the NIST guidance on TEVV.

An operational model for high-quality synthetic data

Robust supervised synthetic data pipelines often begin with task design, not generation. That means clear instructions, labeled examples, step-by-step descriptions, and an agreed-upon quality rubric.

Next come automated validators. These catch avoidable problems early: duplicates, missing fields, invalid answers, obvious contradictions, profanity, or formatting failures. That way, human reviewers spend their time judging rather than cleaning.
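A minimal sketch of what such automated checks might look like, in Python. The field names (`question`, `answer`), the placeholder blocklist, and the function name `validate_batch` are assumptions made for illustration, not part of any specific tool; a real pipeline would add its own schema and contradiction checks.

```python
# Hypothetical schema and blocklist, chosen only for this illustration.
REQUIRED_FIELDS = ("question", "answer")
BLOCKED_TERMS = {"damn"}


def _text(sample, name):
    """Return a field as stripped text, treating None and absent fields as empty."""
    return str(sample.get(name) or "").strip()


def validate_batch(samples):
    """Split samples into (passed, rejected); rejected holds (sample, reasons) pairs."""
    seen_questions = set()
    passed, rejected = [], []
    for sample in samples:
        reasons = []

        # Missing or empty required fields.
        missing = [f for f in REQUIRED_FIELDS if not _text(sample, f)]
        if missing:
            reasons.append("missing fields: " + ", ".join(missing))

        # Duplicates, compared after lowercasing and collapsing whitespace.
        key = " ".join(_text(sample, "question").lower().split())
        if key and key in seen_questions:
            reasons.append("duplicate question")
        seen_questions.add(key)

        # Simple blocklist check on whitespace-separated answer tokens.
        if set(_text(sample, "answer").lower().split()) & BLOCKED_TERMS:
            reasons.append("blocked term")

        if reasons:
            rejected.append((sample, reasons))
        else:
            passed.append(sample)
    return passed, rejected
```

Keeping the rejection reasons alongside each sample makes it easier to see which kinds of failure dominate a batch, which in turn can inform changes to the generation instructions.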

Then comes targeted human review. Not every sample requires expert attention. But ambiguous, high-risk, or context-sensitive items often do. This is where experienced reviewers can improve consistency and prevent silent dataset failures.
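One way to sketch this routing step in Python is shown below. It assumes each sample carries a `confidence` score and a list of `tags`; both fields, the threshold value, and the tag names are hypothetical and would depend on how a given pipeline scores and labels its samples.

```python
def route_for_review(samples, confidence_threshold=0.8,
                     high_risk_tags=frozenset({"safety", "legal"})):
    """Split samples into (auto_accept, needs_review).

    A sample goes to human review if its confidence is below the threshold
    or if it carries any high-risk tag. Samples without a confidence score
    are treated as uncertain and sent to review.
    """
    auto_accept, needs_review = [], []
    for sample in samples:
        low_confidence = sample.get("confidence", 0.0) < confidence_threshold
        high_risk = bool(high_risk_tags & set(sample.get("tags", ())))
        if low_confidence or high_risk:
            needs_review.append(sample)
        else:
            auto_accept.append(sample)
    return auto_accept, needs_review
```

Defaulting unscored samples to review is a deliberately conservative choice in this sketch; a team with a large review backlog might prefer a different default.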

Finally, the best teams close the loop. They use gold data, benchmark sets, and downstream model performance to see whether synthetic data really helps. That operational discipline reflects Shaip’s emphasis on expert data annotation, AI data platforms with quality control, and generative AI training data workflows.
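As a rough illustration of closing the loop, the Python sketch below compares a baseline model and a candidate model (trained with the new synthetic batch) on the same gold set. Accuracy is used here only because it is simple; the function names and the `min_gain` parameter are assumptions, and a real benchmark would likely use task-specific metrics.

```python
def accuracy(predictions, gold_labels):
    """Fraction of predictions that exactly match the gold labels."""
    if not gold_labels:
        raise ValueError("gold set must not be empty")
    if len(predictions) != len(gold_labels):
        raise ValueError("predictions and gold labels must have equal length")
    correct = sum(p == g for p, g in zip(predictions, gold_labels))
    return correct / len(gold_labels)


def should_accept_batch(baseline_predictions, candidate_predictions,
                        gold_labels, min_gain=0.0):
    """Return (accept, baseline_accuracy, candidate_accuracy).

    The batch is accepted only if the candidate model beats the baseline
    on the gold set by at least min_gain.
    """
    baseline = accuracy(baseline_predictions, gold_labels)
    candidate = accuracy(candidate_predictions, gold_labels)
    return candidate - baseline >= min_gain, baseline, candidate
```

For example, with a four-item gold set, a baseline that gets three items right and a candidate that gets all four right would pass a `min_gain` of 0.1, while the reverse comparison would not.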

What this looks like in the real world

Consider a team building a support assistant for a specialized industry. They generate thousands of samples in a few days and feel good about the output. On paper, the dataset looks diverse. In testing, however, the model struggles with ambiguous requests, domain-specific terminology, and exceptions to the rule.

Why? Because the generated data captured the general pattern, but not the messy conditions of the real world.

The team then redesigns the workflow. They strengthen the instructions, add examples of borderline cases, introduce validators for common formatting errors, and send uncertain samples to domain reviewers. They also create a small gold dataset for benchmarking before each new batch is accepted.

The result is not just more data. It is more reliable data.

A decision framework for responsible use of synthetic data

Use synthetic data when you need scale, privacy-conscious data augmentation, rare-event coverage, or rapid iteration.

Supplement with real-world data if the task relies heavily on authentic behavior, real-world distributions, or ambiguities that are difficult to simulate.

Before scaling, ask three practical questions:

- What failure would be most damaging if this data were incorrect?

- Which samples can be validated automatically, and which ones require human judgment?

- What benchmark will prove that the new data has improved the model?

If those questions don’t have clear answers, the pipeline probably isn’t ready to scale.

Conclusion

Synthetic data is most valuable when it is treated as a quality system, not a content factory. Machine generation can provide speed and scope, but it is human expertise that turns that scale into something usable.

The teams that get the most from synthetic data aren’t the ones that generate the most rows. They are the ones that build the tightest review loops, validators, benchmarks, and decision rules around it.