How to build a “humble” AI | MIT News

Artificial intelligence holds the promise of helping doctors diagnose patients and personalize treatment options. However, an international group of scientists led by MIT warns that AI systems, as currently designed, risk steering doctors in the wrong direction because they may make incorrect decisions with too much confidence.

One way to prevent these errors is to design AI systems to be “humble,” according to the researchers. Such systems will indicate when they are uncertain about a diagnosis or their recommendations and can encourage users to gather additional information when the diagnosis is uncertain.

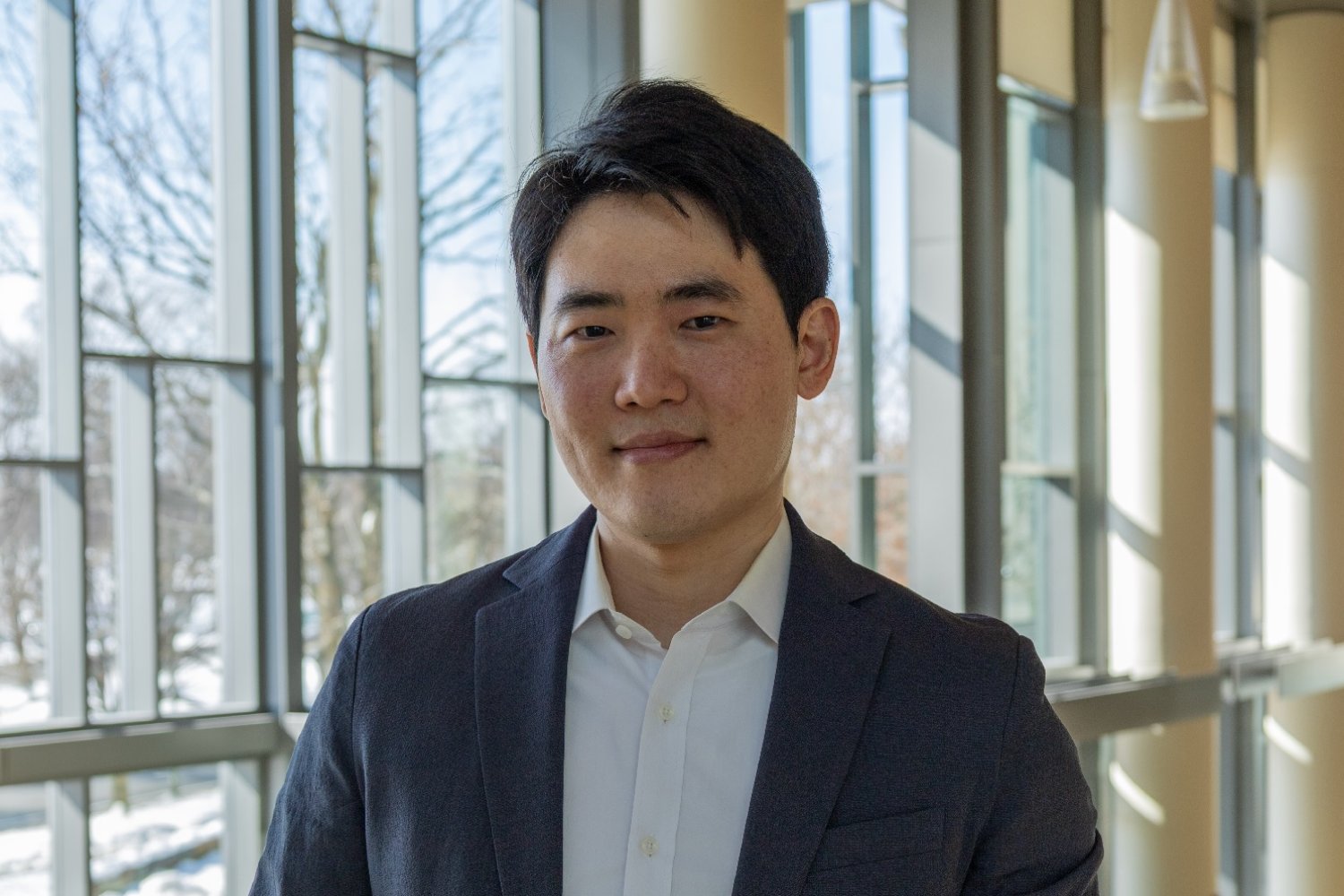

“Now we use AI as an oracle, but we can use AI as a coach. We can use AI as a true driver of truth. That will not only increase our ability to retrieve information but increase our agency to be able to connect the dots,” said Leo Anthony Celi, a senior research scientist at MIT’s Institute for Medical Engineering and Science, a physician at Beth Israel Deaconess Medical Center, and an associate professor at Harvard Medical School.

Celi and his colleagues have created a framework that they say can guide AI developers in designing systems that exhibit curiosity and humility. This new approach could allow doctors and AI systems to work as partners, the researchers say, and help prevent AI from having too much influence on doctors’ decisions.

Celi is the senior author of the study, which appears today in BMJ Health and Care Informatics. The paper’s lead author is Sebastián Andrés Cajas Ordoñez, a researcher at MIT Critical Data, a global consortium led by the Laboratory for Computational Physiology within the MIT Institute for Medical Engineering and Science.

Inculcating human values

Overconfident AI systems can lead to errors in medical settings, according to the MIT team. Previous studies have found that ICU physicians defer to AI systems they perceive as reliable even when their own intuition contradicts the AI’s recommendation. Doctors and patients alike are more likely to accept incorrect AI recommendations when those recommendations are perceived as authoritative.

Instead of systems that provide overconfident but potentially incorrect advice, healthcare facilities should have access to AI systems that work more collaboratively with clinicians, the researchers say.

“We are trying to include people in these human-AI systems, to enable people to think together and rethink, instead of having different AI agents doing everything. We want people to be more creative by using AI,” said Cajas Ordoñez.

To build such a system, the consortium has developed a framework that includes several computational modules that can be incorporated into existing AI systems. The first of these modules requires an AI model to test its confidence when making diagnostic predictions. Developed by consortium members Janan Arslan and Kurt Benke of the University of Melbourne, the Epistemic Virtue Score serves as a self-awareness check, ensuring that system confidence is appropriately influenced by the uncertainty and complexity of each clinical situation.

With that self-awareness, the model can adjust its response to the situation. If the system finds that its confidence exceeds what the available evidence supports, it can pause and flag the discrepancy, request a specific test or piece of patient history that could resolve the uncertainty, or recommend consultation with a specialist. The goal is for AI not only to provide answers but also to indicate when those answers should be treated with caution.
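The behavior described above can be illustrated with a minimal Python sketch. It is not the consortium's implementation and does not reproduce the Epistemic Virtue Score, whose formula is not given here. The `Prediction` fields, the `evidence_support` estimate, the `margin` threshold, and the function name `humble_response` are all hypothetical, chosen only to show the idea of comparing stated confidence against what the evidence supports.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Prediction:
    """Hypothetical container for one diagnostic prediction."""
    diagnosis: str
    confidence: float          # model's stated confidence, between 0 and 1
    evidence_support: float    # assumed estimate of what the evidence supports, 0 to 1
    missing_inputs: List[str] = field(default_factory=list)  # tests or history not yet available


def humble_response(pred: Prediction, margin: float = 0.15) -> str:
    """Return an answer, or a cautionary message when confidence outruns evidence.

    `margin` is an illustrative threshold, not a clinically validated value.
    """
    gap = pred.confidence - pred.evidence_support
    if gap <= margin:
        # Confidence is roughly in line with the evidence: give the answer.
        return f"Suggested diagnosis: {pred.diagnosis} (confidence {pred.confidence:.2f})"
    if pred.missing_inputs:
        # Confidence exceeds the evidence and specific information is missing.
        needed = ", ".join(pred.missing_inputs)
        return f"Uncertain about {pred.diagnosis}; consider obtaining: {needed}"
    # Nothing specific to request, so suggest a second opinion instead.
    return f"Uncertain about {pred.diagnosis}; consider consulting a specialist"


# Made-up example values, for illustration only.
print(humble_response(Prediction("sepsis", 0.90, 0.50, ["serum lactate"])))
```

In this sketch the system only answers directly when its confidence stays within the margin of the evidence estimate. Otherwise it asks for more information or suggests bringing in a specialist.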

“It’s like having a co-driver who can tell you that you need to get a new set of eyes to better understand this complex patient,” said Celi.

Celi and his colleagues have developed large databases that can be used to train AI systems, including the Medical Information Mart for Intensive Care (MIMIC) database from Beth Israel Deaconess Medical Center. His team is now working to apply the new framework to MIMIC-based AI systems and introduce it to nurses at the Beth Israel Lahey Health system.

The method could also be used in AI systems used to analyze X-ray images or determine the best treatment options for patients in the emergency room, among others, the researchers said.

Toward a more inclusive AI

This research is part of a larger effort by Celi and his colleagues to create AI programs designed for the people who will ultimately be most affected by these tools. Many AI models, such as those based on MIMIC, are trained on publicly available data from the United States, which can introduce a bias toward one particular way of thinking about medical issues, to the exclusion of others.

Bringing in more perspectives is essential to overcoming this potential bias, Celi said, stressing that each member of the global consortium brings a unique perspective to a broader, collective understanding.

Another problem with existing AI systems used for diagnosis is that they are often trained on electronic health records, which were not originally intended for that purpose. As a result, the data lack much of the context that would be useful in making diagnoses and treatment recommendations. Additionally, many patients, such as people living in rural areas, are excluded from those datasets due to lack of access.

At data conferences hosted by MIT Critical Data, teams of data scientists, healthcare professionals, social scientists, patients, and others work together to design new AI systems. Before starting, everyone is asked to consider whether the data they are using captures all the drivers of whatever they are trying to predict, and to ensure that they are not inadvertently building structural inequities into their models.
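One simple way to start questioning a dataset along these lines is to compare how often each patient group appears in it against that group's share of a reference population. The Python sketch below is an illustration under stated assumptions, not a method described by the researchers. The function name `underrepresented_groups`, the `tolerance` threshold, and all numbers are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List


def underrepresented_groups(
    groups: Iterable[str],
    reference_share: Dict[str, float],
    tolerance: float = 0.5,
) -> List[str]:
    """List groups whose share of the dataset falls well below a reference share.

    A group is flagged when its observed share is less than `tolerance` times
    its expected share. The threshold is illustrative only.
    """
    counts = Counter(groups)
    total = sum(counts.values())
    flagged = []
    for group, expected in reference_share.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if observed < tolerance * expected:
            flagged.append(group)
    return flagged


# Made-up data: 95 urban and 5 rural records, checked against an assumed
# reference population that is 80 percent urban and 20 percent rural.
records = ["urban"] * 95 + ["rural"] * 5
print(underrepresented_groups(records, {"urban": 0.8, "rural": 0.2}))  # ['rural']
```

A check like this only covers attributes that were recorded in the first place. Patients who never reach the health system leave no records to count, which is why the workshop discussion also matters.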

“We have them question the dataset. Are they confident in their training data and validation data? Do they think there are patients left out, unintentionally or intentionally, and how will that affect the model itself?” Celi said. “Of course, we will not stop or slow down the growth of AI, not just in healthcare, but in all sectors. But we must be deliberate and considerate in how we do this.”

The study was funded by the Boston-Korea Innovative Research Project through the Korea Health Industry Development Institute.