Luma Labs Introduces Uni-1: An Autoregressive Transformer Model That Reasons About Intent Before Generating Images

In the field of AI-generated media, the industry is shifting from probabilistic pixel synthesis toward models capable of structural reasoning. Luma Labs recently released Uni-1, a foundational image model designed to address the ‘intent gap’. By adding a reasoning phase before generation, Uni-1 shifts the workflow from ‘prompt engineering’ to instruction following.

Architecture: Decoder-Only Autoregressive Transformers

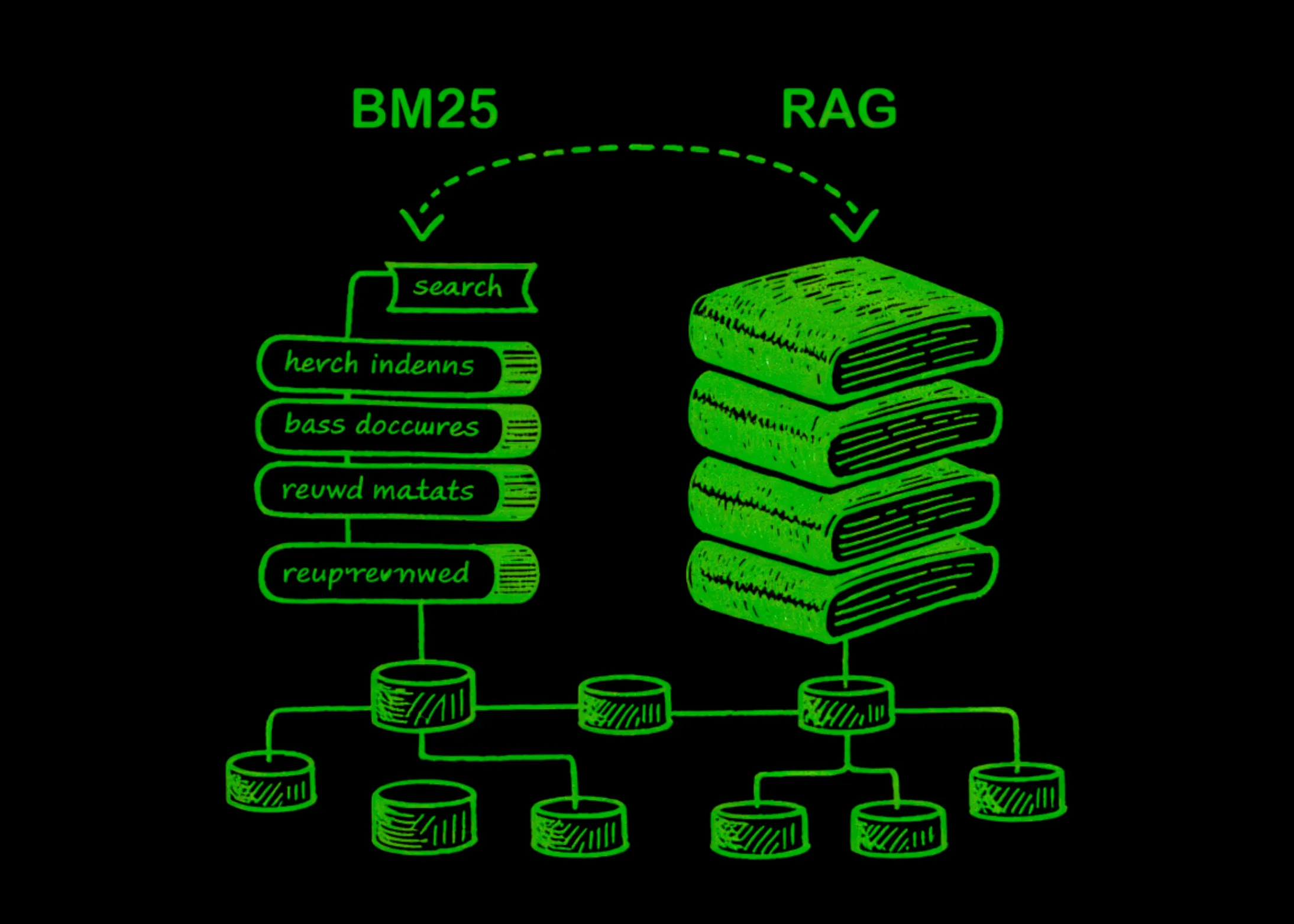

While popular models such as Stable Diffusion or Flux rely on denoising diffusion probabilistic models (DDPMs), Uni-1 uses a decoder-only autoregressive transformer architecture. This change is technically significant because it allows the model to treat text and images as a single interleaved sequence of tokens.

In this architecture, images are quantized into discrete visual tokens. The model predicts the next token in the sequence, regardless of whether that token is a word or a visual element. This creates a feedback loop in which the model can reason about the prompt by predicting the spatial layout and logic of the scene before producing the final high-resolution output.
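The idea of a single token stream can be illustrated with a toy sketch. This is not Luma's implementation: the vocabulary sizes, the `BOI`/`EOI` marker tokens, and the `toy_next_token` stub (which stands in for a real transformer forward pass) are all assumptions made for illustration. It only shows the shape of the loop, in which text "plan" tokens and image tokens are emitted one at a time into the same sequence.

```python
import random
from typing import List

# Hypothetical vocabulary layout (assumed, not from Luma):
# text tokens occupy [0, TEXT_VOCAB), image tokens follow them.
TEXT_VOCAB = 1000
IMAGE_VOCAB = 4096
BOI = TEXT_VOCAB + IMAGE_VOCAB  # assumed "begin of image" marker
EOI = BOI + 1                   # assumed "end of image" marker


def is_image_token(tok: int) -> bool:
    """True if the token id falls in the image-token range."""
    return TEXT_VOCAB <= tok < TEXT_VOCAB + IMAGE_VOCAB


def toy_next_token(seq: List[int], prompt_len: int, plan_len: int,
                   image_len: int, rng: random.Random) -> int:
    """Stub for a model forward pass: picks the next token from position alone.

    A real model would compute a distribution over the whole vocabulary
    conditioned on every token in `seq`.
    """
    generated = len(seq) - prompt_len
    if generated < plan_len:
        return rng.randrange(TEXT_VOCAB)                # text "plan" token
    if generated == plan_len:
        return BOI                                      # open the image span
    if generated <= plan_len + image_len:
        return TEXT_VOCAB + rng.randrange(IMAGE_VOCAB)  # image token
    return EOI                                          # close the image span


def generate(prompt: List[int], plan_len: int = 4, image_len: int = 16,
             seed: int = 0) -> List[int]:
    """Autoregressive loop: append one token at a time until EOI is emitted."""
    rng = random.Random(seed)
    seq = list(prompt)
    while not seq or seq[-1] != EOI:
        seq.append(toy_next_token(seq, len(prompt), plan_len, image_len, rng))
    return seq


if __name__ == "__main__":
    tokens = generate(prompt=[1, 2, 3])
    kinds = ["img" if is_image_token(t) else "txt/ctl" for t in tokens]
    print(list(zip(tokens, kinds)))
```

The point of the sketch is that the same loop handles both modalities: the text tokens emitted before `BOI` play the role of the reasoning phase, and the image tokens that follow are conditioned on them because they sit earlier in the same sequence.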

Key Technical Features:

- Unified Intelligence: The model performs both understanding and generation within the same forward pass.

- Interleaved Tokens: By processing textual and visual data in a single stream, the model maintains a high level of contextual awareness of spatial relationships.

- Spatial Logic: Unlike diffusion models, which can struggle with relations such as ‘left/right’ or ‘behind/under’ due to latent space limitations, Uni-1 plans compositional geometry as part of its sequence prediction.

Benchmarking Reasoning: RISEBench and ODinW-13

To validate the ‘reasoning before generation’ approach, Luma Labs tested Uni-1 against industry benchmarks that prioritize logic over aesthetics. The results show that Uni-1 currently leads human preference rankings against Flux Max and Gemini.

Data scientists should note Uni-1’s performance in two specific benchmarks:

| Benchmark | Focus | Uni-1 performance |
| --- | --- | --- |
| RISEBench | Reasoning-Informed Visual Editing | High accuracy in spatial reasoning and handling logical constraints. |
| ODinW-13 | Object Detection in the Wild | Outperforms the understanding-only variant, suggesting that generation improves visual understanding. |

The performance on ODinW-13 is especially notable for AI researchers. It suggests that a model trained to generate pixels autoregressively develops a more robust internal representation for object detection and classification than models trained only for visual understanding tasks.

Using Uni-1: Plain English and API Access

The user experience (UX) of Uni-1 is designed to minimize the need for prompt engineering. Because the model reasons about intent, it accepts plain English instructions.

- Current Availability: Access is live at lumalabs.ai/uni-1.

- Cost Basis: Approximately $0.10 per image. This reflects the higher computational overhead of an autoregressive, reasoning-first model compared to lightweight diffusion models.

- API Roadmap: Luma confirmed that API access is coming. This will allow developers to integrate Uni-1’s spatial reasoning into automated creative pipelines, such as dynamic UI generation or game asset development.
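For pipeline planning, the per-image price translates into a simple budget calculation. The sketch below assumes a flat rate of $0.10 per image, taken from the approximate figure above; the function names are illustrative, and actual pricing may differ or vary by resolution or usage tier.

```python
from decimal import Decimal

# Approximate per-image price quoted in the article (assumed flat rate).
PRICE_PER_IMAGE_USD = Decimal("0.10")


def estimate_cost(num_images: int,
                  price: Decimal = PRICE_PER_IMAGE_USD) -> Decimal:
    """Total cost in USD for generating `num_images` images."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    return price * num_images


def images_within_budget(budget_usd: Decimal,
                         price: Decimal = PRICE_PER_IMAGE_USD) -> int:
    """Largest whole number of images that fits within the budget."""
    if budget_usd < 0:
        raise ValueError("budget_usd must be non-negative")
    return int(budget_usd // price)


if __name__ == "__main__":
    print(estimate_cost(1200))                  # cost of a 1,200-image batch
    print(images_within_budget(Decimal("25")))  # images a $25 budget covers
```

`Decimal` is used instead of floats so that currency arithmetic does not accumulate binary rounding error.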

Key Takeaways

- Architectural Shift: Uni-1 moves from standard diffusion pipelines to a decoder-only autoregressive transformer that treats text and pixels as a single interleaved sequence of tokens, unifying understanding and generation.

- Reasoning-First Synthesis: The model performs structured internal reasoning and spatial logic before rendering, allowing it to execute complex layouts from plain English instructions without prompt engineering.

- SOTA Benchmarks: It leads human preference rankings against competitors like Flux Max and sets new performance standards on RISEBench (Reasoning-Informed Visual Editing) and ODinW-13 (Object Detection in the Wild).

- Production Consistency: Designed for high-reliability workflows, the model excels at preserving identity across character sheets and at converting rough sketches into polished art with structural precision.

- Developer Access: Currently available to web users, with an API release to follow, Uni-1 is priced at approximately $0.10 per image, positioning it as a premium engine for high-precision creative applications.


Michal Sutter is a data science expert with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data sets into actionable insights.