New Meta AI Hyperagents Don’t Just Solve Tasks—They Rewrite the Rules of How They Learn

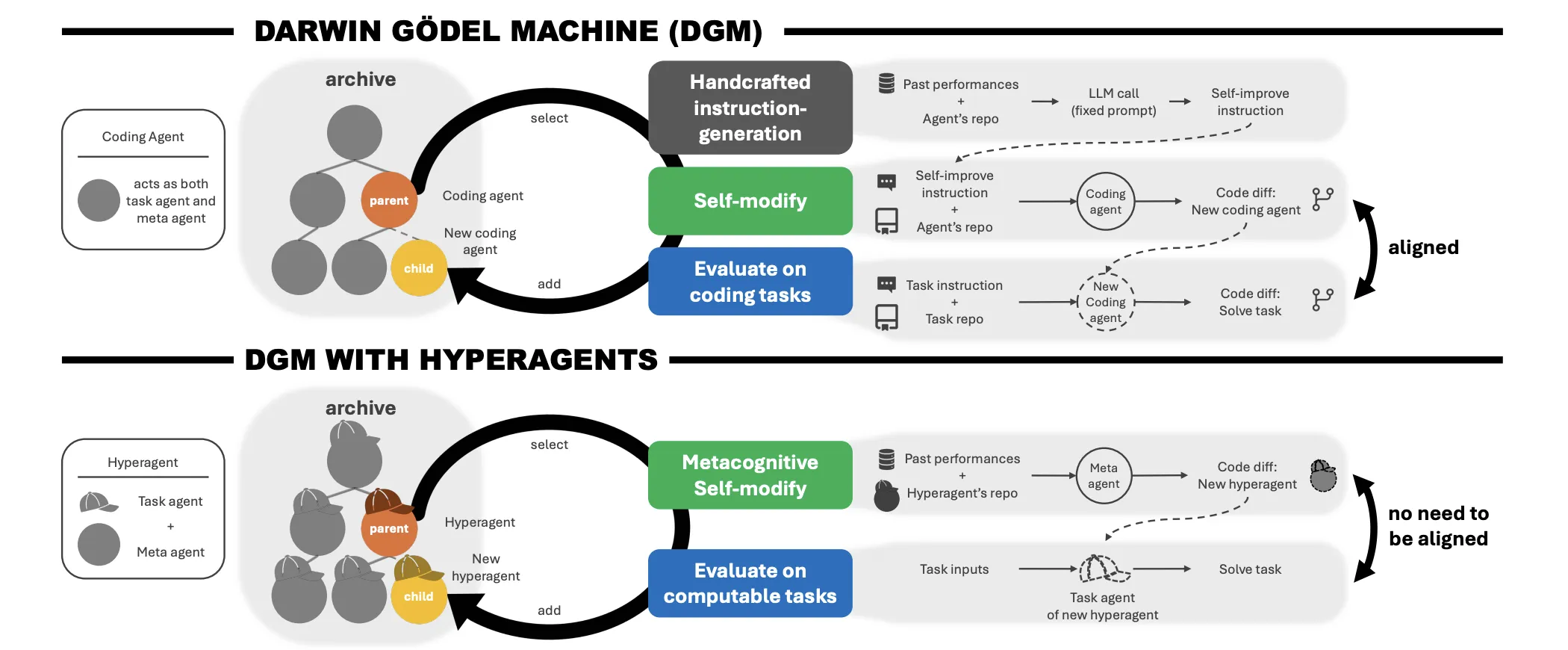

The dream of recursive self-improvement in AI, where a system doesn’t just get better at a task but gets better at learning, has long been the ‘holy grail’ of the field. While theoretical models such as the Gödel Machine have been around for decades, they have remained largely impractical in real-world settings. That changed with the Darwin Gödel Machine (DGM), which showed that open-ended self-improvement was achievable in coding.

However, DGM faced a significant obstacle: it relied on a fixed, handcrafted meta-level mechanism to generate improvement instructions. This limited the growth of the system to the boundaries of its human-designed meta agent. Researchers from the University of British Columbia, Vector Institute, University of Edinburgh, New York University, Canada CIFAR AI Chair, FAIR at Meta, and Meta Superintelligence Labs have introduced Hyperagents. This framework makes the meta-level modification procedure itself editable, removing the assumption that task performance and self-modification skills must be aligned within one domain.

Problem: The Infinite Regress of Meta-Levels

The problem with existing self-improving systems is often ‘infinite regress’. If you have a task agent (the part that solves the problem) and a meta agent (the part that improves the task agent), who improves the meta agent? Adding a ‘meta-meta’ layer simply shifts the problem one level up.

In addition, earlier systems relied on alignment between the task and the improvement process. In coding, getting better at the task often translates into getting better at self-modification. But in non-coding domains, like poetry or robotics, improving the ability to solve tasks does not necessarily improve the ability to analyze and modify source code.

Hyperagents: One Programmable System

The DGM-Hyperagent (DGM-H) framework addresses this by combining the task agent and the meta agent into a single, self-referential, and fully modifiable program. In this architecture, an agent is defined as any computable program that can include foundation model (FM) calls and external tools.

Because the meta agent is part of the same editable codebase as the task agent, it can rewrite its own modification procedures. The research team calls this metacognitive self-modification. A hyperagent doesn’t just search for a better solution; it improves the mechanism responsible for generating future improvements.
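As a rough illustration of what it means for the meta agent to live in the same editable program as the task agent, here is a toy sketch. Everything in it (`Hyperagent`, `make_solver`, `make_improver`, the integer "task") is a hypothetical stand-in, not the paper's code, and no foundation model is called. The point is only that one improvement step returns a new agent whose `solve` and `improve` parts have both changed.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical types for illustration only; not the paper's interfaces.
Solve = Callable[[int], int]
Improve = Callable[["Hyperagent"], "Hyperagent"]


@dataclass(frozen=True)
class Hyperagent:
    solve: Solve      # task agent: attempts the task
    improve: Improve  # meta agent: returns a modified hyperagent


def make_solver(offset: int) -> Solve:
    # Toy task agent: adds a fixed offset to its input.
    return lambda x: x + offset


def make_improver(step: int) -> Improve:
    def improve(agent: Hyperagent) -> Hyperagent:
        # Task-level edit: shift the solver's offset by the current step.
        new_offset = agent.solve(0) + step
        return Hyperagent(
            solve=make_solver(new_offset),
            # Meta-level edit: the child gets a *different* improver,
            # so the improvement procedure itself has been rewritten.
            improve=make_improver(step * 2),
        )
    return improve


agent = Hyperagent(solve=make_solver(0), improve=make_improver(1))
child = agent.improve(agent)
grandchild = child.improve(child)
print(child.solve(0), grandchild.solve(0))
```

With a fixed improver of step 1, two steps would move the offset from 0 to 2. Here the second step uses the rewritten improver, so the offset reaches 3 instead. A real system would replace these toy edits with FM-proposed code changes and keep only the variants that score well.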

Comparison of Self-Improvement Architectures

| Component | Darwin Gödel Machine (DGM) | DGM with Hyperagents (DGM-H) |
| --- | --- | --- |
| Meta-level Mechanism | Fixed and handcrafted | Fully editable and modifiable |
| Domain Alignment | Required (primarily coding) | Not required (any computable task) |
| Modification Type | Task-level only | Metacognitive (task + meta) |

Results: Beyond Local Optima in Robotics and Review

The research team tested DGM-H across diverse domains: coding, paper review, robotics reward design, and Olympiad-level math grading.

In robotics reward design, the hyperagent was tasked with designing Python reward functions to train a quadruped robot in the Genesis simulator. During the training phase, agents had to design rewards for walking forward. For held-out testing, the agents had to generate reward functions zero-shot for a different task: maximizing the height of the robot’s torso.

DGM-H significantly improved performance, rising from an initial score of 0.060 to 0.372 (CI: 0.355–0.436). It discovered non-myopic reward functions that induced jumping behavior, a better strategy for height than the local optimum of simply standing tall.
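To make the standing-versus-jumping contrast concrete, here is a toy sketch of a height-based reward. It does not use the Genesis API, and it is not one of the reward functions the hyperagents produced: the function names, the `upright` term, and all trajectory numbers are made up for illustration.

```python
from typing import Sequence


def height_reward(torso_height: float, upright: float) -> float:
    """Hypothetical per-step reward: torso height, scaled by how upright
    the body is (1.0 = fully upright, values below 0 are clipped)."""
    return torso_height * max(upright, 0.0)


def episode_return(heights: Sequence[float], uprights: Sequence[float]) -> float:
    """Sum of per-step rewards over one episode."""
    return sum(height_reward(h, u) for h, u in zip(heights, uprights))


# Made-up trajectories (metres): standing still vs. crouch-then-jump.
standing = [0.4, 0.4, 0.4, 0.4, 0.4]
jumping = [0.4, 0.3, 0.6, 0.8, 0.5]
upright = [1.0] * 5

print(episode_return(standing, upright))
print(episode_return(jumping, upright))
```

Under this toy reward the jump trajectory earns more over the episode even though it dips below standing height first, which is the kind of trade-off a step-by-step greedy policy would avoid.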

In the paper review domain, DGM-H improved test-set performance from 0.0 to 0.710 (CI: 0.590–0.750), exceeding the static baseline. It moved beyond superficial behavioral instructions to create multi-stage evaluation pipelines with explicit checklists and decision rules.
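The source does not list the checklist items or rules the hyperagents actually wrote, so the following is only a sketch of the general shape of a two-stage review pipeline. The item names, the `CRITICAL` set, and the "at least 3 passes" threshold are all assumptions; in a real pipeline each checklist answer would come from an FM call rather than a precomputed dictionary.

```python
from typing import Dict, List

# Hypothetical checklist; not the criteria used in the paper.
CHECKLIST: List[str] = [
    "clear_claims",
    "sound_method",
    "adequate_experiments",
    "related_work",
]
CRITICAL: List[str] = ["sound_method"]


def stage_checklist(findings: Dict[str, bool]) -> Dict[str, bool]:
    """Stage 1: answer every checklist item; missing items count as failed."""
    return {item: bool(findings.get(item, False)) for item in CHECKLIST}


def stage_decision(checklist: Dict[str, bool]) -> str:
    """Stage 2: explicit decision rules applied to the filled checklist."""
    # Rule 1: any failed critical item forces a rejection.
    if any(not checklist[item] for item in CRITICAL):
        return "reject"
    # Rule 2: otherwise accept when at least 3 items pass (arbitrary cutoff).
    passed = sum(checklist.values())
    return "accept" if passed >= 3 else "reject"


def review(findings: Dict[str, bool]) -> str:
    return stage_decision(stage_checklist(findings))
```

Splitting the review into a checklist stage and a rule stage makes each decision traceable to specific items, which a single free-form instruction does not.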

Transferring the ‘Ability to Improve’

An important finding for AI researchers is that these meta-level improvements are general and transferable. To quantify this, the research team introduced the improvement@k (imp@k) metric, which measures the performance gain achieved by a fixed meta agent over k modification steps.

Hyperagents optimized on the paper review and robotics tasks were transferred to the Olympiad-level math grading domain. While the meta agents from human-customized DGM runs failed to produce improvements in this new setting (imp@50 = 0.0), the transferred DGM-H hyperagents achieved an imp@50 of 0.630. This indicates that the system autonomously acquired transferable self-improvement strategies.
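The article describes imp@k only in words, so the sketch below is one plausible reading rather than the paper's exact definition: take the best score seen within the first k modification steps and subtract the starting score. The function name `imp_at_k` and the choice of "best so far" over "final score" are assumptions.

```python
from typing import Sequence


def imp_at_k(initial_score: float, step_scores: Sequence[float], k: int) -> float:
    """Gain over the initial agent within the first k modification steps.

    step_scores[i] is the task score of the agent produced at step i + 1
    by a fixed meta agent. Using the best score in the window (rather
    than the last one) is an assumption of this sketch.
    """
    window = list(step_scores[:k])
    best = max([initial_score, *window])
    return best - initial_score


# A meta agent that never helps yields 0.0, matching the idea behind
# the imp@50 = 0.0 result reported for the transferred DGM meta agents.
print(imp_at_k(0.0, [0.0] * 50, k=50))
```

Because the metric is anchored to the initial agent, it isolates what the meta agent contributes in the new domain, independent of how strong the starting task agent was.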

Emergent Infrastructure: Tracking and Memory

Without explicit instructions, hyperagents developed sophisticated engineering tools to support their own growth:

- Performance Tracking: They introduced classes to log metrics across generations, identifying which changes led to sustained gains versus regressions.

- Persistent Memory: They implemented timestamped storage of synthesized insights and causal hypotheses, allowing later generations to build on earlier findings.

- Compute-Aware Planning: They developed logic to adjust modification strategies based on the remaining experiment budget, prioritizing fundamental architectural changes early and conservative refinements later.
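The three tools in this list can be pictured with a small sketch. The class names, the JSON Lines file format, and the 0.5 budget cutoff are all hypothetical choices made for illustration; the code the hyperagents actually generated is not shown in the source.

```python
import json
import time
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class PerformanceTracker:
    """Logs one score per generation (hypothetical helper)."""
    history: List[Tuple[int, float]] = field(default_factory=list)

    def log(self, generation: int, score: float) -> None:
        self.history.append((generation, score))

    def regressions(self) -> List[int]:
        # Generations whose score dropped relative to the previous entry.
        pairs = zip(self.history, self.history[1:])
        return [gen for (_, prev), (gen, cur) in pairs if cur < prev]


class PersistentMemory:
    """Appends timestamped notes to a JSON Lines file (assumed format)."""

    def __init__(self, path: str) -> None:
        self.path = path

    def remember(self, insight: str, hypothesis: str) -> None:
        entry = {"time": time.time(), "insight": insight, "hypothesis": hypothesis}
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def recall(self) -> List[dict]:
        try:
            with open(self.path, encoding="utf-8") as f:
                return [json.loads(line) for line in f if line.strip()]
        except FileNotFoundError:
            return []  # nothing stored yet


def choose_strategy(steps_used: int, total_steps: int, cutoff: float = 0.5) -> str:
    """Bold edits while budget remains, careful ones near the end.
    The 0.5 cutoff is an arbitrary illustrative value."""
    remaining = 1.0 - steps_used / total_steps
    return "architectural" if remaining > cutoff else "conservative"
```

A later generation could call `recall()` before proposing an edit, check `regressions()` to avoid repeating a change that hurt, and use `choose_strategy` to decide how risky the next edit should be.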

Key Takeaways

- Integration of Task and Meta Agents: Hyperagents end the ‘infinite regress’ of meta-levels by combining the task agent (which solves problems) and the meta agent (which improves the system) into a single, self-referential program.

- Metacognitive Self-Modification: Unlike previous systems with fixed improvement logic, DGM-H can edit its own ‘improvement procedure’, essentially rewriting the rules of how it produces better versions of itself.

- Domain-Agnostic Scaling: By removing the requirement for domain-specific alignment (previously limited mainly to coding), Hyperagents demonstrate self-improvement that works across any computable task, including robotics reward design and academic paper review.

- Transferable ‘Learning’ Skills: Meta-level improvements generalize; a hyperagent that learns to improve robotics rewards can transfer those optimization strategies to accelerate performance in a completely different domain, such as Olympiad-level math grading.

- Emergent Engineering Infrastructure: In their pursuit of better performance, hyperagents autonomously develop advanced engineering tools, such as persistent memory, performance tracking, and compute-aware planning, without explicit human instructions.
