Paged Attention to Major Language Models LLMs

When using LLMs at scale, the real limitation is GPU memory rather than computation, mainly because each application needs a KV cache to store token-level data. In a typical setup, a large fixed memory block is reserved for each request based on the maximum sequence length, resulting in significant unused space and consistency limits. Paged Attention improves this by breaking the KV cache into small, flexible parts that are only served when needed, similar to how physical memory works. It also allows multiple requests with the same initial command to share memory and repeat it only when the output starts to differ. This approach greatly improves memory efficiency, allowing very high throughput with very little overhead.

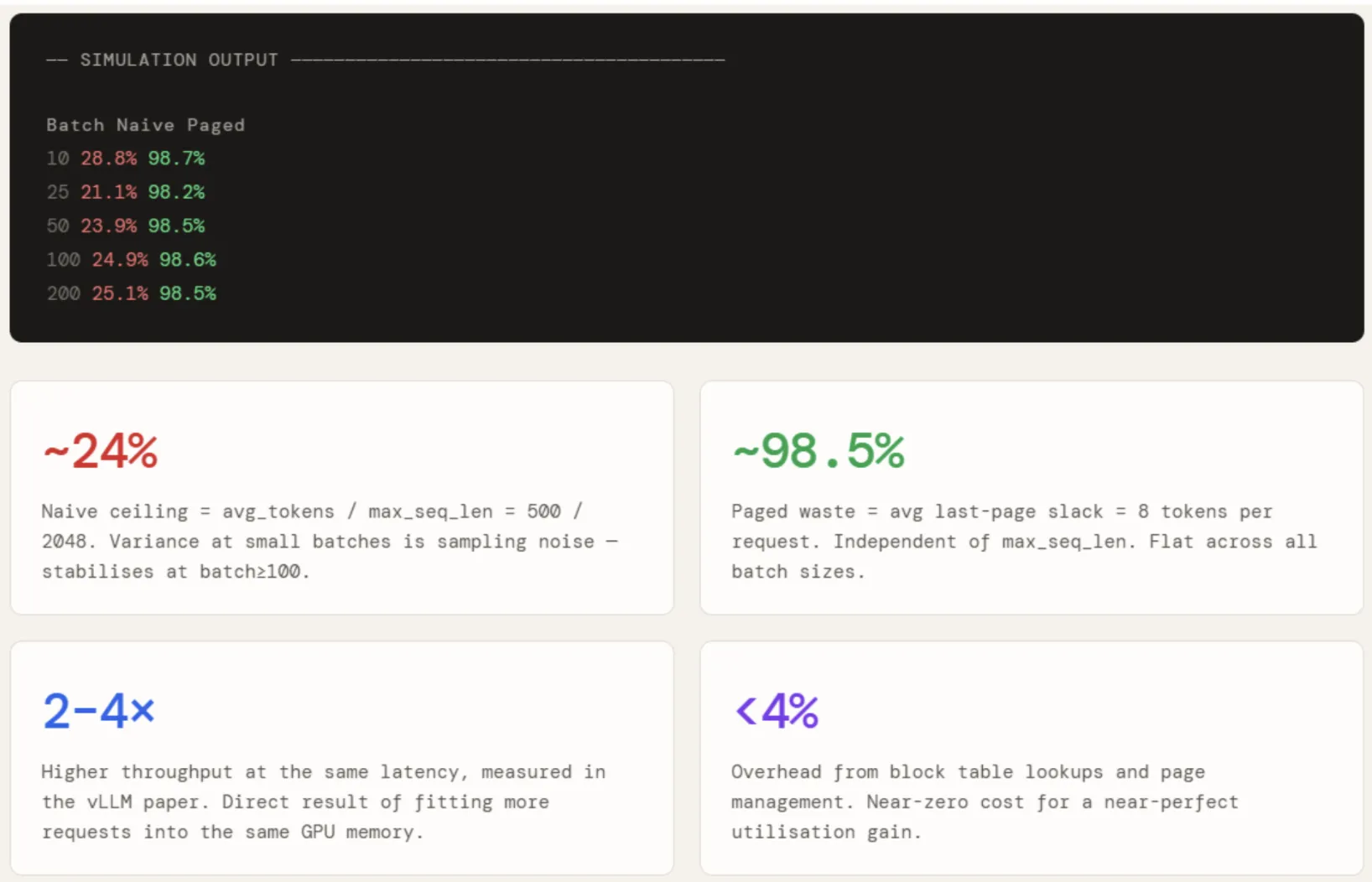

In this article, we simulate a naive KV cache, build an efficient implementation of Paged Attention with a block table and shared Copy-Write prefix, and measure the utilization gap across batch sizes of 10 to 200 concurrent requests.

import math

import random

import numpy as np

import matplotlib.pyplot as plt

import matplotlib.patches as mpatches

from collections import defaultdict

random.seed(42)

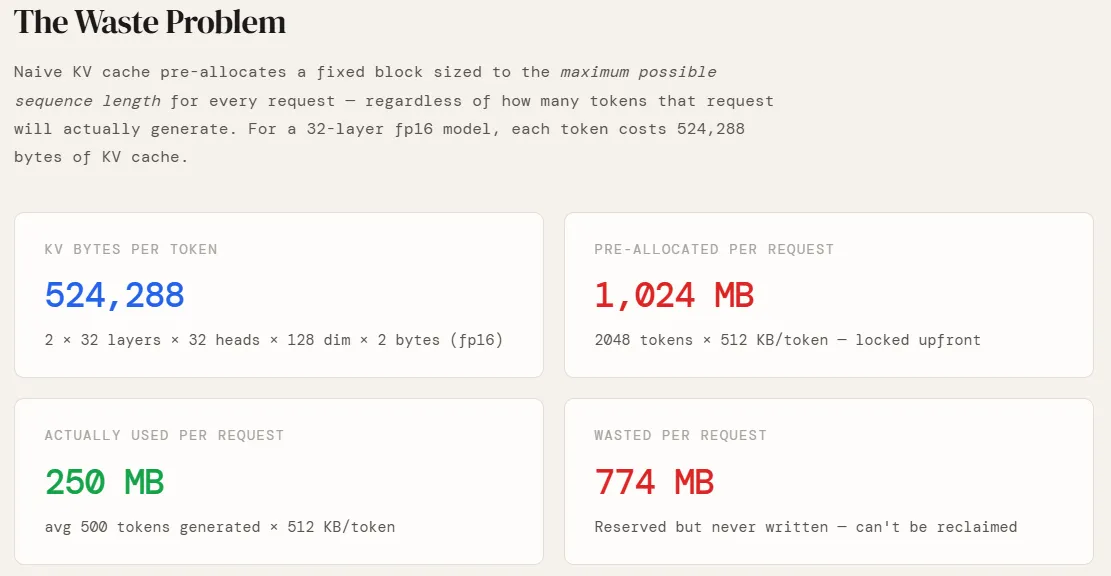

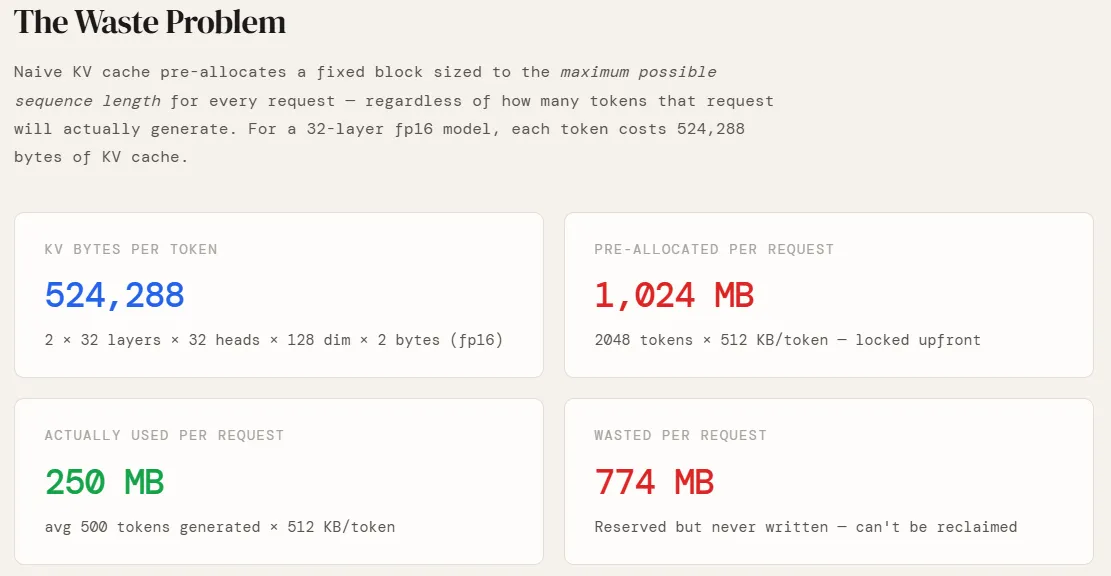

np.random.seed(42)Before simulating anything, we need to know how much GPU memory one token actually costs. This depends entirely on the design of the model. We’re using a GPT-style configuration — 32 layers, 32 focus heads, 128 measurements per head, stored at fp16. 2 factor in front accounts for both key and Value guesses (no Q cache — queries are recalculated at each step). Multiplying this gives us 524,288 bytes, or 512 KB, per token. This is the basic unit on which everything else is built – pre-allocation sizes, page counts, and wasted memory all scale directly to this number.

NUM_LAYERS = 32

NUM_HEADS = 32

HEAD_DIM = 128

BYTES_FP16 = 2

PAGE_SIZE = 16 # tokens per page (vLLM default)

MAX_SEQ_LEN = 2048

KV_BYTES_PER_TOKEN = 2 * NUM_LAYERS * NUM_HEADS * HEAD_DIM * BYTES_FP16

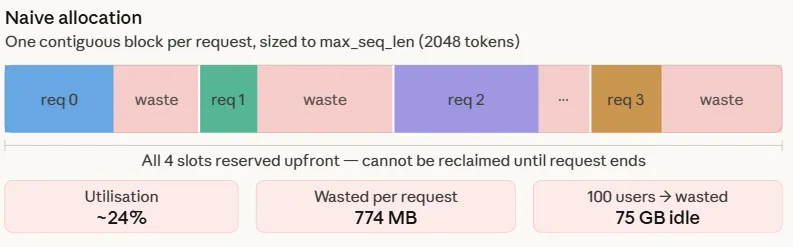

KV_MB_PER_TOKEN = KV_BYTES_PER_TOKEN / 1024 / 1024The foolproof method is simple: when a request arrives, a contiguous block of GPU memory is allocated the size of the maximum sequence length – 2048 tokens in this case. This happens because the length of the response is not known in advance, so the worst case is reserved.

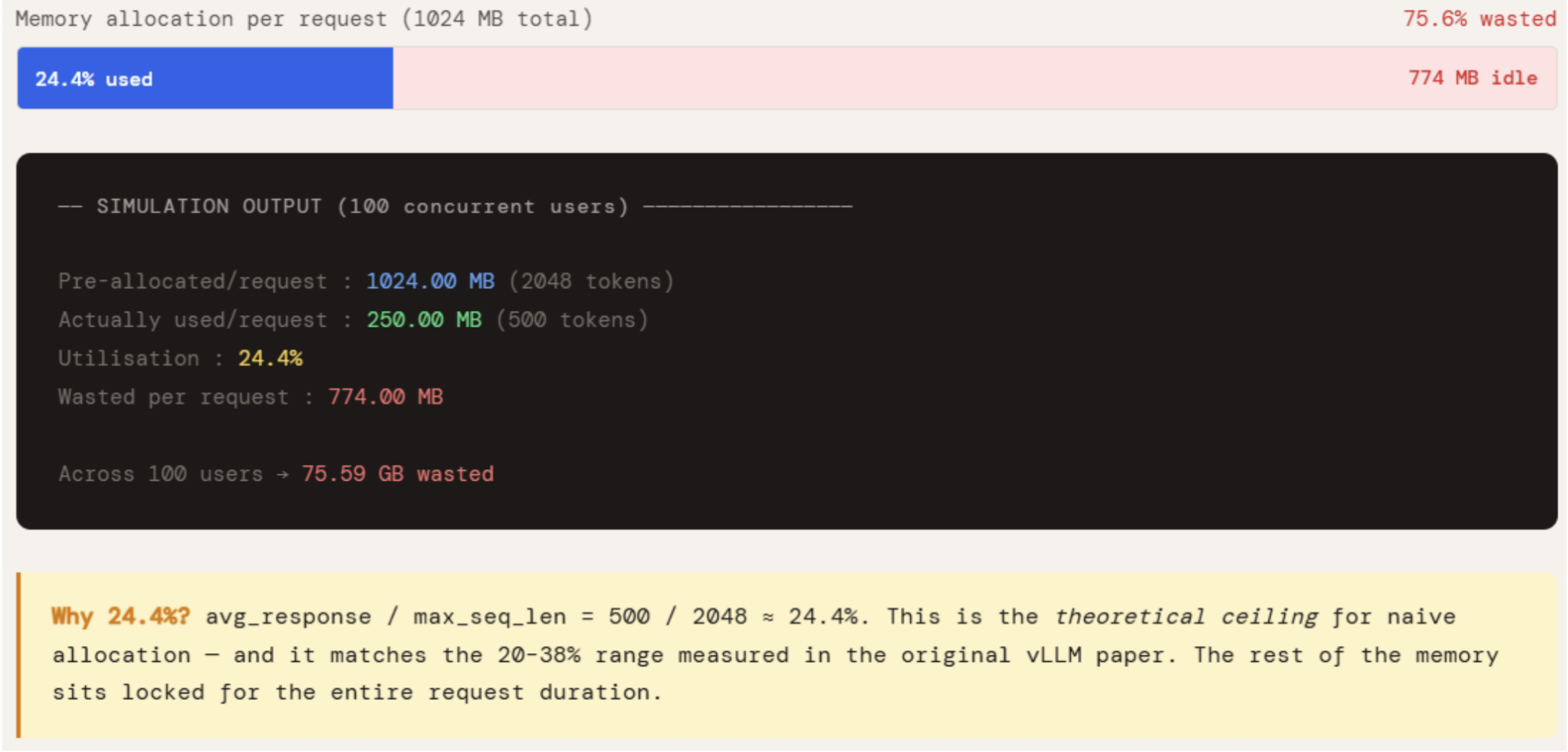

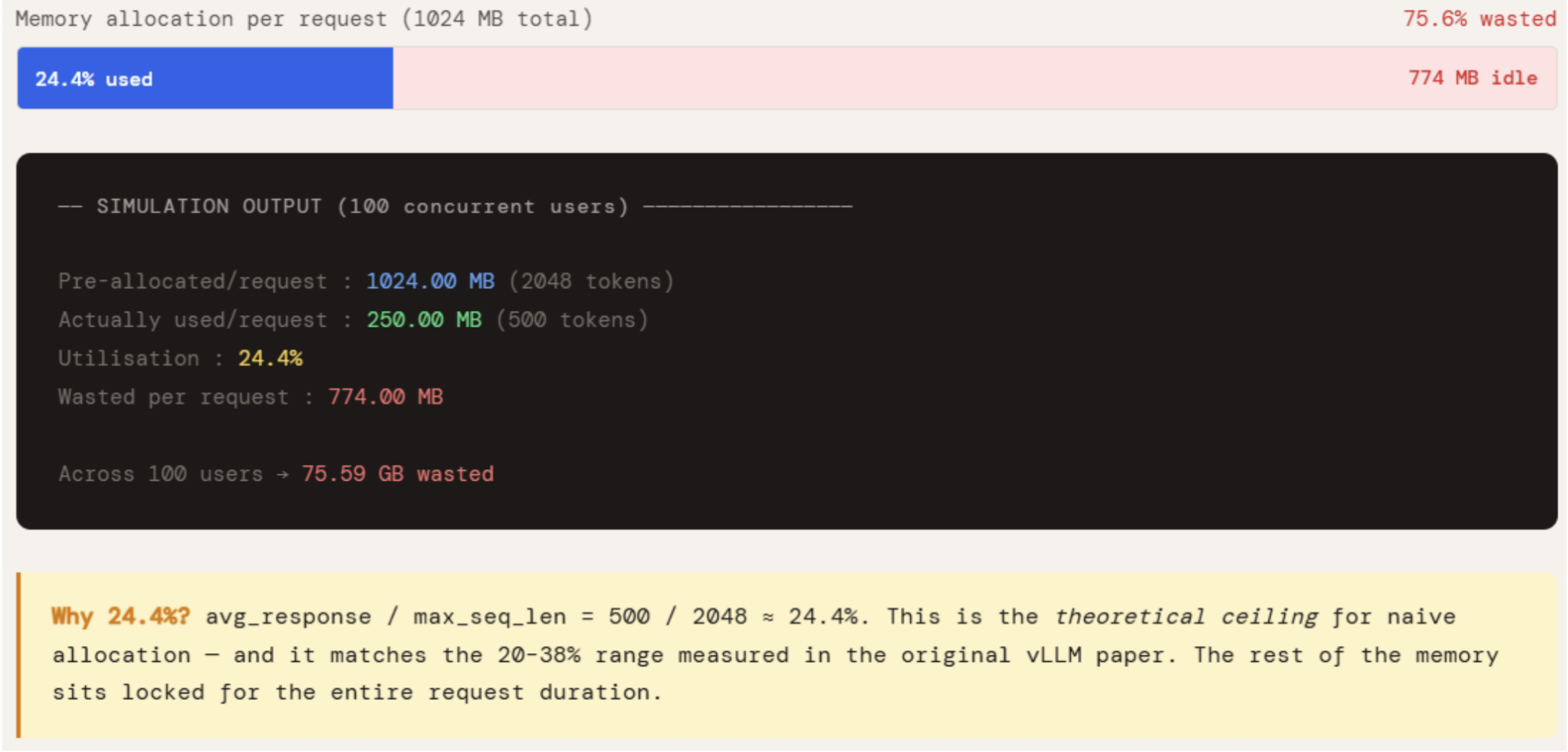

AVG_RESPONSE is set to 500, which is the real rate for a productive chatbot. Iterating over KV_MB_PER_TOKEN gives the write versus what was locked. A gap is a waste.

The numbers make the problem visible. Each request initially allocates 1024 MB but uses only 250 MB — 24.4% utilization. The remaining 774 MB remains reserved for the duration of the request, not available for any other request. For 100 simultaneous users, i.e. 75 GB of GPU memory does nothing. This is not an edge case – it’s the default behavior of all systems that don’t use page allocation, and that’s why non-optimized applications hit the OOM wall long before the GPU is computationally saturated.

print("=" * 60)

print("SECTION 1 -- Naive KV Cache: The Waste Problem")

print("=" * 60)

AVG_RESPONSE = 500 # realistic average tokens generated

pre_allocated_mb = MAX_SEQ_LEN * KV_MB_PER_TOKEN

actually_used_mb = AVG_RESPONSE * KV_MB_PER_TOKEN

print(f"nKV cache per token : {KV_BYTES_PER_TOKEN:,} bytes")

print(f"Pre-allocated/request : {pre_allocated_mb:.2f} MB ({MAX_SEQ_LEN} tokens)")

print(f"Actually used/request : {actually_used_mb:.2f} MB ({AVG_RESPONSE} tokens)")

print(f"Utilisation : {actually_used_mb / pre_allocated_mb * 100:.1f}%")

print(f"Wasted per request : {pre_allocated_mb - actually_used_mb:.2f} MB")

NUM_USERS = 100

wasted_gb = (pre_allocated_mb - actually_used_mb) * NUM_USERS / 1024

print(f"nAcross {NUM_USERS} concurrent users → {wasted_gb:.2f} GB wasted")

print("n→ Naive systems utilise only 20-38% of allocated KV cache memory")

print(" (source: original Paged Attention / vLLM paper)")

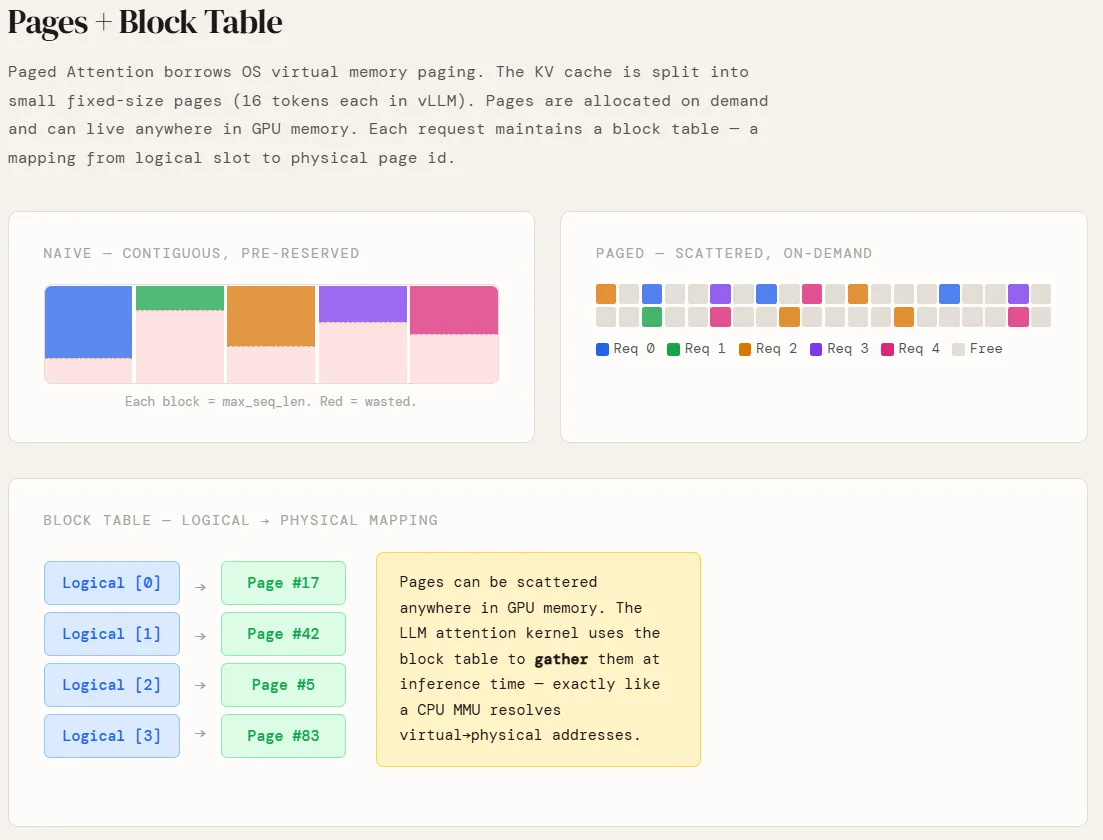

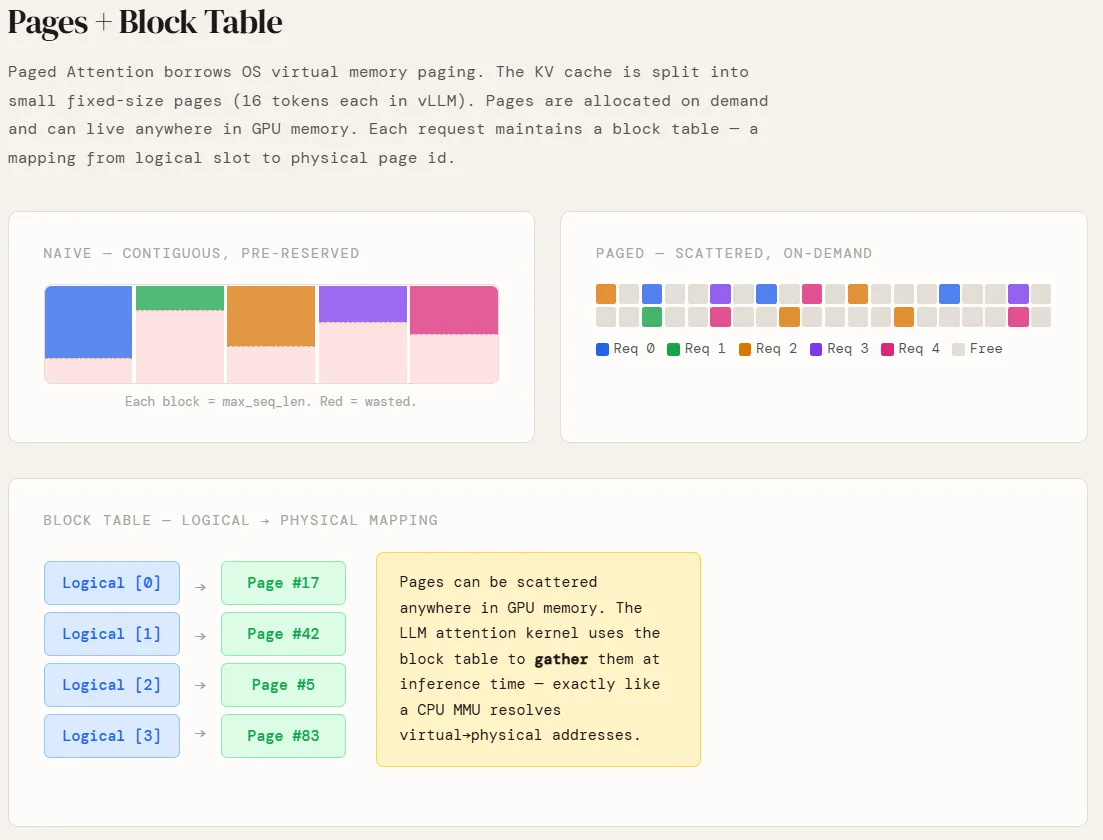

Two classes are introduced here to simulate how Paged Attention actually works at the memory management level.

A PagePool represents the actual GPU memory – a flat array of equal-sized pages, each holding 16 tokens. It maintains a free list and referral rate for each page. If the page reference value drops to zero, it is immediately returned to the free list and available for any new request. This is the main difference from random allocation — there are no slots reserved, no fragmentation, and no memory tied to the completed request.

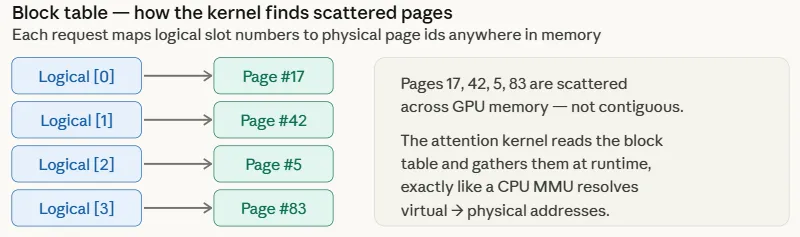

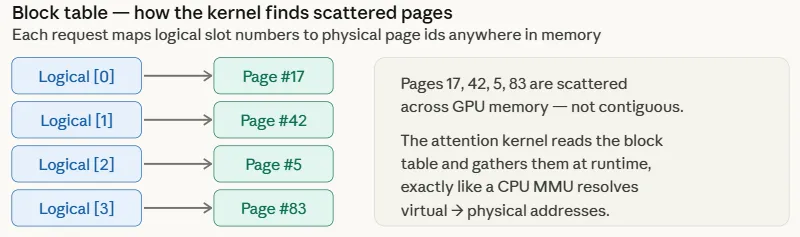

A PagedRequest represents a single request for consideration. It holds a block_table — an array that lists logical page pointers to physical page ids in the pool. Every time generator_token() is called and the number of tokens crosses the page boundary, a new virtual page is fetched from the pool. No memory is touched before it is needed.

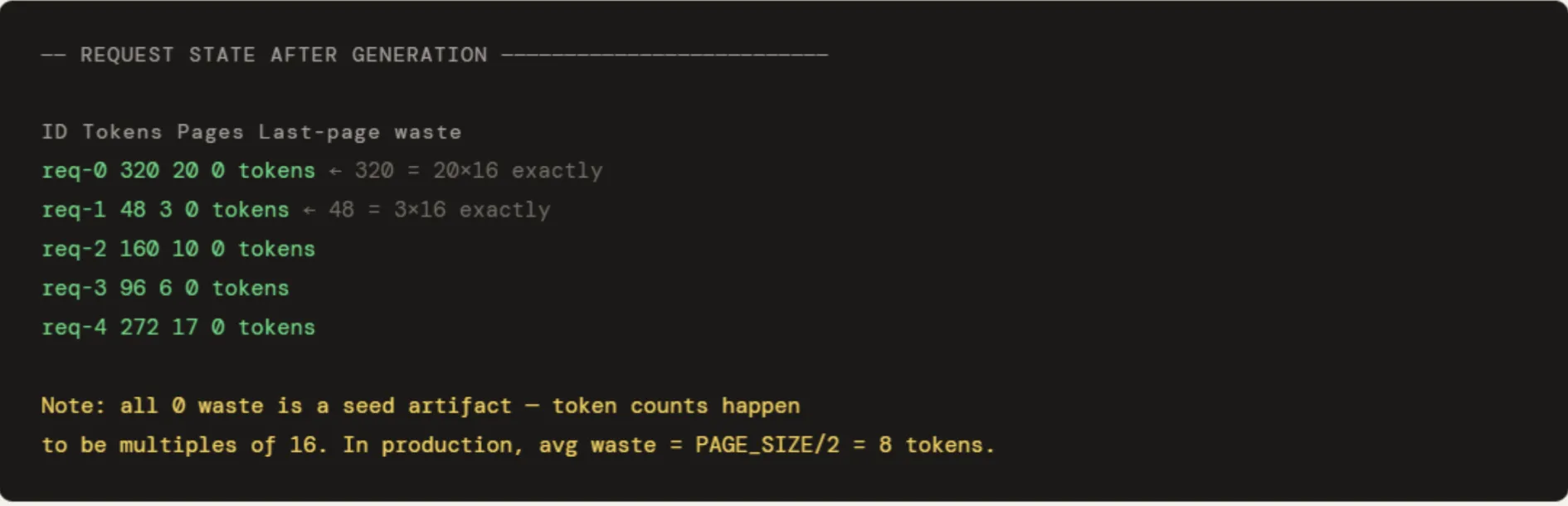

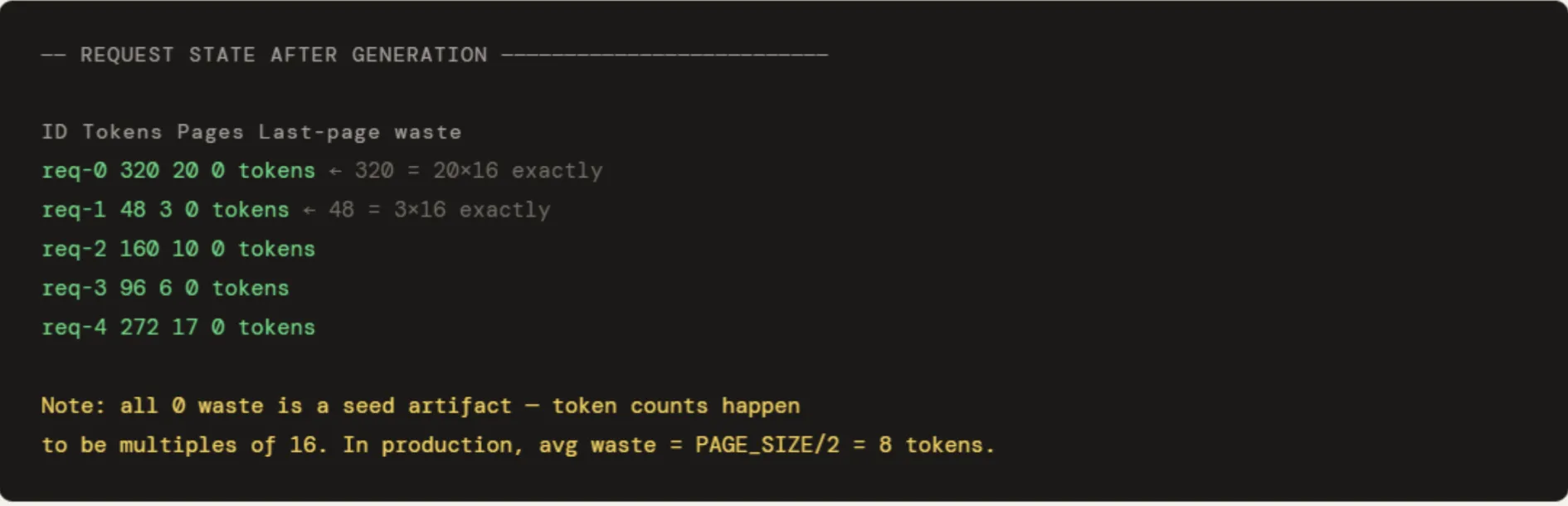

Five applications are run with a count of 320, 48, 160, 96, and 272 tokens. The output shows pages allocated in proportion to actual usage – req-1 with 48 tokens gets 3 pages, req-0 with 320 tokens gets 20. The pool utilization at 10.9% looks low only because 512 pages are provided for 5 small requests – in a fully loaded production environment it will stay close to the 98% range seen in section 4. “0 wasted tokens” in the last page column is a seed artifact – all five token counts occur as 16 standard iterations of the last page. 2 = 8 tokens per request.

print("n" + "=" * 60)

print("SECTION 2 -- Paged Attention: Pages + Block Table")

print("=" * 60)

"""

Instead of one large contiguous block per request:

- KV cache is split into fixed-size pages (PAGE_SIZE tokens each)

- Pages are allocated on demand, can live anywhere in GPU memory

- Each request keeps a block_table: logical index → physical page id

"""

class PagePool:

def __init__(self, total_pages):

self.free = list(range(total_pages))

self.total = total_pages

self.ref_count = defaultdict(int)

def allocate(self):

if not self.free:

raise MemoryError("OOM -- no free pages")

pid = self.free.pop(0)

self.ref_count[pid] = 1

return pid

def release(self, pid):

self.ref_count[pid] -= 1

if self.ref_count[pid] <= 0:

self.free.append(pid)

del self.ref_count[pid]

def share(self, pid):

"""Increment ref count -- another request is sharing this page."""

self.ref_count[pid] += 1

def cow_copy(self, pid):

"""CoW: allocate a new page, decrement ref on the old one."""

new_pid = self.allocate()

self.release(pid)

return new_pid

@property

def utilisation(self):

return (self.total - len(self.free)) / self.total * 100

class PagedRequest:

def __init__(self, req_id, pool: PagePool):

self.id = req_id

self.pool = pool

self.block_table = [] # logical index → physical page id

self.tokens = 0

def generate_token(self):

if self.tokens % PAGE_SIZE == 0: # page boundary → allocate new page

self.block_table.append(self.pool.allocate())

self.tokens += 1

def free(self):

for pid in self.block_table:

self.pool.release(pid)

self.block_table.clear()

pool = PagePool(total_pages=512)

requests = [PagedRequest(f"req-{i}", pool) for i in range(5)]

token_counts = [320, 48, 160, 96, 272]

for req, n in zip(requests, token_counts):

for _ in range(n):

req.generate_token()

print("nRequest state after generation:")

print(f" {'ID':<10} {'Tokens':>8} {'Pages':>7} {'Last-page waste':>16}")

for req in requests:

waste = req.tokens % PAGE_SIZE

waste = PAGE_SIZE - waste if waste else 0

print(f" {req.id:<10} {req.tokens:>8} {len(req.block_table):>7} {waste:>16} tokens")

print(f"nPool utilisation : {pool.utilisation:.1f}%")

requests[1].free()

print(f"After freeing req-1 → utilisation: {pool.utilisation:.1f}% (pages immediately reusable)")

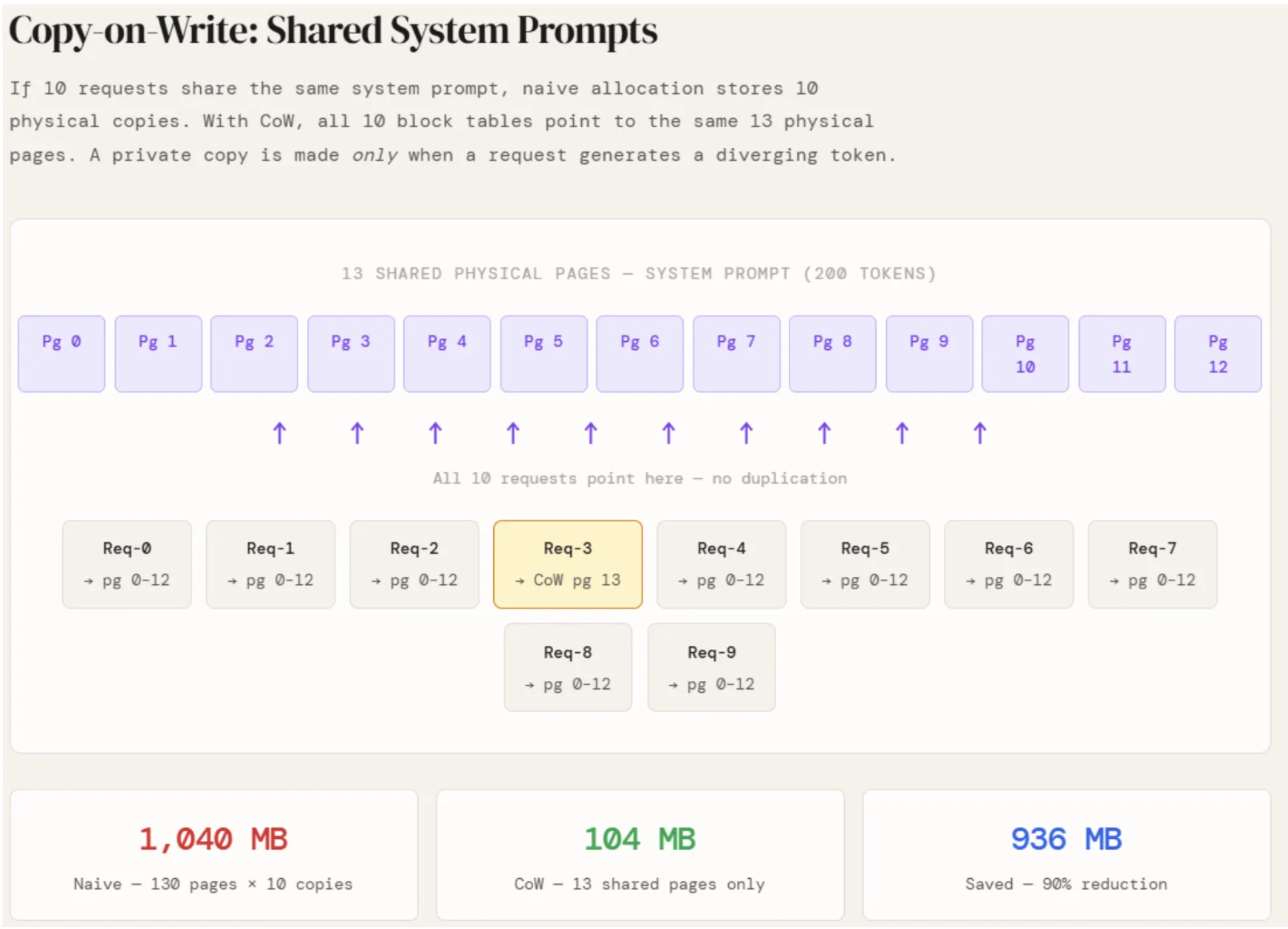

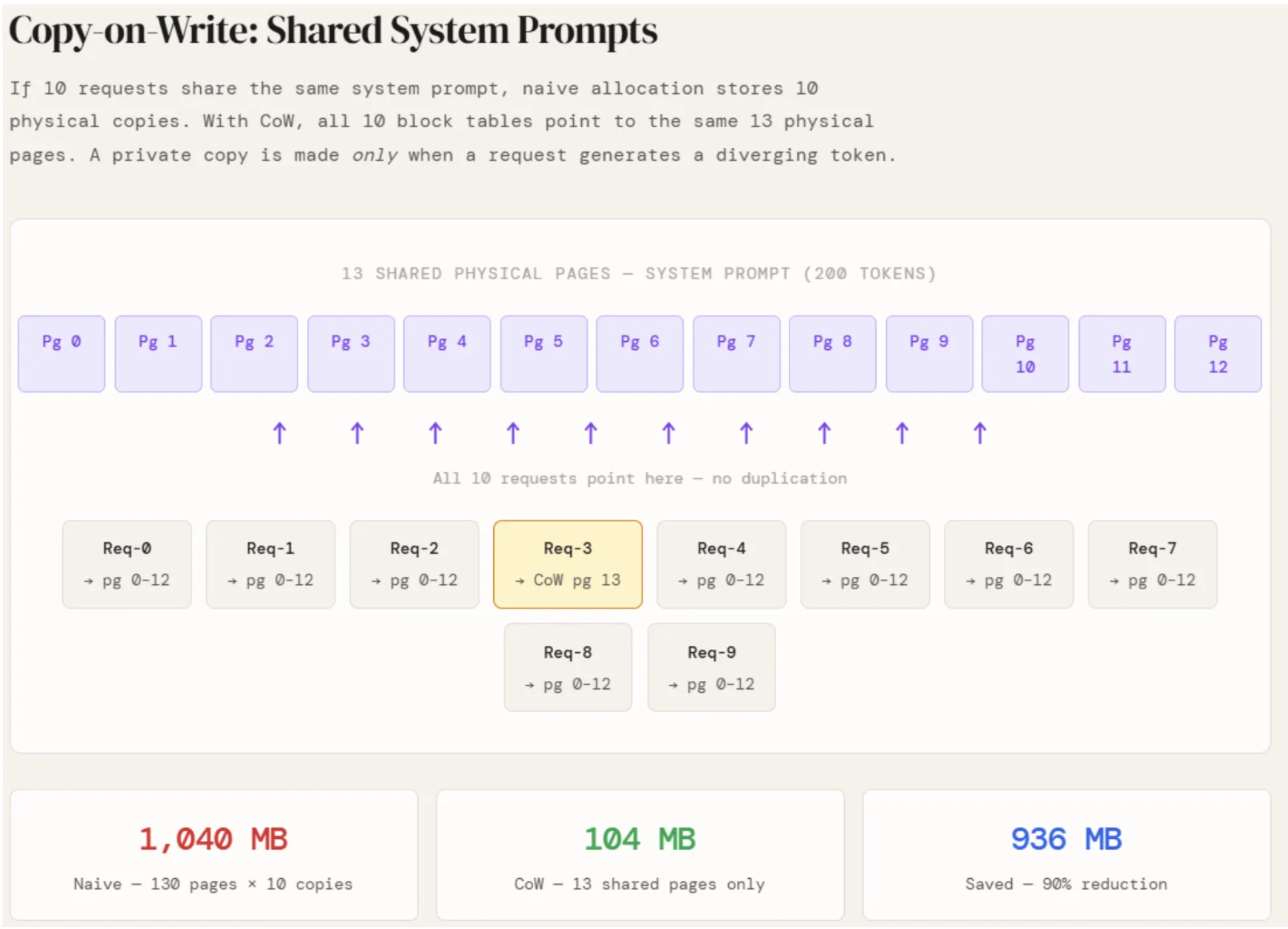

In production, almost every application to LLM used has the same system information – instructions that describe the behavior of the model. Under the empty share, each of those applications keeps its own full KV cache of system information. With 10 concurrent requests and 200 system tokens, that’s 10 identical copies of the same data residing in separate memory regions.

The same PagePool from Section 2 is reused here, expanded in two ways: share() increments the page’s ref count without allocating anything new, and cow_copy() allocates a new page and decrements the ref count of the original one. The new pool is consolidated and the system information is coded in 13 pages — math.ceil(200/16). Each of the 10 user requests then calls share() on all 13 pages, pointing their block tables to the same physical memory. No new pages are provided. The reference count for each shared page just goes up to 11.

Savings are fast: Allocation of nonsense can require 130 pages for every 10 requests. For CoW, only 13 pages are available. That’s 936 MB saved in one shared startup.

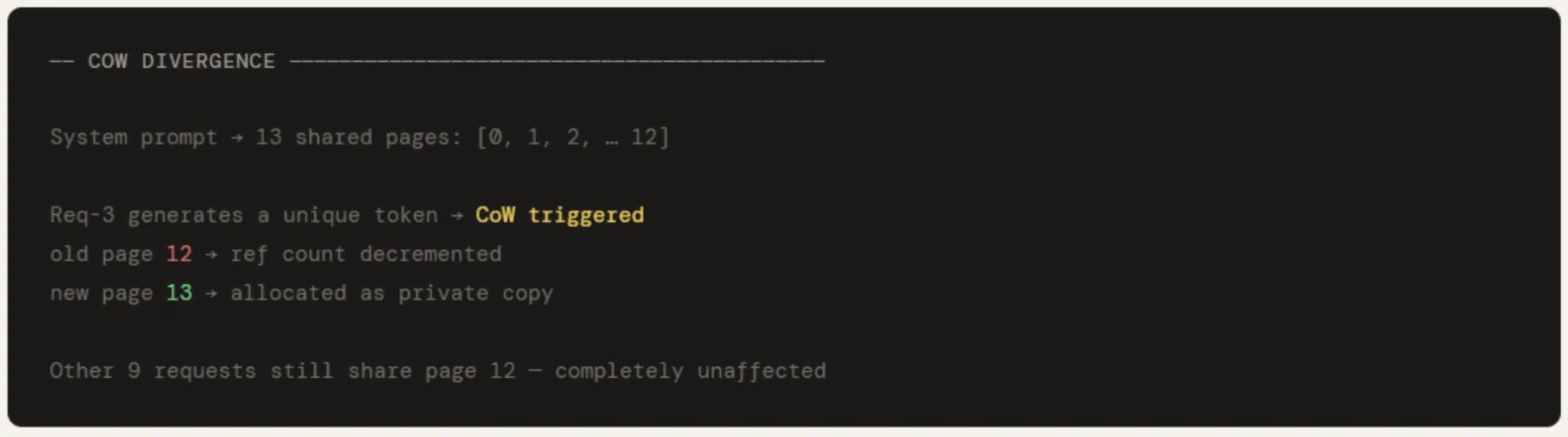

When req-3 generates its first unique token, cow_copy() is called on its last shared page — page 12. The new page 13 is allocated as a private copy of req-3, and the ref count on page 12 is decremented by one. The other 9 requests continue to point to page 12, untouched at all. This is the CoW contract: shared until separation, confidential only when necessary.

print("n" + "=" * 60)

print("SECTION 3 -- Copy-on-Write: Shared System Prompts")

print("=" * 60)

"""

If N requests share a system prompt, naive allocation stores N copies.

With CoW, all requests point to the SAME physical pages.

A private copy is made only when a request writes a diverging token.

"""

cow_pool = PagePool(total_pages=512)

SYSTEM_TOKENS = 200

system_pages = math.ceil(SYSTEM_TOKENS / PAGE_SIZE)

shared_pids = [cow_pool.allocate() for _ in range(system_pages)]

print(f"nSystem prompt → {system_pages} shared pages: {shared_pids}")

N = 10

user_tables = []

for i in range(N):

table = list(shared_pids)

for pid in shared_pids:

cow_pool.share(pid) # ref count up -- no physical copy

user_tables.append(table)

saved_mb = (system_pages * N - system_pages) * PAGE_SIZE * KV_MB_PER_TOKEN

print(f"nStoring system prompt for {N} requests:")

print(f" Naive : {system_pages * N} pages ({system_pages * N * PAGE_SIZE * KV_MB_PER_TOKEN:.1f} MB)")

print(f" CoW : {system_pages} pages ({system_pages * PAGE_SIZE * KV_MB_PER_TOKEN:.1f} MB)")

print(f" Saved : {saved_mb:.1f} MB")

old_pid = user_tables[3][-1]

new_pid = cow_pool.cow_copy(old_pid)

user_tables[3][-1] = new_pid

print(f"nReq-3 diverges → CoW: old page {old_pid} → new page {new_pid}")

print(f"All other {N-1} requests still share page {old_pid} unaffected")

Two functions are defined to measure the utility under each method for different cluster sizes.

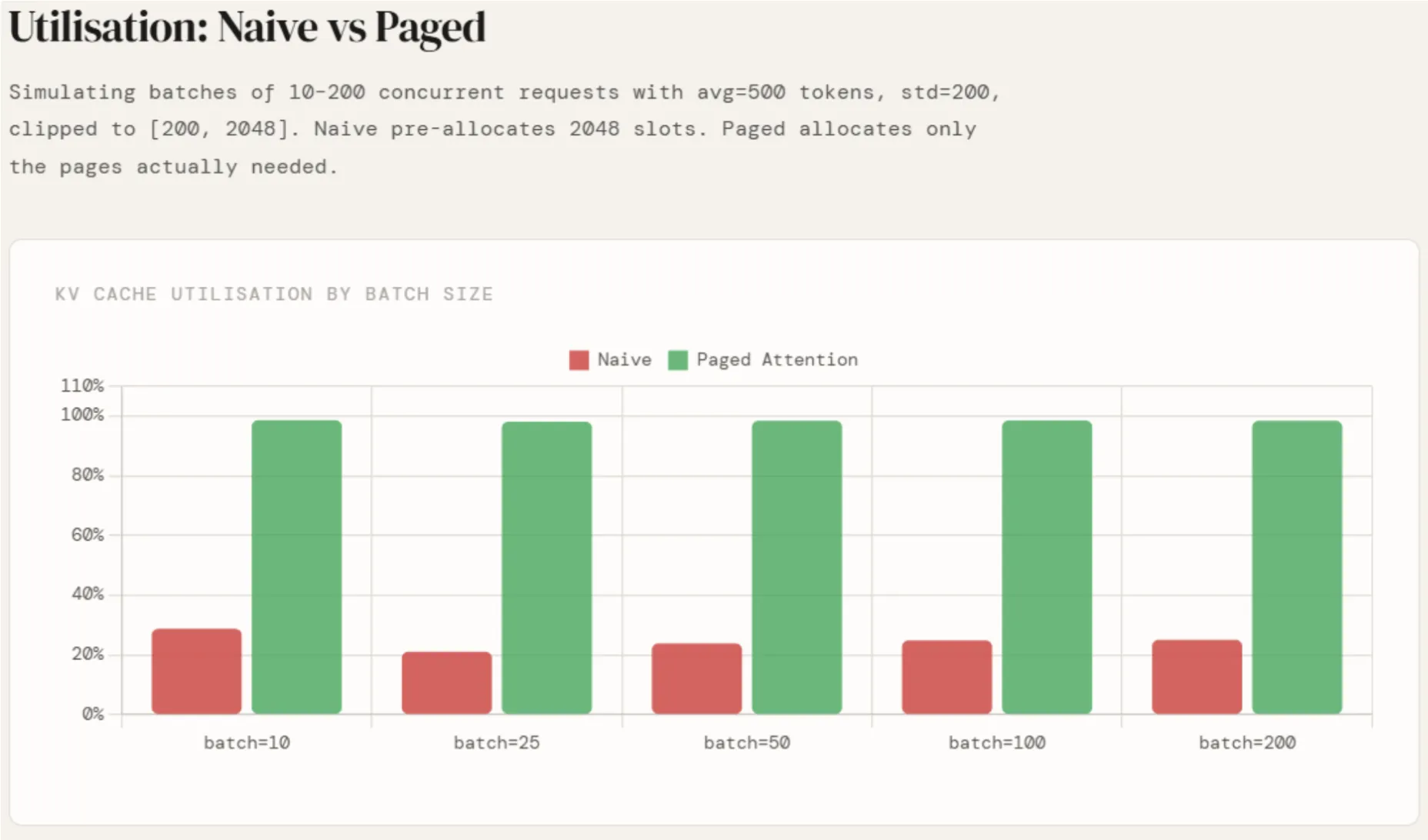

naive_utilization draws the token count from a normal distribution with avg=500 and std=200, fitted to [200, 2048]. This shows a realistic production distribution – most answers fall between 200 and 800 tokens, sometimes longer. For each request, a full 2048 slot block is preallocated regardless. The usage is real_tokens_sum / (2048 × n) — the ratio of what was written to what was reserved.

pageged_utilization takes the same actual token count but calculates how many pages each request would require – ceiling(tokens / 16). The only waste is the unfilled tail of the last page of each request, which is worth 8 tokens. Usage is real_tokens_sum / (allocated_pages × 16).

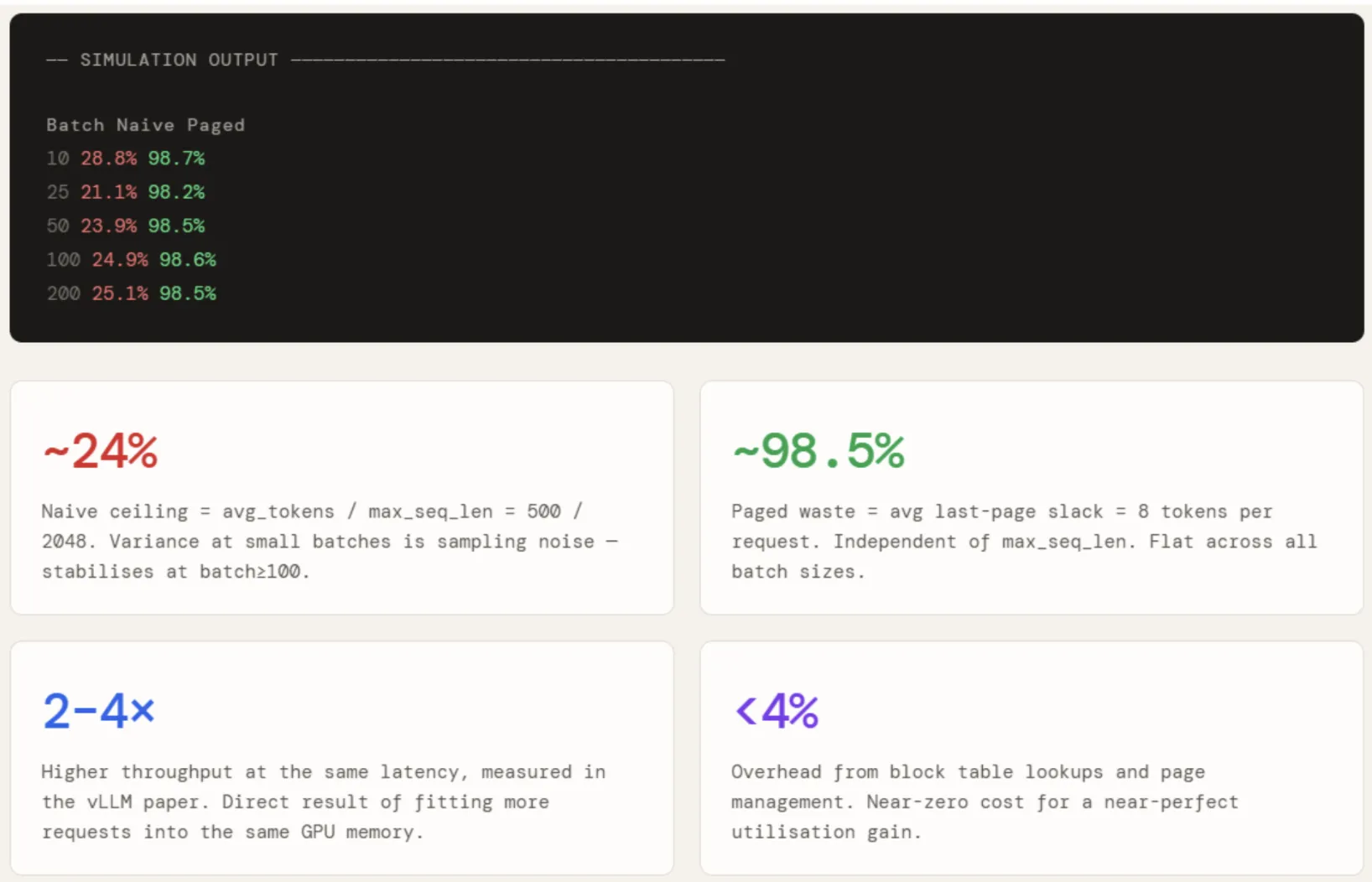

Results are applied to all batch sizes of 10, 25, 50, 100, and 200. Non-specific usage is around 24% for all batch sizes – with some variation in small batches due to sample noise – which is exactly avg / max_seq = 500 / 2048. It does not improve with the scale because the situation does not improve.

Page utilization remains flat at ~98.5% regardless of cluster size, because the waste per request is bound to one partial page and is not at all proportional to max_seq_len. The gap between these two numbers – about 74 points – is what allows vLLM to fit 2-4x the same requests in the same GPU memory.

print("n" + "=" * 60)

print("SECTION 4 -- Utilisation: Naive vs Paged")

print("=" * 60)

def naive_utilisation(n, max_seq=2048, avg=500, std=200):

actual = np.clip(np.random.normal(avg, std, n).astype(int), 200, max_seq)

return actual.sum() / (max_seq * n) * 100, actual

def paged_utilisation(actual_tokens, page_size=PAGE_SIZE):

pages = np.ceil(actual_tokens / page_size).astype(int)

return actual_tokens.sum() / (pages * page_size).sum() * 100

batch_sizes = [10, 25, 50, 100, 200]

naive_u, paged_u = [], []

print(f"n {'Batch':>6} {'Naive':>8} {'Paged':>8}")

for bs in batch_sizes:

nu, actual = naive_utilisation(bs)

pu = paged_utilisation(actual)

naive_u.append(nu)

paged_u.append(pu)

print(f" {bs:>6} {nu:>7.1f}% {pu:>7.1f}%")

Check it out The complete Notebook is here. Also, feel free to follow us Twitter and don’t forget to join our 120k+ ML SubReddit and Subscribe to Our newspaper. Wait! are you on telegram? now you can join us on telegram too.

I am a Civil Engineering Graduate (2022) from Jamia Millia Islamia, New Delhi, and I am very interested in Data Science, especially Neural Networks and its application in various fields.