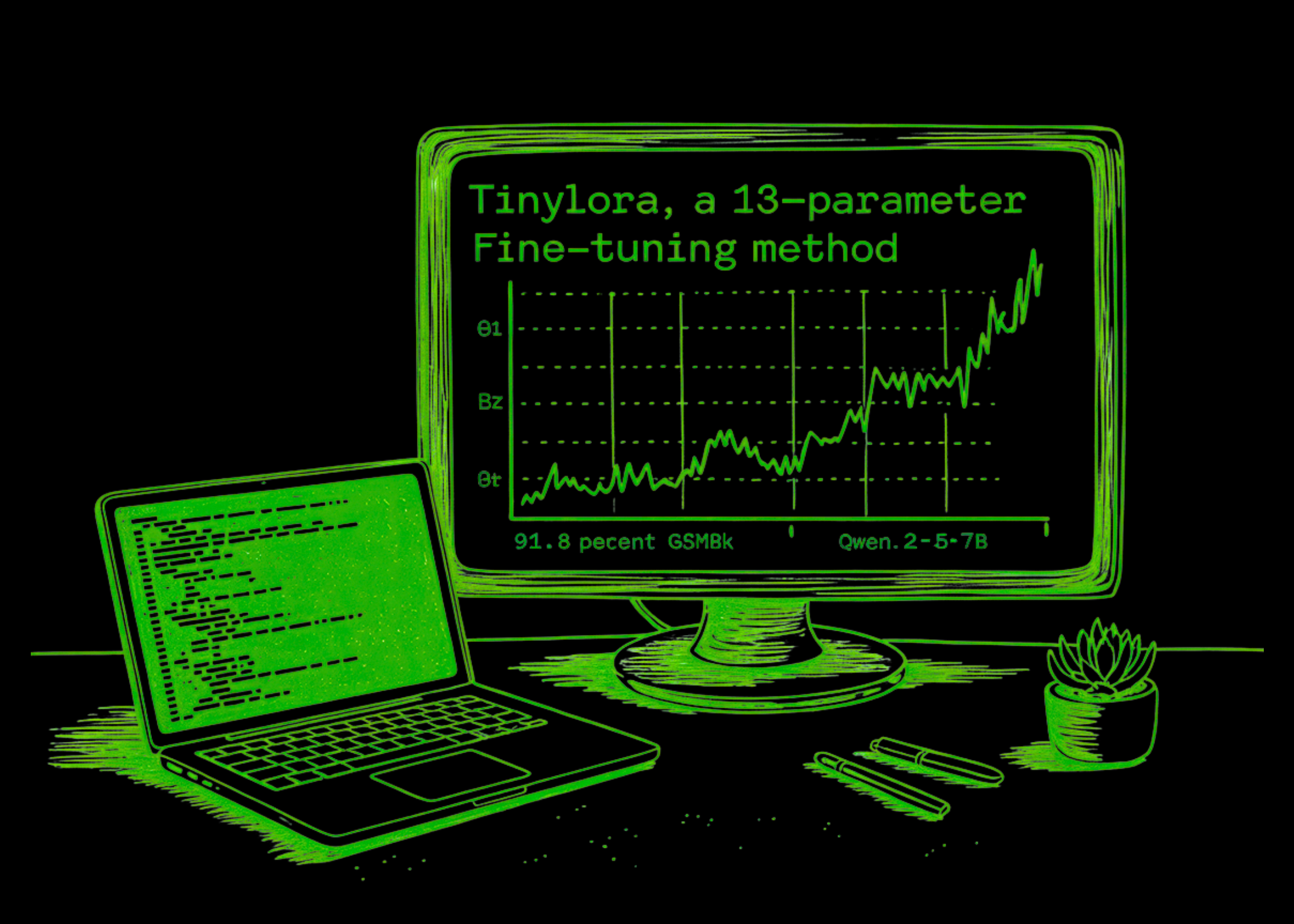

This AI Paper Introduces TinyLoRA, a 13-Parameter Fine-Tuning Method That Achieves 91.8 Percent on GSM8K with Qwen2.5-7B

Researchers from FAIR at Meta, Cornell University, and Carnegie Mellon University showed that large language models (LLMs) can learn to reason using a remarkably small number of trained parameters. The research team presents TinyLoRA, a parameterization that can scale down to a single trainable parameter under extreme sharing settings. Applying this method to a Qwen2.5-7B-Instruct backbone, the research team achieved 91.8% accuracy on the GSM8K benchmark with only 13 parameters, a total of just 26 bytes in bf16.

Overcoming Limitations of Standard LoRA

Standard Low-Rank Adaptation (LoRA) adapts a frozen linear layer W ∈ R^{d×k} using trainable matrices A ∈ R^{d×r} and B ∈ R^{r×k}. The number of trainable parameters in standard LoRA scales with the layer width and the rank, leaving a non-trivial lower bound even at rank 1. For a model like Llama3-8B, this minimum update size is approximately 3 million parameters.
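To make the rank-1 lower bound concrete, the sketch below counts LoRA parameters as r × (d + k) per adapted layer. The layer shapes are assumptions about a Llama-3-8B-style model (they are not given in this article), and the choice to adapt all seven linear modules per block is also an assumption.

```python
# Rank-r LoRA adds A (d x r) and B (r x k) to each adapted linear layer,
# so each layer contributes r * (d + k) trainable parameters.
def lora_params(d: int, k: int, r: int = 1) -> int:
    return r * (d + k)

# ASSUMED Llama-3-8B-style shapes (not from the article): hidden width 4096,
# key/value width 1024, MLP width 14336, 32 transformer blocks.
HIDDEN, KV, MLP, LAYERS = 4096, 1024, 14336, 32
MODULE_SHAPES = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, KV),
    "v_proj": (HIDDEN, KV),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, MLP),
    "up_proj": (HIDDEN, MLP),
    "down_proj": (MLP, HIDDEN),
}

per_layer = sum(lora_params(d, k, r=1) for d, k in MODULE_SHAPES.values())
total_rank1 = per_layer * LAYERS
print(f"rank-1 LoRA parameters: {total_rank1:,}")
```

Under these assumed shapes the count lands in the low millions, the same order of magnitude as the roughly 3 million figure quoted above.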

TinyLoRA bypasses this by building on LoRA-XS, which uses the truncated Singular Value Decomposition (SVD) of the frozen weights. While LoRA-XS usually requires at least one parameter per adapted module, TinyLoRA replaces the trainable matrix with a low-dimensional trainable vector v ∈ R^{u} that is projected through a fixed random tensor P ∈ R^{u×r×r}.

The update rule is defined as:

$$W' = W + U \Sigma \left( \sum_{i=1}^{u} v_{i} P_{i} \right) V^{\top}$$
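As a rough illustration of this rule, the NumPy sketch below applies it to a small random matrix. The function name `tinylora_update`, the toy shapes, and the zero initialization of v are assumptions for illustration, not details confirmed by the paper.

```python
import numpy as np

def tinylora_update(W, v, P, r):
    """Return W' = W + U_r Sigma_r (sum_i v_i P_i) V_r^T (hypothetical helper)."""
    # Truncated SVD of the frozen weight; in practice this would be computed once and cached.
    U, S, Vt = np.linalg.svd(W, full_matrices=False)
    U_r, S_r, Vt_r = U[:, :r], S[:r], Vt[:r, :]
    # Mix the fixed random r x r matrices with the trainable vector v.
    R = np.tensordot(v, P, axes=1)  # shapes: (u,) with (u, r, r) -> (r, r)
    return W + U_r @ np.diag(S_r) @ R @ Vt_r

rng = np.random.default_rng(0)
d, k, r, u = 8, 6, 2, 3               # toy sizes, chosen only for the demo
W = rng.standard_normal((d, k))       # frozen weight
P = rng.standard_normal((u, r, r))    # fixed random tensor, never trained
v = np.zeros(u)                       # the only trainable values (zero init is an assumption)

W_start = tinylora_update(W, v, P, r)
W_moved = tinylora_update(W, np.array([0.1, -0.2, 0.05]), P, r)
```

With v at zero the layer is unchanged, and any nonzero v produces an update whose rank is at most r, since the change is confined to the top-r singular directions of W.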

Using a weight tying factor (n_tie), the number of trainable parameters scales as O(nmu/n_tie), which allows updates to shrink down to a single parameter when all modules in all layers share the same vector.
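The scaling can be checked with a few lines of arithmetic. This sketch reads n as the number of layers and m as the number of adapted modules per layer, which is an assumption, as are the helper names, the rounding up of partial groups, and the 28 × 7 module layout.

```python
import math

def tinylora_trainable_params(n_layers, modules_per_layer, u, n_tie):
    """Trainable scalars when each group of n_tie modules shares one u-dim vector."""
    n_modules = n_layers * modules_per_layer
    n_groups = math.ceil(n_modules / n_tie)  # rounding up is an assumption
    return n_groups * u

def storage_bytes(n_params, bytes_per_param=2):
    """Bytes needed to store the update; 2 bytes per value corresponds to bf16."""
    return n_params * bytes_per_param

# ASSUMED layout for illustration only: 28 layers with 7 adapted modules each.
N_LAYERS, MODULES_PER_LAYER = 28, 7

no_tying = tinylora_trainable_params(N_LAYERS, MODULES_PER_LAYER, u=1, n_tie=1)
full_tying = tinylora_trainable_params(N_LAYERS, MODULES_PER_LAYER, u=1, n_tie=N_LAYERS * MODULES_PER_LAYER)

print(no_tying, full_tying)   # one scalar per module vs. one scalar for the whole model
print(storage_bytes(13))      # a 13-parameter update stored in bf16
```

The last line reproduces the 26-byte figure for a 13-parameter update stored in bf16; the same update in fp32 would take 52 bytes.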

Reinforcement Learning: A Catalyst for Tiny Updates

The core finding of the research is that Reinforcement Learning (RL) works much better than Supervised Finetuning (SFT) at very low parameter counts. The research team reports that models trained with SFT need updates 100 to 1,000 times larger to achieve the same performance as those trained with RL.

This gap is attributed to the 'information density' of the training signal. SFT forces the model to absorb a lot of information, including stylistic noise and irrelevant properties of human demonstrations, because its objective treats all tokens as equally informative. In contrast, RL (specifically Group Relative Policy Optimization, or GRPO) provides a sparser but cleaner signal. Because rewards are binary (e.g., an exact match on a math answer), reward-relevant features correlate with the signal while irrelevant variance is canceled out by resampling.
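The binary-reward signal described here can be sketched in a few lines. This is a minimal illustration of group-relative advantages in the style of GRPO, not the research team's training code; the function names, the sample answers, and the use of the population standard deviation are assumptions.

```python
from statistics import mean, pstdev

def exact_match_reward(predicted: str, target: str) -> float:
    """Binary reward: 1.0 for an exact match on the final answer, else 0.0."""
    return 1.0 if predicted.strip() == target.strip() else 0.0

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the other samples drawn for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption for this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hypothetical final answers sampled for one prompt whose correct answer is "72".
samples = ["72", "68", "72 ", "70"]
rewards = [exact_match_reward(s, "72") for s in samples]
advantages = group_relative_advantages(rewards)
print(rewards, advantages)
```

Only correctness moves the advantage: two samples that differ in style but reach the same answer receive the same value, and a group where every sample gets the same reward contributes no signal at all.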

Optimization Guidelines for Devs

The research team identified several strategies for maximizing the effectiveness of tiny updates:

- Optimal Frozen Rank (r): The analysis showed that a frozen SVD rank of r=2 was optimal. Higher ranks introduce too many degrees of freedom, making it difficult to optimize a small trainable vector.

- Tiling vs. Structured Sharing: The research team compared 'structured' sharing (modules of the same type share parameters) with 'tiling' (nearby modules of similar depth share parameters). Surprisingly, tiling worked better, showing no inherent advantage to forcing parameter sharing exclusively between specific projections such as Query or Value modules.

- Precision: In bit-constrained regimes, storing the parameters in fp32 showed the best performance bit-for-bit, even accounting for its larger footprint relative to bf16 or fp16.
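The two sharing schemes can be pictured as two ways of assigning each module to a shared-vector group. The sketch below is one plausible reading of 'structured' and 'tiled' sharing; the function names and the toy four-layer layout are hypothetical and not taken from the paper.

```python
def structured_groups(n_layers, module_types):
    """Modules of the same type share one vector, regardless of depth."""
    return {
        (layer, m): module_types.index(m)
        for layer in range(n_layers)
        for m in module_types
    }

def tiled_groups(n_layers, module_types, n_tie):
    """Walk modules in depth order; each run of n_tie consecutive modules shares a vector."""
    groups = {}
    position = 0
    for layer in range(n_layers):
        for m in module_types:
            groups[(layer, m)] = position // n_tie
            position += 1
    return groups

TYPES = ["q_proj", "k_proj", "v_proj", "o_proj"]  # toy subset of module types
structured = structured_groups(4, TYPES)
tiled = tiled_groups(4, TYPES, n_tie=4)

print(structured[(0, "q_proj")], structured[(3, "q_proj")])  # same group across depth
print(tiled[(0, "q_proj")], tiled[(0, "o_proj")])            # same group within a layer
```

Both assignments here use four groups, so the trainable parameter count is identical; only which modules are tied together differs.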

Benchmark Performance

The research team reports that Qwen-2.5 models generally needed around 10x fewer updated parameters than LLaMA-3 to reach the same performance in their setup.

| Model | Trainable Parameters | GSM8K Pass@1 |
| --- | --- | --- |
| Qwen2.5-7B-Instruct (Base) | 0 | 88.2% |
| Qwen2.5-7B-Instruct | 1 | 82.0% |
| Qwen2.5-7B-Instruct | 13 | 91.8% |
| Qwen2.5-7B-Instruct | 196 | 92.2% |
| Qwen2.5-7B-Instruct (Full FT) | ~7.6 billion | 91.7% |

On harder benchmarks such as MATH500 and AIME24, 196-parameter updates for Qwen2.5-7B-Instruct retained 87% of the overall performance improvement of full finetuning across six difficult math benchmarks.

Key Takeaways

- Extreme Parameter Efficiency: It is possible to train a Qwen2.5-7B-Instruct model to 91.8% accuracy on the GSM8K math benchmark using only 13 parameters (26 bytes in total).

- RL Advantage: Reinforcement Learning (RL) is far more efficient than Supervised Finetuning (SFT) in low-capacity settings; SFT requires updates 100 to 1,000x larger to reach the same performance level as RL.

- TinyLoRA Framework: The research team developed TinyLoRA, a new parameterization that uses weight tying and random projections to scale low-rank adapters down to a single trainable parameter.

- Optimizing the "Micro-Update": For these tiny updates, fp32 precision works better than half-precision formats, and "tiling" (sharing parameters across the depth of the model) outperforms structured sharing by module type.

- Scaling Trends: As models grow, they become more 'programmable' with fewer absolute parameters, suggesting that models with billions of parameters can be tuned for complex tasks using just a few bytes.
