Meet A-Evolve: The PyTorch Moment for Agentic AI Systems, Replacing Manual Tuning with Automated State Evolution and Self-Adaptation

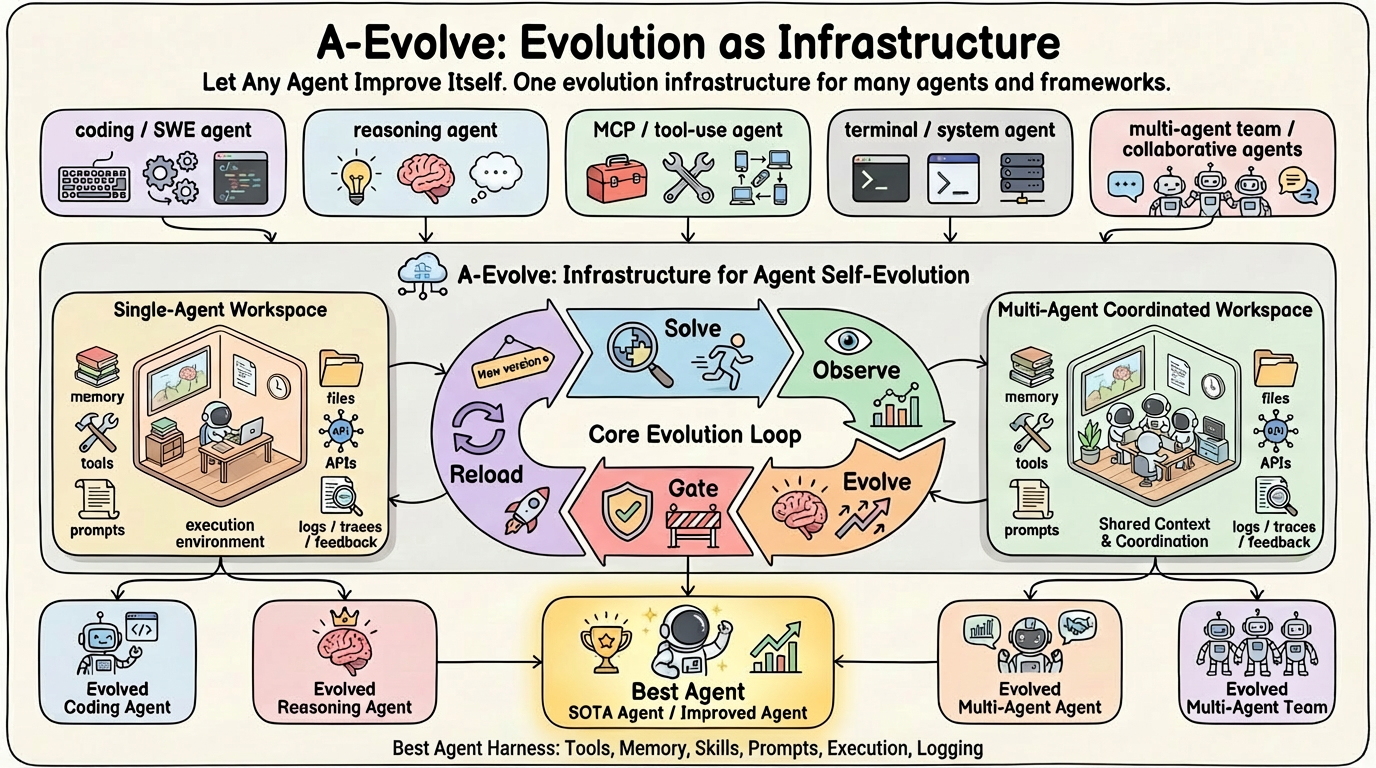

A research team associated with Amazon has released A-Evolve, a universal infrastructure designed to automate the development of autonomous AI agents. The framework aims to replace the ‘manual harness engineering’ that currently characterizes agent development with a systematic, automated evolution process.

The project is described as a potential ‘PyTorch moment’ for agentic AI. Just as PyTorch moved deep learning away from manual gradient computation, A-Evolve seeks to move agent design away from hand-tuned prompts and toward a scalable framework in which agents improve their own code and logic through iterative cycles.

The Problem: The Manual Tuning Bottleneck

In current workflows, software and AI developers building autonomous agents often find themselves stuck in a trial-and-error loop. When an agent fails a task, such as resolving a GitHub issue on SWE-bench, the developer must manually inspect the logs, identify the logic failure, and then rewrite the prompt or add a new tool.

A-Evolve is designed to automate this loop. The framework's core premise is that an agent can be treated as a collection of mutable artifacts that evolve based on structured feedback from their environment. This can turn a basic ‘seed’ agent into a high-performing one with ‘zero human intervention,’ a goal achieved by delegating the tuning process to an automated engine.

The Architecture: The Agent Workspace and Manifest

A-Evolve introduces a standardized directory structure called the Agent Workspace. This workspace defines the agent's ‘DNA’ through five core components:

- manifest.yaml: A central configuration file that defines the agent’s metadata, entry points, and operational parameters.

- prompts/: System prompts and instructional logic that guide the LLM’s reasoning.

- skills/: Reusable code snippets or discrete functions that the agent can learn to execute.

- tools/: Configurations for external interfaces and APIs.

- memory/: Episodic data and historical context used to inform future actions.
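To make the layout concrete, the sketch below scaffolds a workspace with the five components above using only the Python standard library. The directory names come from the article; the manifest keys (`name`, `entrypoint`, `max_steps`) and the helper `scaffold_workspace` are hypothetical illustrations, not A-Evolve's actual schema or API.

```python
from pathlib import Path
import tempfile

# Component directories named in the article; everything else here is illustrative.
COMPONENT_DIRS = ("prompts", "skills", "tools", "memory")

# Hypothetical manifest content: these keys are assumptions, not A-Evolve's real schema.
EXAMPLE_MANIFEST = """\
name: my_agent
entrypoint: agent.py
max_steps: 50
"""


def scaffold_workspace(root: Path) -> list[str]:
    """Create an empty agent workspace and return the sorted names of its entries."""
    root.mkdir(parents=True, exist_ok=True)
    (root / "manifest.yaml").write_text(EXAMPLE_MANIFEST, encoding="utf-8")
    for name in COMPONENT_DIRS:
        (root / name).mkdir(exist_ok=True)
    return sorted(p.name for p in root.iterdir())


if __name__ == "__main__":
    # Build the workspace in a throwaway directory and list what was created.
    with tempfile.TemporaryDirectory() as tmp:
        print(scaffold_workspace(Path(tmp) / "my_agent"))
```

Because the agent's whole state lives in plain files, any change to it can be diffed, reviewed, and versioned like ordinary source code.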

The Evolution Engine operates directly on these files. Rather than simply modifying a prompt in memory, the engine modifies the actual code and configuration files within the workspace to improve performance.

The Five-Stage Evolution Loop

The framework's rigor lies in its internal logic, which follows a structured five-stage loop to ensure that improvements are both effective and stable:

- Solve: The agent attempts to complete tasks within the target environment (BYOE).

- Observe: The system generates structured logs and captures benchmark feedback.

- Evolve: The Evolution Engine analyzes the observations to identify failure points and modifies the files in the Agent Workspace.

- Gate: The system validates the new mutation against a set of fitness functions to ensure that it does not cause regressions.

- Reload: The agent is re-initialized with the updated workspace, and the cycle begins again.
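The five stages above can be sketched as a toy loop. This is a minimal illustration under stated assumptions, not A-Evolve's implementation: the `Workspace` class, the skill-matching "agent", and every function name here are hypothetical stand-ins, and a real Evolve stage would edit files rather than add a string to a set.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Stand-in for the on-disk agent workspace (here just a set of skill names)."""
    skills: set[str] = field(default_factory=set)


def solve(ws: Workspace, tasks: list[str]) -> dict[str, bool]:
    """Solve: a toy agent passes a task only if it has a matching skill."""
    return {task: task in ws.skills for task in tasks}


def observe(results: dict[str, bool]) -> list[str]:
    """Observe: collect the failed tasks as structured feedback."""
    return [task for task, passed in results.items() if not passed]


def evolve(ws: Workspace, failures: list[str]) -> Workspace:
    """Evolve: propose a candidate workspace that adds a skill for the first failure."""
    candidate = Workspace(skills=set(ws.skills))
    if failures:
        candidate.skills.add(failures[0])
    return candidate


def gate(old: Workspace, new: Workspace, tasks: list[str]) -> bool:
    """Gate: accept the candidate only if its pass count does not regress."""
    def passed(ws: Workspace) -> int:
        return sum(solve(ws, tasks).values())
    return passed(new) >= passed(old)


def run_loop(ws: Workspace, tasks: list[str], cycles: int) -> Workspace:
    """Run the Solve -> Observe -> Evolve -> Gate -> Reload cycle a fixed number of times."""
    for _ in range(cycles):
        failures = observe(solve(ws, tasks))
        candidate = evolve(ws, failures)
        if gate(ws, candidate, tasks):
            ws = candidate  # Reload: the next cycle starts from the accepted workspace
    return ws


if __name__ == "__main__":
    final = run_loop(Workspace(), ["parse_logs", "run_tests", "open_pr"], cycles=3)
    print(sorted(final.skills))
```

The key design point is that the Gate compares the candidate against the current workspace on the same tasks, so a rejected candidate is simply discarded and the loop continues from the last accepted state.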

To ensure reproducibility, A-Evolve integrates with Git. Every mutation is automatically git-tagged (e.g., evo-1, evo-2). If a mutation fails the ‘Gate’ stage or performs poorly in the next cycle, the system can automatically roll back to the last stable version.
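The article does not describe how A-Evolve invokes Git internally, so the following is only a sketch of the general tag-and-rollback pattern using standard git commands. The helpers `tag_cycle` and `roll_back`, the commit message format, and the injectable `run` parameter are all assumptions made for illustration.

```python
import subprocess
from typing import Callable, Sequence

# A runner takes git arguments (without the leading "git") and executes them.
Runner = Callable[[Sequence[str]], None]


def _git(args: Sequence[str]) -> None:
    """Default runner: execute a git command in the current directory."""
    subprocess.run(["git", *args], check=True)


def tag_cycle(cycle: int, run: Runner = _git) -> str:
    """Commit the workspace and tag it evo-<cycle>; returns the tag name."""
    tag = f"evo-{cycle}"
    run(["add", "--all"])
    run(["commit", "--allow-empty", "-m", f"evolution cycle {cycle}"])
    run(["tag", tag])
    return tag


def roll_back(stable_tag: str, run: Runner = _git) -> None:
    """Restore the working tree to the last tag that passed the gate."""
    run(["reset", "--hard", stable_tag])


if __name__ == "__main__":
    # Dry run: record the commands instead of executing them against a real repo.
    issued: list[list[str]] = []

    def record(args: Sequence[str]) -> None:
        issued.append(list(args))

    tag_cycle(1, run=record)
    roll_back("evo-1", run=record)
    for cmd in issued:
        print("git", *cmd)
```

Passing the runner as a parameter keeps the sketch safe to try: the demo only prints the commands, while the default `_git` runner would execute them in the current repository.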

‘Bring Your Own’ (BYO) Modularity

A-Evolve is designed as a modular framework rather than a specific agent model. This allows AI practitioners to swap components according to their specific needs:

- Bring Your Own Agent (BYOA): Support for any architecture, from basic ReAct loops to complex multi-agent systems.

- Bring Your Own Environment (BYOE): Compatible with different environments, including software engineering sandboxes or cloud-based CLI environments.

- Bring Your Own Algorithm (BYO-Algo): Flexibility to use different evolution strategies, such as LLM-driven mutation or Reinforcement Learning (RL).
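One common way to express this kind of pluggability in Python is structural typing with `typing.Protocol`. The sketch below is an assumption about how such seams could look, not A-Evolve's real interfaces: the `Agent`, `Environment`, and `Algorithm` protocols, their method names, and the toy implementations are all hypothetical.

```python
from typing import Protocol


class Agent(Protocol):
    """BYOA: anything that can turn a task into an answer."""
    def act(self, task: str) -> str: ...


class Environment(Protocol):
    """BYOE: supplies tasks and scores answers."""
    def tasks(self) -> list[str]: ...
    def score(self, task: str, answer: str) -> float: ...


class Algorithm(Protocol):
    """BYO-Algo: decides what to change next, given per-task scores."""
    def propose(self, feedback: dict[str, float]) -> str: ...


class UppercaseAgent:
    """Toy agent: answers every task by upper-casing it."""
    def act(self, task: str) -> str:
        return task.upper()


class UppercaseEnv:
    """Toy environment: an answer is correct if it is the upper-cased task."""
    def tasks(self) -> list[str]:
        return ["abc", "def"]

    def score(self, task: str, answer: str) -> float:
        return 1.0 if answer == task.upper() else 0.0


class WorstTaskFirst:
    """Toy algorithm: focus the next change on the lowest-scoring task."""
    def propose(self, feedback: dict[str, float]) -> str:
        return min(feedback, key=feedback.get)


def evaluate(agent: Agent, env: Environment) -> dict[str, float]:
    """Score the agent on every task the environment provides."""
    return {task: env.score(task, agent.act(task)) for task in env.tasks()}


if __name__ == "__main__":
    feedback = evaluate(UppercaseAgent(), UppercaseEnv())
    print(feedback, "-> next focus:", WorstTaskFirst().propose(feedback))
```

Because protocols are checked structurally, the toy classes never inherit from the interfaces; any object with the right methods can be swapped in.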

Benchmark Performance

The A-EVO-Lab team tested the framework using a base Claude-series model across several rigorous benchmarks. The results show that automated evolution can drive agents to top-tier performance:

- MCP-Atlas: Reached 79.4% (#1), a +3.4pp increase. This benchmark specifically evaluates tool-calling capabilities using the Model Context Protocol (MCP) across multiple servers.

- SWE-bench Verified: Achieved 76.8% (~#5), a +2.6pp improvement in resolving real-world software bugs.

- Terminal-Bench 2.0: Reached 76.5% (~#7), representing a +13.0pp increase in command-line proficiency within Dockerized environments.

- SkillsBench: Hit 34.9% (#2), a +15.2pp gain in autonomous skill discovery.

In the MCP-Atlas test, the system evolved a generic 20-line prompt with no initial skills into an agent with five targeted, newly authored skills, which allowed it to reach the top of the leaderboard.
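The reported gains can be turned into implied starting points with simple subtraction. This sketch assumes each "+pp" figure is measured against the same agent before evolution, which the article implies but does not state; the resulting baselines are derived here, not reported by the team.

```python
# (final score in %, reported gain in percentage points), as listed above
REPORTED = {
    "MCP-Atlas": (79.4, 3.4),
    "SWE-bench Verified": (76.8, 2.6),
    "Terminal-Bench 2.0": (76.5, 13.0),
    "SkillsBench": (34.9, 15.2),
}


def implied_baseline(score: float, gain_pp: float) -> float:
    """Baseline = final score minus the gain, both in percentage points."""
    return round(score - gain_pp, 1)


def relative_gain(score: float, gain_pp: float) -> float:
    """Gain expressed as a percentage of the implied baseline."""
    return round(100 * gain_pp / (score - gain_pp), 1)


if __name__ == "__main__":
    for name, (score, gain) in REPORTED.items():
        print(f"{name}: baseline {implied_baseline(score, gain)}%, "
              f"relative gain {relative_gain(score, gain)}%")
```

Read this way, the percentage-point gains are largest where the implied baseline is lowest, which is worth keeping in mind when comparing the four results.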

Implementation

A-Evolve is designed to integrate into existing Python workflows. You provide a base agent, and A-Evolve returns a SOTA agent: 3 lines of code, 0 hours of manual harness engineering, and one infrastructure for any domain and any evolution algorithm. The following snippet shows how to start the evolution process:

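```python
import agent_evolve as ae

# Point the evolver at the agent workspace and the target benchmark.
evolver = ae.Evolver(agent="./my_agent", benchmark="swe-verified")

# Run ten evolution cycles and collect the results.
results = evolver.run(cycles=10)
```

Key Takeaways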

- From Manual to Automated Tuning: A-Evolve shifts the development paradigm from ‘manual harness engineering’ (hand-tuning prompts and tools) to an automated evolutionary process, allowing agents to improve their own logic and code.

- The ‘Agent Workspace’ Standard: The framework treats an agent as a standardized directory of five core components (manifest.yaml, prompts, skills, tools, and memory), giving the Evolution Engine a clean, file-based interface to modify.

- Closed-Loop Evolution with Git: A-Evolve uses a five-stage loop (Solve, Observe, Evolve, Gate, Reload) to ensure stable improvement. Every mutation is git-tagged (e.g., evo-1), which allows full reproducibility and automatic rollback if performance regresses.

- Agnostic ‘Bring Your Own’ Infrastructure: The framework is highly modular, supporting BYOA (Agent), BYOE (Environment), and BYO-Algo (Algorithm). This allows developers to apply any model or evolution strategy to any specific domain.

- Proven SOTA Gains: The infrastructure has already demonstrated state-of-the-art performance, propelling agents to #1 on MCP-Atlas (79.4%) and to high rankings on SWE-bench Verified (~#5) and Terminal-Bench 2.0 (~#7) with zero manual intervention.
