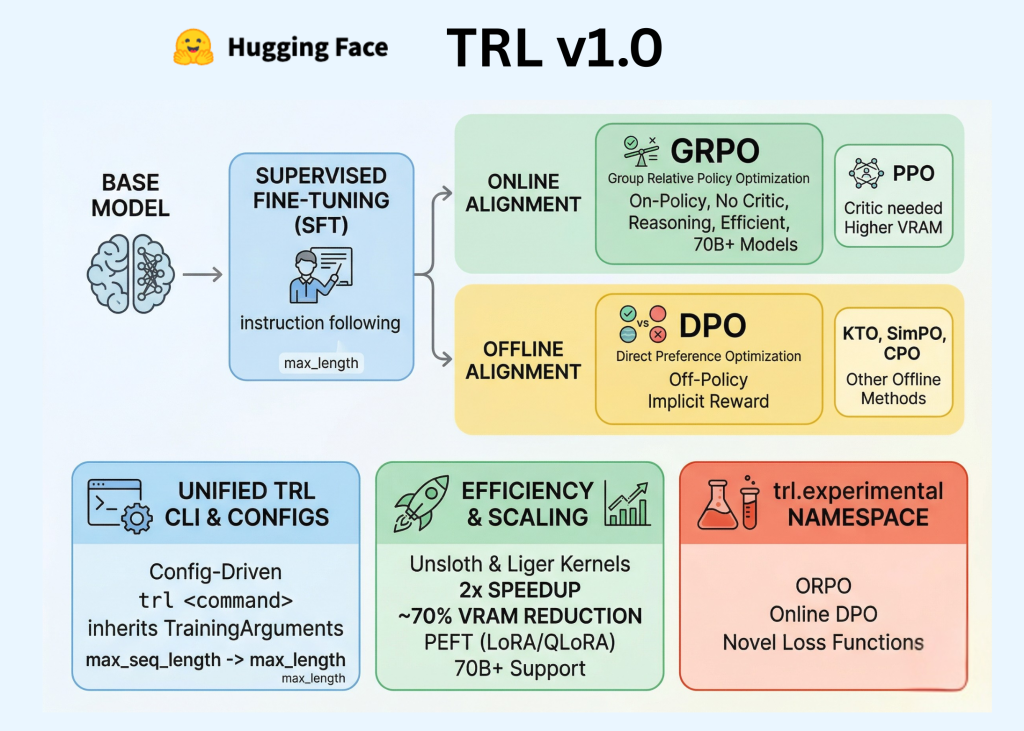

Hugging Face Releases TRL v1.0: A Unified Post-Training Stack for SFT, Reward Modeling, DPO, and GRPO Workflows

Hugging Face has officially released TRL (Transformer Reinforcement Learning) v1.0, marking a significant transition for the library from a research-oriented repository to a stable, production-ready framework. For AI practitioners and developers, this release codifies the post-training pipeline (the key sequence of Supervised Fine-Tuning (SFT), Reward Modeling, and Alignment) into a unified, standardized API.

In the early stages of the LLM boom, post-training was often treated as an experimental ‘dark art.’ TRL v1.0 aims to change that by providing a consistent developer experience built on three main pillars: a dedicated Command Line Interface (CLI), a unified configuration system, and an expanded suite of alignment algorithms including DPO, GRPO, and KTO.

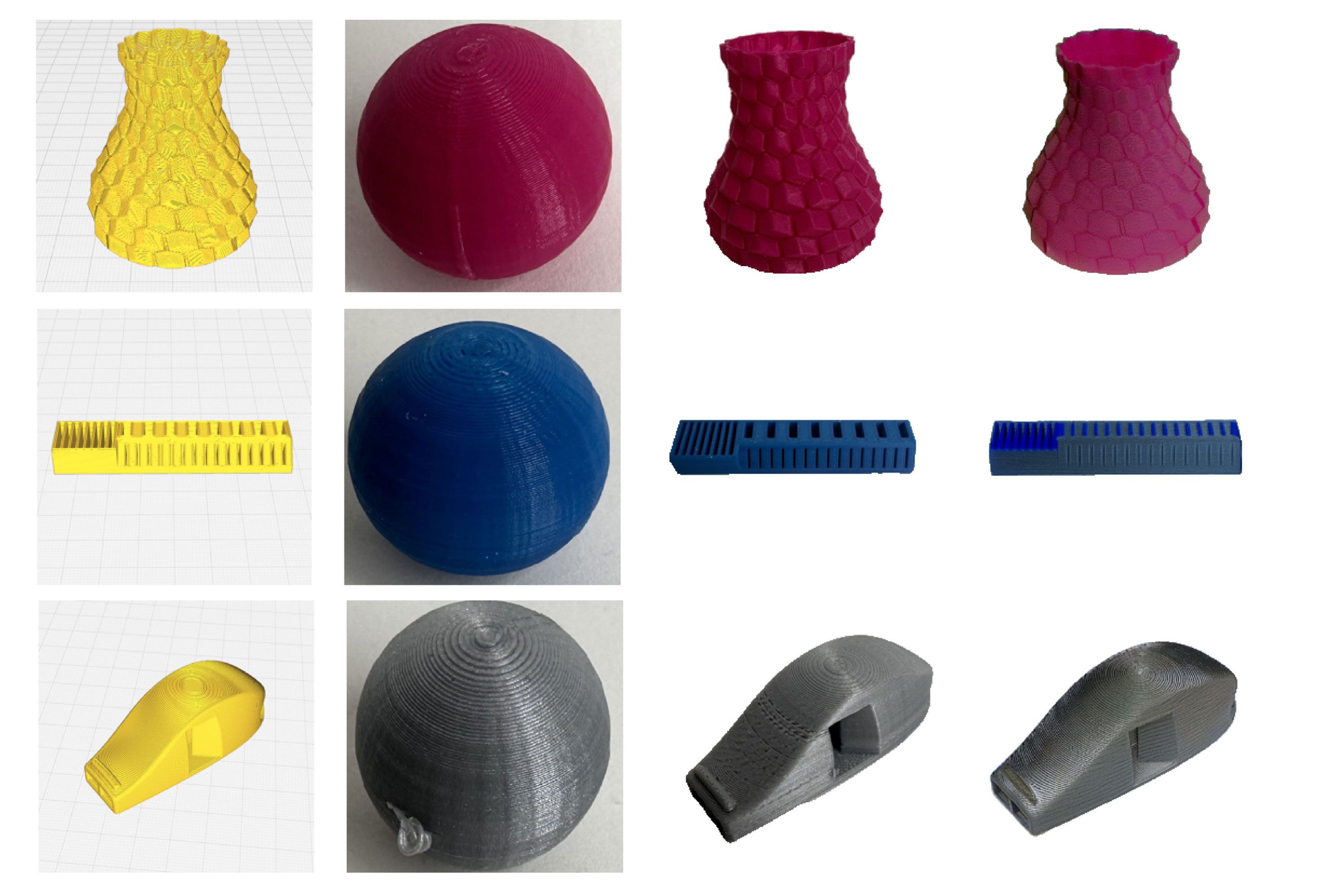

The Unified Post-Training Stack

Post-training is the stage where a pre-trained base model is refined to follow instructions, adopt a specific tone, or demonstrate complex reasoning skills. TRL v1.0 organizes this process into distinct, interoperable stages:

- Supervised Fine-Tuning (SFT): The foundational step in which the model is trained on high-quality instruction-following data to adapt its pre-trained knowledge to a conversational format.

- Reward Modeling: The process of training a separate model to predict human preferences, acting as a ‘judge’ that scores different model responses.

- Alignment (Reinforcement Learning): A final refinement in which the model is optimized to maximize preference scores. This is achieved with “online” methods that generate text during training or “offline” methods that learn from static preference datasets.
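To make the reward-modeling stage concrete, here is a minimal sketch of a pairwise (Bradley-Terry style) objective that is commonly used to train reward models on chosen/rejected pairs. The article does not state which loss TRL uses internally, so treat this as an illustrative assumption; the function name and the scalar scores are hypothetical.

```python
import math


def pairwise_reward_loss(chosen_score: float, rejected_score: float) -> float:
    """Bradley-Terry style loss: -log(sigmoid(chosen_score - rejected_score)).

    Assumption: the reward model emits one scalar score per response.
    """
    margin = chosen_score - rejected_score
    # log(1 + exp(-margin)), written in a form that stays stable for large |margin|
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# The loss shrinks as the 'judge' ranks the chosen answer further above the rejected one.
for chosen, rejected in [(0.0, 2.0), (0.0, 0.0), (2.0, 0.0)]:
    print(chosen, rejected, round(pairwise_reward_loss(chosen, rejected), 4))
```

A lower loss means the scores agree with the human preference; equal scores give a loss of log 2.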

Standardizing the Developer Experience: The TRL CLI

One of the most important updates for software developers is the introduction of a robust TRL CLI. Previously, developers had to write a lot of boilerplate code and custom training loops for every experiment. TRL v1.0 introduces a configuration-driven approach that uses YAML files or direct command-line arguments to manage the training lifecycle.

The trl Command

The CLI provides standardized entry points for the main training stages. For example, starting an SFT run can now be done with a single command:

trl sft --model_name_or_path meta-llama/Llama-3.1-8B --dataset_name openbmb/UltraInteract --output_dir ./sft_results

This interface is integrated with Hugging Face Accelerate, which allows the same command to scale across different hardware configurations. Whether it runs on a single local GPU or on a multi-node cluster using Fully Sharded Data Parallel (FSDP) or DeepSpeed, the CLI handles the underlying distribution logic.
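For teams that script their runs, the same command can be assembled programmatically. The sketch below only builds the argument list from a dictionary; `build_trl_command` is a hypothetical helper, not part of TRL, and the only flags used are the three shown in the article's example.

```python
import shlex


def build_trl_command(stage: str, options: dict) -> list:
    """Turn a stage name and an options mapping into an argv list for `trl`.

    Hypothetical helper for illustration; it does not validate flag names.
    """
    argv = ["trl", stage]
    for key, value in options.items():
        argv.extend([f"--{key}", str(value)])
    return argv


sft_options = {
    "model_name_or_path": "meta-llama/Llama-3.1-8B",
    "dataset_name": "openbmb/UltraInteract",
    "output_dir": "./sft_results",
}
command = build_trl_command("sft", sft_options)
print(shlex.join(command))
```

With TRL installed, the resulting list could be passed to `subprocess.run(command, check=True)`; keeping the options in a dictionary makes it easy to version them alongside the code.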

TRLConfig and TrainingArguments

Technical parity with the core transformers library is a cornerstone of this release. Each trainer now has a corresponding configuration class, such as SFTConfig, DPOConfig, or GRPOConfig, that inherits directly from transformers.TrainingArguments.
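The practical effect of this inheritance is that a trainer-specific config accepts every generic training argument plus its own extras. The sketch below mimics the pattern with plain dataclasses so it runs without any dependencies; both classes and all field names and defaults are hypothetical stand-ins, not the real transformers or TRL definitions.

```python
from dataclasses import asdict, dataclass


@dataclass
class BaseTrainingArgs:
    """Hypothetical stand-in for transformers.TrainingArguments."""
    output_dir: str = "./out"
    learning_rate: float = 5e-5
    num_train_epochs: float = 3.0


@dataclass
class SFTConfigSketch(BaseTrainingArgs):
    """Hypothetical stand-in for a trainer-specific config such as SFTConfig."""
    packing: bool = False   # illustrative trainer-specific option
    max_length: int = 1024  # illustrative trainer-specific option


# Generic and trainer-specific options are set in one place.
config = SFTConfigSketch(output_dir="./sft_results", learning_rate=2e-5, packing=True)
print(asdict(config))
```

Because the subclass is still an instance of the base class, any tooling written against the generic arguments keeps working with the specialized config.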

Alignment Algorithms: Choosing the Right Objective

TRL v1.0 includes several alignment methods, categorized by their data requirements and computational overhead.

| Algorithm | Type | Technical characteristics |
| --- | --- | --- |
| PPO | Online | Requires Policy, Reference, Reward, and Value (Critic) models. Highest VRAM footprint. |
| DPO | Offline | Learns from preference pairs (chosen vs. rejected) without a separate reward model. |
| GRPO | Online | An on-policy approach that removes the Value (Critic) model by using group-relative rewards. |
| KTO | Offline | Learns from binary “thumbs up/down” signals instead of paired preferences. |
| ORPO (Exp.) | Experimental | A one-step method that combines SFT and alignment using an odds-ratio loss. |
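Two rows of the table can be illustrated with a few lines of arithmetic. The sketch below shows the standard DPO loss for a single preference pair and a group-relative advantage computation in the spirit of GRPO. It assumes sequence log-probabilities and rewards are already available as plain floats; the function names, `beta`, and `eps` are illustrative choices, and TRL's actual implementations may differ in details such as how the standard deviation is computed.

```python
import math
from statistics import mean, pstdev


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one preference pair, given summed sequence log-probs."""
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_ratio - rejected_ratio)
    # -log(sigmoid(logits)) in a numerically stable form
    return max(-logits, 0.0) + math.log1p(math.exp(-abs(logits)))


def grpo_advantages(rewards, eps=1e-6):
    """Group-relative advantages: score each sample against its own group.

    The group mean acts as the baseline, so no learned critic is needed.
    """
    baseline = mean(rewards)
    spread = pstdev(rewards)
    return [(r - baseline) / (spread + eps) for r in rewards]


# The policy prefers the chosen answer more than the reference does, so the loss drops below log 2.
print(round(dpo_loss(-8.0, -14.0, -10.0, -12.0), 4))
# Four completions for one prompt, rewarded 1 or 0.
print(grpo_advantages([1.0, 0.0, 1.0, 0.0]))
```

DPO only needs the frozen reference model's log-probabilities, while the GRPO-style baseline comes from sampling several completions per prompt, which is why neither requires a separate value model.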

Efficiency and Performance Scaling

To accommodate multi-billion-parameter models on consumer or mid-range enterprise hardware, TRL v1.0 integrates several efficiency-focused technologies:

- PEFT (Parameter-Efficient Fine-Tuning): Native support for LoRA and QLoRA enables fine-tuning by updating only a small fraction of the model's weights, greatly reducing memory requirements.

- Unsloth Integration: TRL v1.0 uses specialized kernels from the Unsloth library. For SFT and DPO workflows, this integration can yield a 2x increase in training speed and up to a 70% reduction in memory usage compared to standard implementations.

- Data Packing: The SFTTrainer supports constant-length packing. This technique concatenates many short sequences into a single block of fixed length (e.g., 2048 tokens), ensuring that almost every token being processed contributes to the gradient update and reducing the computation wasted on padding.
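The packing idea from the last item can be shown with a toy example. This is a minimal sketch, not the SFTTrainer implementation: it concatenates token lists into one stream and slices fixed-length blocks, ignoring details a real implementation would handle, such as separator tokens between examples and attention masking. The function names are hypothetical.

```python
def pack_sequences(sequences, block_size):
    """Concatenate token lists and cut the stream into fixed-length blocks."""
    stream = [token for seq in sequences for token in seq]
    usable = len(stream) - len(stream) % block_size  # drop the incomplete tail
    return [stream[i:i + block_size] for i in range(0, usable, block_size)]


def padded_token_count(sequences, block_size):
    """Tokens processed if every sequence is padded to block_size instead.

    Assumes no sequence is longer than block_size.
    """
    return len(sequences) * block_size


examples = [[1, 2, 3], [4, 5], [6, 7, 8, 9], [10, 11, 12]]
blocks = pack_sequences(examples, block_size=4)
real_tokens = sum(len(seq) for seq in examples)

print(blocks)
print("padded tokens:", padded_token_count(examples, 4), "real tokens:", real_tokens)
```

In this toy case padding would process 16 token positions to train on 12 real tokens, while packing processes exactly 12, all of which contribute to the gradient.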

The trl.experimental Namespace

The Hugging Face team introduced the trl.experimental namespace to separate production-stable tools from research that is still in active development. This allows the core library to remain backward compatible while continuing to host cutting-edge work.

Features currently in the experimental track include:

- ORPO (Odds Ratio Preference Optimization): An emerging approach that attempts to bypass the SFT phase by applying alignment directly to the base model.

- Online DPO Trainers: DPO variants that incorporate real-time generation.

- Novel Loss Functions: Experimental objectives that target specific model behaviors, such as reducing verbosity or improving mathematical reasoning.
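To give a sense of how ORPO folds alignment into the SFT objective, here is a minimal sketch of its odds-ratio formulation: the usual negative log-likelihood on the chosen response plus a weighted penalty based on the odds ratio between chosen and rejected responses. It assumes length-averaged log-probabilities as plain floats; the function names and the weight `lam` are illustrative, and this is not the trl.experimental code.

```python
import math


def log_odds(avg_logp: float) -> float:
    """log(p / (1 - p)) for p = exp(avg_logp); requires avg_logp < 0."""
    return avg_logp - math.log1p(-math.exp(avg_logp))


def orpo_loss(nll_chosen, avg_logp_chosen, avg_logp_rejected, lam=0.1):
    """SFT loss on the chosen response plus a weighted odds-ratio penalty."""
    log_odds_ratio = log_odds(avg_logp_chosen) - log_odds(avg_logp_rejected)
    # -log(sigmoid(log_odds_ratio)), numerically stable
    penalty = max(-log_odds_ratio, 0.0) + math.log1p(math.exp(-abs(log_odds_ratio)))
    return nll_chosen + lam * penalty


# The penalty is small when the chosen response is much more likely than the rejected one.
print(round(orpo_loss(1.0, math.log(0.8), math.log(0.2)), 4))
print(round(orpo_loss(1.0, math.log(0.2), math.log(0.8)), 4))
```

Because both terms are computed from the same forward pass over the policy, no reference model or separate SFT stage is involved in this formulation.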

Key Takeaways

- TRL v1.0 standardizes LLM post-training with a unified CLI, configuration system, and trainer workflow.

- The trl.experimental namespace separates the stable core from experimental methods such as ORPO and KTO.

- GRPO reduces the overhead of RL training by removing the separate critic model used in PPO.

- TRL combines PEFT, data packing, and Unsloth to improve training efficiency and memory utilization.

- The library makes SFT, reward modeling, and alignment more productive for development teams.


Michal Sutter is a data science expert with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data sets into actionable insights.