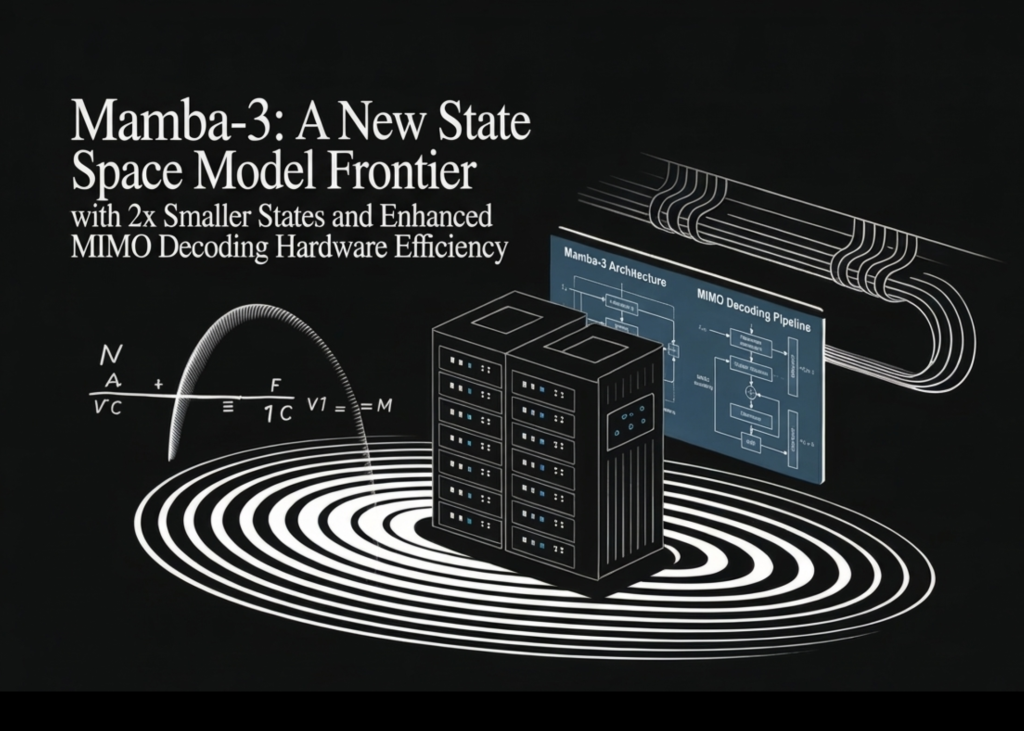

Meet Mamba-3: A New State Space Model with 2x Smaller States and Improved MIMO Decoding Efficiency

The scaling of inference-time compute has become a key driver of Large Language Model (LLM) performance, shifting architectural focus toward inference efficiency alongside model quality. Although Transformer-based architectures remain the standard, their quadratic computational complexity and linear memory requirements create significant deployment bottlenecks. A team of researchers from Carnegie Mellon University (CMU), Princeton University, Together AI, and Cartesia AI has introduced Mamba-3, a model that addresses these constraints with an ‘inference-first’ design.

Mamba-3 builds on the State Space Model (SSM) framework, introducing three methodological updates: exponential-trapezoidal discretization, complex-valued state updates, and a Multi-Input Multi-Output (MIMO) formulation.

1. Exponential-Trapezoidal Discretization

State space models are continuous-time systems that must be discretized to process discrete sequences. Previous iterations such as Mamba-1 and Mamba-2 used a first-order heuristic known as ‘exponential-Euler’ discretization. Mamba-3 replaces this with exponential-trapezoidal discretization, which provides a second-order accurate approximation of the state-input integral.

Technically, this update changes the discrete recurrence from a two-term update to a three-term update:

$$h_{t} = e^{\Delta_{t}A_{t}}h_{t-1} + (1-\lambda_{t})\Delta_{t}e^{\Delta_{t}A_{t}}B_{t-1}x_{t-1} + \lambda_{t}\Delta_{t}B_{t}x_{t}$$

This formula is equivalent to applying a data-dependent, width-2 convolution to the state input $B_{t}x_{t}$ within the core recurrence. In empirical testing, this implicit convolution, combined with learnable B and C biases, allows Mamba-3 to function effectively without the external short causal convolution that recurrent models usually require.
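The three-term update and its reading as a width-2 convolution can be sketched for a scalar state. This is a minimal illustration rather than the Mamba-3 kernel: the function names, the scalar state, and the zero initial state are assumptions made for brevity. Setting `lam = 1` for every step drops the $B_{t-1}x_{t-1}$ term and leaves a two-term update.

```python
import math

def exp_trapezoidal_step(h_prev, bx_prev, bx_t, a_t, dt, lam):
    """One three-term update for a scalar state (hypothetical helper).

    h_t = e^{dt*a} h_{t-1} + (1 - lam) dt e^{dt*a} (B x)_{t-1} + lam dt (B x)_t
    """
    decay = math.exp(dt * a_t)
    return decay * h_prev + (1.0 - lam) * dt * decay * bx_prev + lam * dt * bx_t

def run_recurrence(xs, bs, a_s, dts, lams):
    """Run the recurrence over a sequence, starting from h = 0 (assumed)."""
    h, bx_prev, states = 0.0, 0.0, []
    for x, b, a, dt, lam in zip(xs, bs, a_s, dts, lams):
        bx = b * x
        h = exp_trapezoidal_step(h, bx_prev, bx, a, dt, lam)
        bx_prev = bx
        states.append(h)
    return states

def run_as_width2_conv(xs, bs, a_s, dts, lams):
    """Same computation, written as a data-dependent width-2 convolution
    over the state input B_t x_t followed by a plain two-term recurrence."""
    bx = [b * x for b, x in zip(bs, xs)]
    h, states = 0.0, []
    for t, (a, dt, lam) in enumerate(zip(a_s, dts, lams)):
        decay = math.exp(dt * a)
        prev = bx[t - 1] if t > 0 else 0.0
        # Two taps: the current input and the previous input, with
        # weights that depend on the data through dt, lam and decay.
        u = lam * dt * bx[t] + (1.0 - lam) * dt * decay * prev
        h = decay * h + u
        states.append(h)
    return states
```

Both functions return the same states for the same inputs, which is the sense in which the extra term acts as a convolution inside the recurrence.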

2. Complex-Valued State Space Models and the ‘RoPE Trick’

A limitation of real-valued linear models is their inability to solve ‘state-tracking’ tasks, such as determining the parity of a bit sequence. This failure stems from restricting the eigenvalues of the transition matrix to real numbers, which cannot represent the ‘rotational’ dynamics required for such tasks.

Mamba-3 incorporates complex-valued SSMs to solve this. The research team established a theoretical equivalence between discretized complex SSMs and real-valued SSMs that apply data-dependent Rotary Positional Embeddings (RoPE) to the B and C projections.

Using this ‘RoPE trick,’ the model applies aggregated data-dependent rotations across time steps. This allows Mamba-3 to solve synthetic tasks such as Parity and Modular Arithmetic, where Mamba-2 and real-valued variants perform no better than random guessing.
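A toy example helps show why rotations matter for parity. The sketch below is not the Mamba-3 parameterization; it is an assumed minimal setup in which each input bit rotates a unit-norm state by an angle of π times the bit. The same answer is computed three ways: with a complex scalar state, with a real 2D state and a rotation matrix, and by accumulating the angles first and rotating once, which mirrors the idea of aggregated data-dependent rotations.

```python
import cmath
import math

def parity_complex(bits):
    """Complex scalar state: multiply by e^{i*pi*bit} at every step."""
    h = 1 + 0j
    for b in bits:
        h *= cmath.exp(1j * math.pi * b)
    # h ends near +1 for an even number of ones, near -1 for an odd number.
    return 0 if h.real > 0 else 1

def parity_real_rotation(bits):
    """Equivalent real-valued form: a 2D state and a 2x2 rotation per step."""
    h0, h1 = 1.0, 0.0
    for b in bits:
        theta = math.pi * b
        c, s = math.cos(theta), math.sin(theta)
        h0, h1 = c * h0 - s * h1, s * h0 + c * h1
    return 0 if h0 > 0 else 1

def parity_cumulative_angle(bits):
    """Accumulate the data-dependent angles, then apply one rotation."""
    total_angle = sum(math.pi * b for b in bits)
    return 0 if math.cos(total_angle) > 0 else 1

bits = [1, 0, 1, 1, 0, 1]
print(parity_complex(bits), parity_real_rotation(bits), parity_cumulative_angle(bits))
```

A state that can only be scaled by a positive real factor never changes sign, so it has no way to flip back and forth as ones arrive; the rotation supplies exactly that flip.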

3. Multi-Input, Multi-Output (MIMO) Formulation

To address the hardware inefficiency of memory-bound decoding, Mamba-3 switches from a Single-Input, Single-Output (SISO) recurrence to a Multi-Input, Multi-Output (MIMO) structure.

For standard SSM decoding, the arithmetic intensity is about 2.5 ops per byte, well below the compute-bound regime of modern GPUs such as the H100. MIMO increases the rank R of the input and output projections ($B_{t} \in \mathbb{R}^{N \times R}$ and $x_{t} \in \mathbb{R}^{P \times R}$), converting the state update from an outer product into a matrix-matrix multiplication.

This change increases decoding FLOPs by up to 4x compared to Mamba-2 at a fixed state size. Because the additional computation overlaps with the memory I/O already required to update the state, MIMO improves modeling quality and perplexity while maintaining similar wall-clock decode latency.
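The shift from an outer product to a matrix product can be sketched with plain nested lists. Everything here is illustrative: the function names, the scalar decay `alpha`, the 2-byte element size, and the FLOP and byte counts are simplifying assumptions that cover only the input term and the state read and write, not a full decode step.

```python
def mimo_state_update(h, alpha, B, X):
    """Return alpha * h + B @ X^T for h: N x P, B: N x R, X: P x R.

    With R = 1 the product B @ X^T is an outer product (the SISO case).
    """
    N, P, R = len(h), len(h[0]), len(B[0])
    return [
        [alpha * h[n][p] + sum(B[n][r] * X[p][r] for r in range(R))
         for p in range(P)]
        for n in range(N)
    ]

def input_term_flops(N, P, R):
    """Multiply-adds for B @ X^T, counted as 2 FLOPs each (assumed convention)."""
    return 2 * N * P * R

def state_bytes_moved(N, P, bytes_per_elem=2):
    """Bytes to read and write the N x P state once; independent of R.

    bytes_per_elem=2 assumes a 16-bit state, purely for illustration.
    """
    return 2 * N * P * bytes_per_elem

N, P = 64, 64
for R in (1, 4):
    ratio = input_term_flops(N, P, R) / state_bytes_moved(N, P)
    print(f"R={R}: input-term FLOPs per state byte = {ratio}")
```

Under these assumptions the state traffic stays fixed while the work done per byte grows linearly with R, which is why the extra computation can hide behind memory access that was already required.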

Architecture and Normalization

The Mamba-3 block follows a Llama-style layout, alternating with SwiGLU blocks. Key refinements include:

- BC/QK Normalization: RMS normalization is applied to the B and C projections, mirroring QKNorm in Transformers. This stabilizes training and allows the removal of the post-gate RMSNorm used in previous versions.

- Head-Specific Biases: Learnable, channel-wise biases are added to the B and C components after normalization to induce convolution-like behavior.

- Hybrid Integration: When applied to hybrid architectures (linear layers interleaved with self-attention), adding a pre-gate, grouped RMSNorm was found to improve length generalization in retrieval tasks.
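The first two refinements, normalization followed by a learnable bias on the B and C projections, can be sketched on a single vector. This is a minimal sketch under assumptions: the helper names, the `eps` value, and the per-channel `weight` are illustrative and do not come from the Mamba-3 code.

```python
import math

def rms_norm(v, weight, eps=1e-6):
    """Scale v to unit root-mean-square, then apply a per-channel weight."""
    rms = math.sqrt(sum(x * x for x in v) / len(v) + eps)
    return [w * x / rms for w, x in zip(weight, v)]

def normalize_and_bias(proj, weight, bias):
    """RMS-normalize a B or C projection, then add a channel-wise bias."""
    return [y + b for y, b in zip(rms_norm(proj, weight), bias)]

b_proj = [3.0, 4.0]
print(normalize_and_bias(b_proj, weight=[1.0, 1.0], bias=[0.1, -0.1]))
```

Because the bias is added after normalization, it is not rescaled away, so the projection keeps a fixed, input-independent component even when the data-dependent part is small.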

Results and Efficiency

Evaluations were performed on the FineWeb-Edu dataset across four model scales (180M to 1.5B).

- Downstream Performance: At the 1.5B scale, the Mamba-3 SISO variant outperforms Mamba-2 and Gated DeltaNet (GDN). The MIMO variant (R=4) further improves average downstream accuracy by 1.2 points over the SISO baseline.

- Pareto Frontier: Mamba-3 achieves pre-training perplexity comparable to Mamba-2 while using only half the state size (e.g., Mamba-3 with state size 64 matches Mamba-2 with state size 128).

- Kernel Performance: Optimized Triton (for prefill) and CuTe DSL (for decode) kernels ensure that the additional mathematical components remain lightweight. Mamba-3 SISO kernels exhibit lower latency than the released Mamba-2 and GDN kernels at standard BF16 settings.

| Model (1.5B) | Avg. Downstream Acc. (%) ↑ | FW-Edu Ppl ↓ |
| --- | --- | --- |
| Transformer | 55.4 | 10.51 |
| Mamba-2 | 55.7 | 10.47 |
| Mamba-3 SISO | 56.4 | 10.35 |
| Mamba-3 MIMO (R=4) | 57.6 | 10.24 |

Mamba-3 shows that fundamental refinements to the state space model formulation can bridge the gap between theoretical sub-quadratic efficiency and practical modeling capability.
