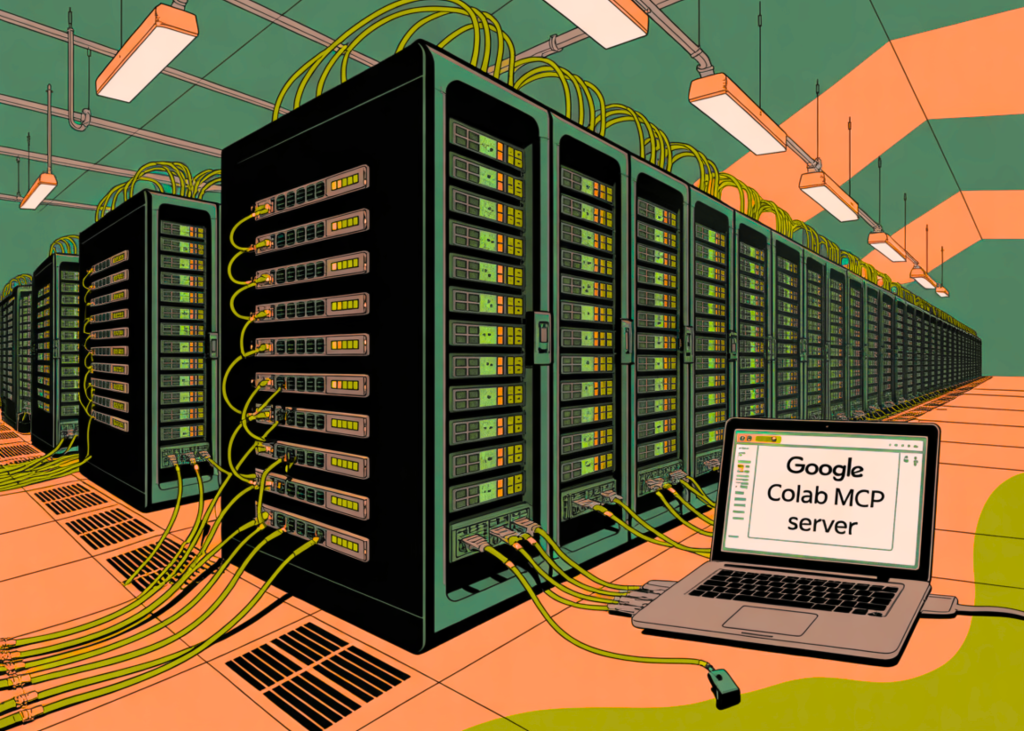

Google Colab Now Has an Open-Source MCP (Model Context Protocol) Server: Use Colab Runtimes and GPUs from any local AI agent

Google has officially released the Colab MCP Server, an implementation of the Model Context Protocol (MCP) that allows AI agents to interact directly with the Google Colab environment. This integration goes beyond simple code generation by giving agents programmatic access to create, modify, and execute Python code within cloud-hosted Jupyter notebooks.

This represents a shift from manual code execution to ‘agentic’ orchestration. By adopting the MCP standard, Google allows any compatible AI client (including Anthropic’s Claude Code, the Gemini CLI, or custom-built orchestration frameworks) to treat a Colab notebook as a remote runtime.

Understanding the Model Context Protocol (MCP)

The Model Context Protocol is an open standard designed to solve the ‘silo’ problem in AI development. Traditionally, AI models have been isolated from the developer’s tools. To close this gap, developers had to write a custom integration for every tool or manually copy and paste data between a chat interface and the IDE.

MCP provides a universal interface (typically using JSON-RPC) that allows ‘Clients’ (the AI agent) to connect to ‘Servers’ (a tool or data source). By releasing the Colab MCP server, Google has exposed the functions of its notebook environment as a standardized set of tools that an LLM can ‘call’ autonomously.
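To make the client-to-server call concrete, here is a minimal sketch of what a tool invocation looks like on the wire. The `tools/call` method and the `name`/`arguments` parameters come from the MCP specification; the `code` argument name is an assumption for illustration, since the exact input schema of the Colab tool is not described here.

```python
import json
from itertools import count

# JSON-RPC requires each request to carry an id so responses can be matched.
_request_ids = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# `execute_code` is the tool named in this article; the "code" key is assumed.
message = make_tool_call("execute_code", {"code": "print(1 + 1)"})
print(message)
```

In practice an MCP client library builds and sends these messages for you; the point is only that every tool call is a small, structured request rather than free-form text.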

Technical Architecture: Local-to-Cloud Bridge

The Colab MCP server acts as a bridge. While the AI agent and the MCP server usually run locally on the developer’s machine, the actual computation takes place on Google Colab’s cloud infrastructure.

When a developer issues a command to an MCP-compatible agent, the workflow follows a specific technical path:

- Instruction: The user prompts the agent (e.g., ‘Parse this CSV and generate a regression plot’).

- Tool Selection: The agent determines that it needs to use the Colab MCP tools.

- API Interaction: The server communicates with the Google Colab API to provision a runtime or open an existing `.ipynb` file.

- Execution: The agent sends Python code to the server, which runs it on the Colab kernel.

- State Feedback: Results (stdout, errors, or rich media such as charts) are sent back through the MCP server to the agent, allowing for iterative debugging.
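The execute-and-feed-back loop in the steps above can be sketched locally. This is an illustration under stated assumptions, not the server's implementation: `FakeKernel` is a hypothetical in-process stand-in for the remote Colab kernel, and the list of attempts is scripted, whereas a real agent would generate each retry from the error it received.

```python
import contextlib
import io
import traceback


class FakeKernel:
    """Hypothetical local stand-in for a remote Colab kernel."""

    def __init__(self):
        self.namespace = {}

    def run(self, code: str) -> dict:
        """Execute code and return stdout plus any traceback as a result dict."""
        stdout = io.StringIO()
        try:
            with contextlib.redirect_stdout(stdout):
                exec(code, self.namespace)
        except Exception:
            return {"ok": False, "stdout": stdout.getvalue(),
                    "error": traceback.format_exc()}
        return {"ok": True, "stdout": stdout.getvalue(), "error": None}


def run_until_success(kernel: FakeKernel, attempts: list) -> list:
    """Run candidate snippets in order, stopping at the first clean run."""
    history = []
    for code in attempts:
        result = kernel.run(code)
        history.append(result)
        if result["ok"]:
            break
    return history


kernel = FakeKernel()
history = run_until_success(kernel, [
    "print(totl)",               # first attempt contains a typo
    "total = 3\nprint(total)",   # corrected attempt
])
for step in history:
    print(step["ok"], step["stdout"].strip())
```

The failed attempt returns its traceback instead of raising, which is what lets an agent read the error and try again.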

Core Capabilities for AI Devs

The colab-mcp implementation provides a specific set of tools that agents use to manage the environment. For devs, understanding these primitives is essential for building custom workflows.

- Notebook Orchestration: Agents can use the notebook tool to generate a new environment from scratch. This includes the ability to structure the document using Markdown cells for text and Code cells for logic.

- Real-Time Code Execution: By using the `execute_code` tool, the agent can run Python snippets. Unlike a local terminal, this execution takes place within the Colab environment, using Google’s backend compute and pre-configured deep learning libraries.

- Dynamic Dependency Management: If a task requires a specific library such as `tensorflow-probability` or `plotly`, the agent can programmatically run `pip install` commands. This allows the agent to configure its own environment based on task requirements.

- Persistent State Management: Because the execution takes place in a notebook, the state is persistent. An agent can define a variable in one step, check its value in the next, and use that value to inform subsequent logic.
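Two of the capabilities above, dependency management and persistent state, can be illustrated with a short local sketch. The helper names are hypothetical and this is not how colab-mcp is implemented: a shared dictionary plays the role of the notebook kernel's state, and the dependency check only builds the `pip install` line an agent might send rather than running it.

```python
import importlib.util


def missing_packages(requirements: dict) -> list:
    """Given {import name: pip package name}, list packages not importable here."""
    return [pip_name for module, pip_name in requirements.items()
            if importlib.util.find_spec(module) is None]


def pip_install_command(requirements: dict):
    """Build the `pip install` line for missing packages, or None if all exist."""
    missing = missing_packages(requirements)
    return "pip install " + " ".join(missing) if missing else None


# The import name and the pip name can differ, hence the mapping.
needed = {"tensorflow_probability": "tensorflow-probability", "plotly": "plotly"}
print(pip_install_command(needed))

# Persistent state: each exec() call acts like a separate cell run
# against the same kernel namespace.
kernel_state = {}
exec("samples = [2.0, 4.0, 6.0]", kernel_state)           # step 1: define
exec("mean = sum(samples) / len(samples)", kernel_state)  # step 2: reuse
print(kernel_state["mean"])
```

Because the second step can read `samples` from the first, the agent does not need to resend earlier code on every call.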

Setup and Usage

The server is available in the googlecolab/colab-mcp repository. Developers can run the server using `uvx` or `npx`, which handles running the MCP server as a background process.

For devs using Claude Code or other CLI-based agents, configuration usually involves adding the Colab server to a config.json file. Once connected, the agent’s ‘system prompt’ is updated with the Colab tools’ capabilities, allowing it to reason about when and how to use the cloud runtime.
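As a rough sketch of what such a config entry might contain, the snippet below builds one in Python and prints it as JSON. The `mcpServers` layout follows the convention used by several MCP clients, but the server label, the key names your client expects, and the `uvx` argument shown here are all assumptions; check the repository README and your client's documentation for the exact values.

```python
import json

# Assumed entry: launch the server with uvx directly from the repository.
colab_server_entry = {
    "command": "uvx",
    "args": ["git+https://github.com/googlecolab/colab-mcp"],  # assumed argument
}

# "colab-mcp" is just a label chosen for this example.
config = {"mcpServers": {"colab-mcp": colab_server_entry}}

config_text = json.dumps(config, indent=2)
print(config_text)
```

Generating the entry from code rather than editing the file by hand makes it easier to merge into an existing config without breaking its JSON syntax.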
