Why QA Teams Should Lead the Shift

Artificial intelligence is no longer a futuristic concept or an experimental technology. By 2026, AI has become embedded in core business operations, powering decisions about hiring, finance, healthcare, customer experience, and more.

This shift brings an important consequence: AI risk is now business risk.

For Quality Engineering teams, especially QA leaders, this marks a turning point. The role is evolving from assuring functionality and performance to assuring the trust, security, and compliance of intelligent systems.

From Experimentation to Enforcement

For years, organizations approached AI with curiosity—running pilots, proofs of concept, and isolated use cases. That phase is over.

2026 is the year AI regulation moves into active enforcement across regions. Frameworks such as the EU AI Act and evolving global compliance standards now require organizations to demonstrate:

- Clear governance structures

- Risk assessment processes

- Auditability of AI decisions

- Continuous monitoring programs

It is not enough to say “We use AI responsibly.”

Organizations must now prove it—with evidence.

AI Governance is Now Business Governance

One of the biggest shifts in thinking is that AI governance can no longer exist in isolation.

It should be closely integrated with:

- Enterprise risk management

- Cybersecurity frameworks

- Data governance policies

- Vendor and third-party risk management

AI systems interact with multiple layers of business architecture, making governance a cross-functional responsibility.

For QA teams, this means working closely with:

- Security teams

- Data engineering teams

- Compliance and legal departments

Testing is no longer just about applications; it now spans entire ecosystems.

Understanding the New Dimensions of AI Risk

Unlike traditional software, AI presents complex and multifaceted risks:

- Bias and fairness issues → affecting real-world decisions

- Data privacy risks → arising from large-scale data use

- Hallucinating models → producing incorrect or fabricated outputs

- Non-compliance → exposing organizations to regulatory penalties

Each of these risks requires a different validation strategy.

Traditional test cases alone are not enough.

QA must evolve to include:

- Behavioral testing

- Ethical and fairness validation

- Risk-based testing approaches
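To make ethical and fairness validation more concrete, here is a minimal Python sketch of one common fairness check, demographic parity difference: the gap in favourable-outcome rates between groups. The function names, the 0.1 threshold, and the sample data are illustrative assumptions, not values taken from the EU AI Act or any other standard, and demographic parity is only one of several fairness metrics a team might choose.

```python
from collections import defaultdict


def demographic_parity_difference(predictions, groups):
    """Return (largest gap in positive-prediction rate between groups, per-group rates).

    predictions: iterable of 0/1 model decisions (1 = favourable outcome)
    groups: iterable of group labels, aligned with predictions
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


def check_fairness(predictions, groups, max_gap=0.1):
    # max_gap is a hypothetical threshold, not a legal or regulatory value
    gap, rates = demographic_parity_difference(predictions, groups)
    return {"gap": gap, "rates": rates, "passed": gap <= max_gap}


if __name__ == "__main__":
    preds = [1, 0, 1, 1, 0, 1, 0, 0]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(check_fairness(preds, groups))
```

On this toy data, group A receives a favourable outcome 75% of the time and group B 25% of the time, so the 0.5 gap fails the check.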

Compliance Is Now Continuous

Compliance in the AI era is not a one-time certification exercise—it’s an ongoing process.

Organizations are expected to implement:

- Continuous monitoring of AI systems

- Regular risk assessments

- Lifecycle management from training to deployment

- Real-time anomaly detection

This introduces a new paradigm:

“Compliance as a continuous engineering practice.”

QA teams are uniquely positioned to build the automation, monitoring, and validation pipelines this requires.
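Continuous monitoring and real-time anomaly detection can start very simply. The Python sketch below flags a metric value (for example, a daily accuracy or hallucination rate) that sits more than a chosen number of standard deviations from a rolling baseline. The class name, window size, and threshold are hypothetical; production systems typically rely on more robust drift statistics.

```python
import statistics
from collections import deque


class MetricMonitor:
    """Flag values that deviate sharply from a rolling baseline (z-score test).

    window: number of recent observations kept as the baseline
    z_threshold: how many standard deviations count as an anomaly
    """

    def __init__(self, window=30, z_threshold=3.0):
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold

    def observe(self, value):
        """Record a new value and return True if it is anomalous."""
        anomalous = False
        # Need at least two points before a standard deviation exists
        if len(self.history) >= 2:
            baseline = list(self.history)
            mean = statistics.mean(baseline)
            stdev = statistics.stdev(baseline)
            if stdev > 0:
                anomalous = abs(value - mean) / stdev > self.z_threshold
            else:
                # Perfectly flat baseline: any change at all is flagged
                anomalous = value != mean
        self.history.append(value)
        return anomalous
```

This sketch adds every observation, anomalous or not, to the baseline, so a lasting shift eventually becomes the new normal. Excluding flagged values is the alternative, at the cost of never adapting to a legitimate change.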

Auditability and Traceability Are Mandatory

One of the strongest requirements in 2026 is traceability.

Organizations should be able to answer:

- Why did the AI make this decision?

- What data was used?

- What version of the model was used?

- What controls were in place?

This requires:

- Detailed documentation

- Audit logging and traceability mechanisms

- Transparent, explainable model behavior

For QA, this means validating not only the outputs but also the decision explanations and the completeness of the logs behind them.
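One way QA can check that these questions are answerable is to log a structured record for every decision and validate it automatically. The Python sketch below assumes a hypothetical audit schema (the field names are illustrative, not drawn from any regulation) and stores a SHA-256 fingerprint of the input rather than the raw data.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

# Hypothetical audit schema: field names are illustrative, not a standard.
REQUIRED_FIELDS = ("decision_id", "model_version", "input_hash",
                   "output", "explanation", "controls", "timestamp")


@dataclass
class DecisionRecord:
    decision_id: str
    model_version: str   # which model version produced the decision
    input_hash: str      # fingerprint of the input, so raw data need not be stored
    output: str
    explanation: str     # e.g. main contributing factors or a rationale
    controls: list = field(default_factory=list)  # controls active at decision time
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def fingerprint(payload: dict) -> str:
    """Stable SHA-256 hash of the input payload (keys sorted for determinism)."""
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def validate_record(record: dict) -> list:
    """Return required fields that are missing or empty (an empty controls
    list also fails); an empty result means the record passes."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]
```

A QA pipeline could, for instance, run `validate_record` over sampled production logs and fail a release when any required field is missing.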

Privacy by Design Is No Longer Optional

Privacy is now a basic requirement for AI systems.

It should be embedded across:

- Data collection pipelines

- Model training processes

- Deployment architectures

“Privacy by design” ensures that systems are compliant by default, not as an afterthought.

QA teams must ensure:

- Data minimization practices

- Consent management

- Data masking and anonymization
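These three controls can be sketched as a single preprocessing step. In this minimal Python example, the allowed-field list, the consent flag, and the record layout are hypothetical assumptions. The keyed hash (HMAC-SHA256) is one common pseudonymization technique; on its own it does not amount to full anonymization.

```python
import hashlib
import hmac

# Hypothetical policy: only these fields may reach the training pipeline.
ALLOWED_FIELDS = {"customer_id", "age_band", "region", "email"}


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    Without the key, the mapping cannot be recomputed. This is
    pseudonymization, not anonymization: the key holder can still link records.
    """
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep the first character of the local part and the domain."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}"


def prepare_record(record: dict, key: bytes):
    """Apply consent check, data minimization, and masking to one record.

    Returns None when the data subject has not consented.
    """
    if not record.get("consent", False):
        return None  # consent management: drop records without consent
    # Data minimization: keep only fields the policy allows
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if "customer_id" in minimized:
        minimized["customer_id"] = pseudonymize(str(minimized["customer_id"]), key)
    if "email" in minimized:
        minimized["email"] = mask_email(minimized["email"])
    return minimized
```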

The Cost of Getting It Wrong

The consequences of AI mismanagement are no longer theoretical.

Organizations are now facing:

- Regulatory sanctions

- Financial loss

- Damage to reputation

- Loss of customer trust

As AI systems become more autonomous, the risks grow rapidly—and so does the impact.

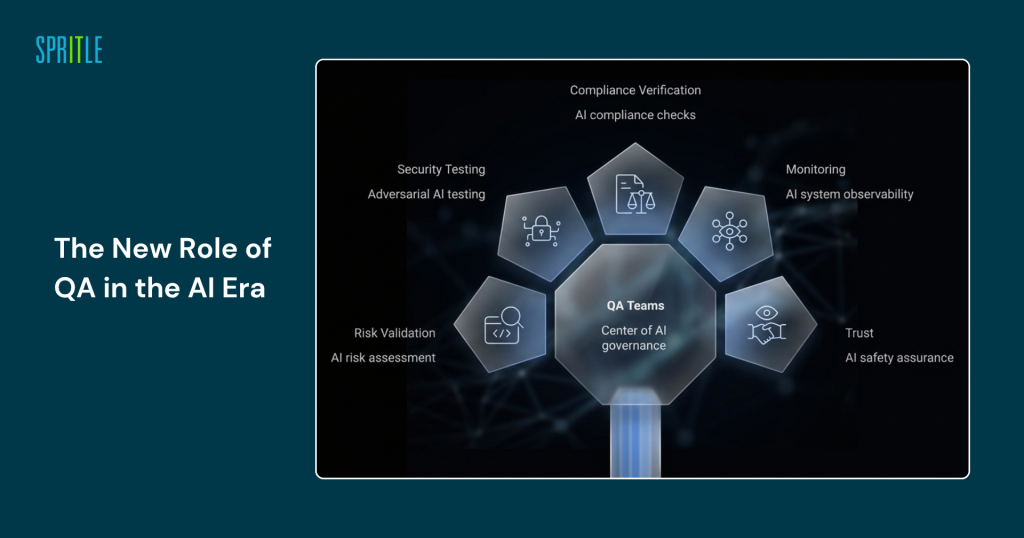

The New Role of QA in the Age of AI

This change puts QA teams at the center of AI governance.

The role expands to include:

- AI risk validation

- Security and adversarial testing

- Compliance assurance

- Monitoring and observability

- Trust and safety assurance

In essence, QA evolves into a guardian of responsible AI.

Final Thoughts

AI in 2026 isn’t just about innovation—it’s about accountability.

The organizations that succeed won’t be the ones that adopt AI the fastest, but the ones that embrace it safely, responsibly, and transparently.

For QA leaders, this is an opportunity to go beyond traditional boundaries and play a strategic role in shaping the future of technology.

Because in the AI era,

quality is no longer just about accuracy. It’s about trust.