AI to help researchers see the big picture in cell biology | MIT News

Studying gene expression in cancer patient cells can help clinical biologists understand the origins of cancer and predict the success of different treatments. But cells are complex and contain many layers, so the way a biologist makes measurements affects what data they can get. For example, measuring proteins in a cell can yield different information about cancer outcomes than measuring gene expression or cell morphology.

Each type of measurement captures only part of the information. To capture complete information about a cell’s state, scientists often must make multiple measurements using different techniques and analyze them one at a time. Machine learning methods can speed up the process, but existing methods lump all the information from each measurement method together, making it difficult to figure out which data comes from which part of the cell.

To overcome this problem, researchers at the Broad Institute of MIT and Harvard and the ETH Zurich/Paul Scherrer Institute (PSI) developed an artificial intelligence-driven framework that learns what information about cell state is shared across measurement methods and what information is unique to a particular measurement type.

By identifying which information comes from which parts of the cell, this method provides a more complete view of the cell’s state, making it easier for biologists to understand the cell’s interactions. This can help scientists understand disease mechanisms and track the progression of cancer, neurodegenerative disorders such as Alzheimer’s, and metabolic diseases such as diabetes.

“When we study cells, one measurement is often not enough, so scientists develop new technologies to measure various aspects of cells. Although we have many ways to look at the cell, at the end of the day we have a single cell state. By combining information from all these measurement methods together in a smart way, we can have a complete picture of the state of the cell,” said Zhang, formerly of the Broad Institute of MIT and Harvard and now a team leader at AITHYRA in Vienna, Austria.

Zhang is joined on the paper by G.V. Shivashankar, a professor in the Department of Health Sciences and Technology at ETH Zurich and head of the Multiscale Bioimaging Laboratory at PSI; and senior author Caroline Uhler, a professor in the Department of Electrical Engineering and Computer Science (EECS) and the Institute for Data, Systems, and Society (IDSS) at MIT, a member of MIT’s Laboratory for Information and Decision Systems (LIDS), and director of the Eric and Wendy Schmidt Center at the Broad Institute. The study appears today in Nature Computational Science.

Combining multiple measurements

There are many tools scientists can use to capture information about the state of a cell. For example, they can measure RNA to see if a cell is growing, or they can measure chromatin morphology to see if a cell is responding to external physical or chemical signals.

“When scientists do a multimodal analysis, they collect information using many measurement methods and combine it to better understand the underlying state of the cell. Some information is captured by only one method, while other information is shared by all methods. To fully understand what is happening inside the cell, it is important to know where the information comes from,” said Shivashankar.

Usually, the only way for scientists to sort this out is to run many individual experiments and compare the results. This slow and cumbersome process limits the amount of information they can gather.

In the new work, the researchers developed a machine learning framework that explicitly learns what information overlaps between different measurement methods, and what information is unique to one method and not captured by the others.

“As a user, you can just input your cell data and it automatically tells you what data is shared and what data is specific to a particular method,” Zhang said.

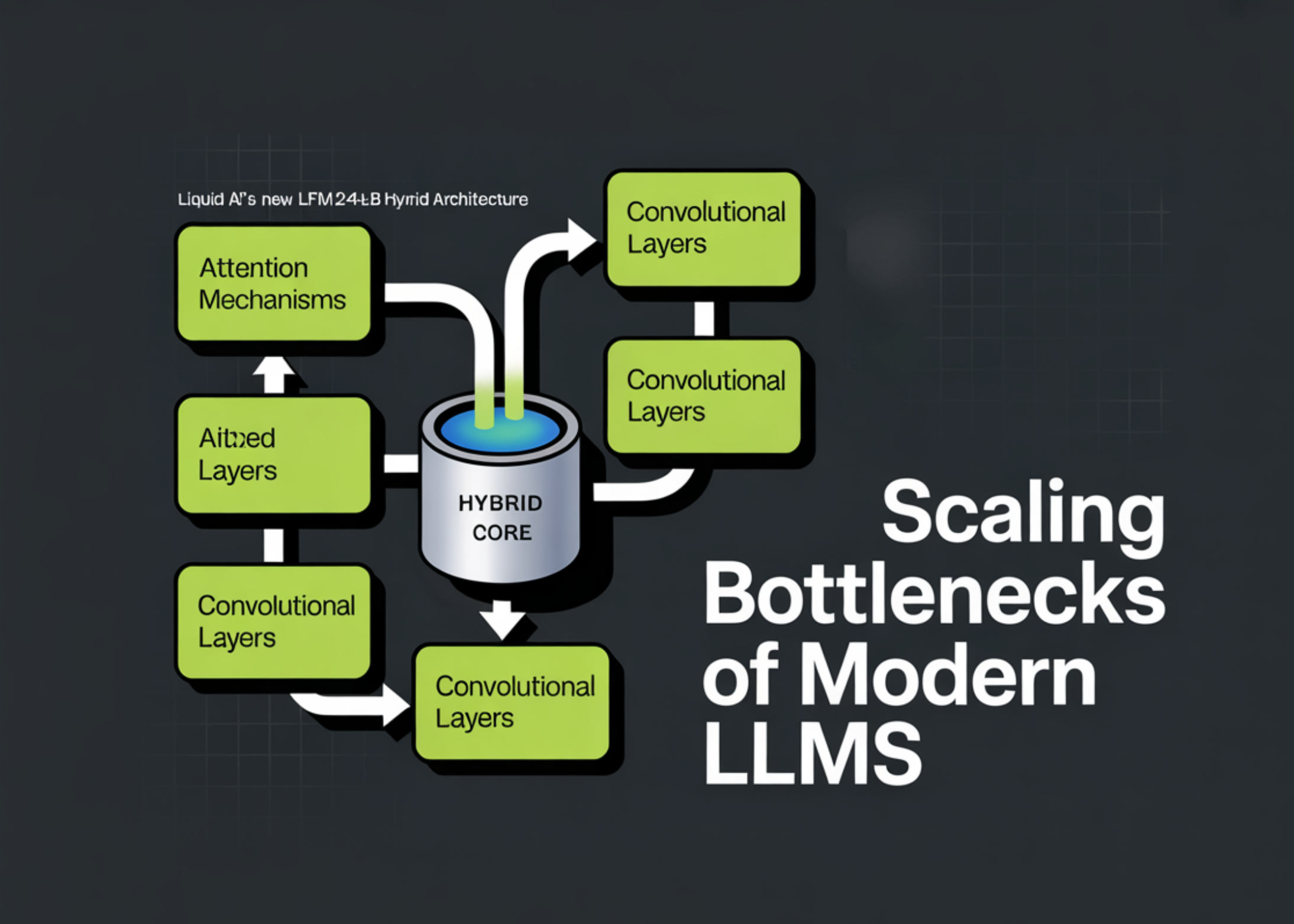

To build this framework, the researchers rethought the way machine learning models are typically designed to capture and interpret multiple kinds of cellular measurements.

Typically these models, known as autoencoders, are used one per measurement method, and each model learns a separate representation of the data captured by that method. The representation is a compressed version of the input data that discards any irrelevant information.

The MIT method has a shared representation space where data that overlaps between multiple measurement methods is encoded, and separate spaces where the data unique to each method is encoded.

Essentially, one can think of it as a Venn diagram of cell data.
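The layout described above can be sketched in a few lines of Python. This is only an illustrative toy, not the researchers' implementation: the class `ModalityAutoencoder`, the linear encoders with random weights, the dimensions, and the alignment term in `total_loss` are all assumptions made for this sketch. The article does not say how the shared space is enforced; pulling the two shared codes toward each other is just one common choice, and on its own it would not guarantee a clean separation.

```python
import random

def make_matrix(rows, cols, rng):
    """Random weight matrix stored as a list of rows (hypothetical helper)."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(weights, x):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((u - v) ** 2 for u, v in zip(a, b)) / len(a)

class ModalityAutoencoder:
    """Toy linear autoencoder whose latent code is split into a shared
    part and a modality-specific (private) part."""

    def __init__(self, input_dim, shared_dim, private_dim, rng):
        self.shared_dim = shared_dim
        latent_dim = shared_dim + private_dim
        self.enc = make_matrix(latent_dim, input_dim, rng)
        self.dec = make_matrix(input_dim, latent_dim, rng)

    def encode(self, x):
        z = matvec(self.enc, x)
        # First shared_dim entries: the overlap of the Venn diagram.
        # Remaining entries: information only this modality sees.
        return z[:self.shared_dim], z[self.shared_dim:]

    def decode(self, shared, private):
        return matvec(self.dec, shared + private)

def total_loss(ae_a, ae_b, x_a, x_b):
    """Reconstruction loss for each modality plus an ASSUMED alignment
    term that pulls the two shared codes of the same cell together."""
    s_a, p_a = ae_a.encode(x_a)
    s_b, p_b = ae_b.encode(x_b)
    recon = mse(ae_a.decode(s_a, p_a), x_a) + mse(ae_b.decode(s_b, p_b), x_b)
    align = mse(s_a, s_b)
    return recon + align

rng = random.Random(0)
# Two hypothetical modalities measured on the same cell.
rna = ModalityAutoencoder(input_dim=6, shared_dim=2, private_dim=3, rng=rng)
atac = ModalityAutoencoder(input_dim=4, shared_dim=2, private_dim=1, rng=rng)
x_rna = [rng.random() for _ in range(6)]
x_atac = [rng.random() for _ in range(4)]

shared_rna, private_rna = rna.encode(x_rna)
shared_atac, private_atac = atac.encode(x_atac)
loss = total_loss(rna, atac, x_rna, x_atac)
```

The weights here are untrained, so the codes carry no biological meaning. The point is only the shape of the design: both modalities write into a shared block of the same size, while each keeps a private block of its own.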

The researchers also used a special, two-step training process that helps their model handle the complexity involved in determining which data is shared across multiple measurement methods. After training, the model can identify which data is shared and which is method-specific when it is fed cell data it has never seen before.

Data classification

In evaluations on synthetic data sets, the framework correctly captured both the shared and the method-specific information. When the researchers applied their method to a real-world single-cell dataset, it comprehensively and automatically distinguished gene activity captured jointly by two measurement methods, such as transcriptomics and chromatin accessibility, while correctly identifying which information came from only one of those methods.
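Synthetic data is useful for this kind of test because the ground truth is known in advance. The article does not describe the synthetic benchmarks the researchers used, so the toy generator below is purely an assumption for illustration: `simulate_cells`, the two-feature layout, and the noise level are all hypothetical. Each simulated cell has one factor seen by both modalities and one factor seen by only one of them.

```python
import random

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of floats."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def simulate_cells(n_cells, noise=0.1, seed=0):
    """Simulate two modalities with a known shared/private structure.

    Feature 0 of each modality is a noisy copy of a shared factor;
    feature 1 is a noisy copy of a factor private to that modality.
    """
    rng = random.Random(seed)
    mod_a, mod_b = [], []
    for _ in range(n_cells):
        shared = rng.gauss(0, 1)
        only_a = rng.gauss(0, 1)
        only_b = rng.gauss(0, 1)
        mod_a.append((shared + rng.gauss(0, noise), only_a + rng.gauss(0, noise)))
        mod_b.append((shared + rng.gauss(0, noise), only_b + rng.gauss(0, noise)))
    return mod_a, mod_b

mod_a, mod_b = simulate_cells(2000)

# The shared features track each other across modalities...
shared_corr = pearson([row[0] for row in mod_a], [row[0] for row in mod_b])
# ...while the private features are statistically unrelated.
private_corr = pearson([row[1] for row in mod_a], [row[1] for row in mod_b])
```

In a benchmark of this kind, a model's shared representation would be expected to recover the shared factor and its private representations the modality-specific ones, which can be checked directly because the factors were generated by hand.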

In addition, the researchers used their method to identify which measurement method captured a specific protein marker that indicates DNA damage in cancer patients. Knowing where this information comes from can help a clinical scientist decide which method to use to measure that marker.

“There are many things that can be measured in a cell and we cannot measure them all, so we need a predictive tool. But then the question is: Which should we measure and which should we predict? Our method can answer that question,” said Uhler.

In the future, the researchers want to enable the model to provide more interpretable information about the state of the cell. They also want to run more tests to make sure it correctly separates shared from method-specific cell information, and to apply the model to a wider range of clinical questions.

“It’s not enough to just combine data from all these methods,” Uhler said. “We can learn a lot about the state of the cell if we carefully compare different approaches to understand how different parts of the cell regulate each other.”

This research was funded, in part, by the Eric and Wendy Schmidt Center at the Broad Institute, the Swiss National Science Foundation, the US National Institutes of Health, the US Office of Naval Research, AstraZeneca, the MIT-IBM Watson AI Lab, the MIT J-Clinic for Machine Learning and Health, and a Simons Investigator Award.