Yann LeCun’s New AI Paper Argues AGI Is Misdefined and Introduces Superhuman Adaptable Intelligence (SAI) Instead

What if the AI industry is optimizing for a goal that cannot be clearly defined or reliably measured? That is the central argument of a new paper by Yann LeCun and his team: Artificial General Intelligence has become an overloaded term, used inconsistently across academia and industry. The research team argues that because AGI lacks a stable operational definition, it has become a weak scientific yardstick for evaluating progress or guiding research.

Why Human Intelligence Isn’t Really ‘General’

The paper begins by challenging a common assumption behind many discussions of AGI: that human intelligence is a reasonable stand-in for ‘general’ intelligence. The research team argues that humans appear general only because we evaluate intelligence from within the distribution of tasks shaped by human biology and evolution. We are good at the kinds of tasks that have mattered over our history, such as perception, motor control, planning, and social reasoning. Outside that range, human ability is limited, and in many cases machines already surpass us. The point is not that humans are unimpressive, but that human intelligence is best understood as specialized and adaptable rather than general in any universal sense.

The Problem with Human-Centered Definitions of AGI

That distinction matters because many definitions of AGI quietly rely on a human-centered benchmark. The research team notes that there is no real consensus on what AGI means across academia or industry. Some definitions focus on doing everything a human can do. Others focus on economic value, broad task coverage, open-endedness, or the ability to learn. These definitions are not equivalent, and they do not yield clean, testable targets. The research team therefore argues that existing definitions of AGI are inadequate: they are often vague, hard to test, or rarely put to an actual test.

Switching from AGI to SAI

The paper’s alternative is Superhuman Adaptable Intelligence, or SAI. It defines SAI as intelligence that can adapt to exceed humans at any task humans can perform, while also adapting to useful tasks outside the human domain. That is a subtle but important shift. Instead of asking whether a system already matches humans on a fixed checklist of tasks, the research team asks how quickly the system can learn something new and how long it can keep adapting. In this framework, the key metric is adaptation speed: how quickly the agent acquires new skills and learns new tasks.

Why Adaptation Speed Matters More Than Static Benchmarks

This reframes the problem in engineering terms. A benchmark built on an ever-growing catalog of tasks quickly becomes unwieldy, because the space of possible skills is effectively unbounded. The research team argues that treating intelligence as a fixed set of skills is a category error. What matters most is whether the system can adapt quickly when it encounters a new context, a new objective, or a new environment. That is why the paper treats adaptability, rather than generality, as a better North Star.
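The emphasis on adaptation speed can be made concrete with a toy measurement. The sketch below is an assumption for illustration, not the paper’s metric: it takes hypothetical learning curves (pairs of training examples seen and accuracy) for several unseen tasks and reports how many examples a system needed to reach a chosen accuracy threshold, plus the fraction of tasks where it got there at all. The function names, the 0.9 threshold, and the curve data are all hypothetical.

```python
from statistics import mean
from typing import Optional


def samples_to_threshold(curve: list[tuple[int, float]], threshold: float) -> Optional[int]:
    """Return the number of examples seen when accuracy first reaches `threshold`, or None."""
    for examples_seen, accuracy in curve:
        if accuracy >= threshold:
            return examples_seen
    return None


def adaptation_summary(
    curves: dict[str, list[tuple[int, float]]], threshold: float = 0.9
) -> tuple[Optional[float], float]:
    """Hypothetical adaptation-speed summary across new tasks.

    Returns (mean examples-to-threshold over tasks that reached it,
    fraction of tasks that reached it). A lower mean suggests faster
    adaptation; a higher fraction suggests broader adaptability.
    """
    hits = [samples_to_threshold(curve, threshold) for curve in curves.values()]
    reached = [h for h in hits if h is not None]
    mean_examples = mean(reached) if reached else None
    return mean_examples, len(reached) / len(curves)


# Toy, made-up learning curves for three unseen tasks.
curves = {
    "task_a": [(10, 0.50), (100, 0.85), (1000, 0.93)],
    "task_b": [(10, 0.92)],
    "task_c": [(10, 0.30), (100, 0.40)],
}
print(adaptation_summary(curves))
```

Reporting both numbers is a design choice: a single average would reward a system that adapts quickly on a few easy tasks while never reaching the threshold on the rest.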

Specialization as a Feature, Not a Failure

The second major claim of the paper is that AI progress should not be framed as a march toward a single universal model that does everything equally well. The research team argues that specialization is not an intellectual weakness but a practical path to high performance. Humans themselves are not an exception; they are part of the evidence. The paper suggests that future AI systems will likely require internal specialization, governance, and diversity across models and methods rather than a single monolithic system. In plain terms, a single model should not be expected to handle every domain equally well just because current marketing language favors the word ‘general.’

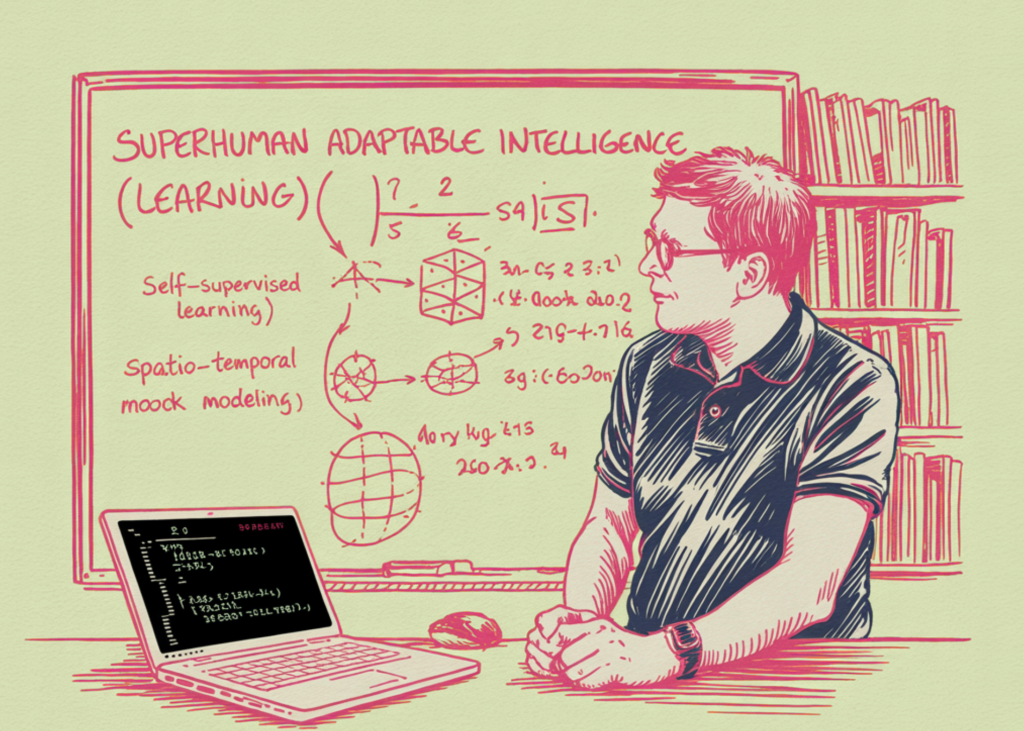

Why the Research Paper Points to Self-Supervised Learning

From there, the paper links SAI to self-supervised learning. The logic is straightforward. If the goal is to adapt quickly across a very large space of tasks, relying solely on supervised learning becomes limiting, because supervised methods require large, reliable labeled datasets. In real-world settings, that assumption often fails. The research team argues that self-supervised learning is a promising approach because it can learn structure from raw data and has already delivered strong results across domains. Importantly, they do not claim that SAI requires one specific architecture. They present self-supervised learning as a promising direction, not the final architectural answer.
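To illustrate what learning structure from raw data means in practice, here is a minimal sketch of one common self-supervised setup, masked-token prediction, in which training targets are carved out of unlabeled data rather than supplied by human annotators. It illustrates the general idea and is not the paper’s method; note that it reconstructs raw tokens, whereas the latent-prediction approaches the paper favors predict representations instead. The `[MASK]` symbol and the 15% default masking rate are assumptions borrowed from BERT-style practice.

```python
import random

MASK = "[MASK]"  # placeholder symbol; the exact token is an assumption


def make_masked_example(tokens, mask_prob=0.15, seed=None):
    """Build one self-supervised training example from unlabeled tokens.

    Returns (model_input, targets): model_input is the sequence with some
    positions replaced by MASK, and targets maps each masked position to the
    original token the model must predict. No human labels are involved.
    """
    rng = random.Random(seed)
    model_input = list(tokens)
    targets = {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            targets[i] = tok       # the "label" comes from the data itself
            model_input[i] = MASK  # hide it from the model
    return model_input, targets


raw_text = "the cat sat on the mat because it was warm".split()
model_input, targets = make_masked_example(raw_text, mask_prob=0.3, seed=1)
print(model_input)
print(targets)
```

Because every sentence of raw text can be turned into many such examples, the supply of training signal scales with unlabeled data rather than with annotation effort.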

World Models and the Limits of Low-Level Prediction

The paper also argues that strong adaptation may benefit from world models. Here the research team departs from the idea that token-level or pixel-level prediction alone is sufficient for robust intelligence in the real world. They argue that what matters is learning latent representations that capture the dynamics of the system. In that view, a world model supports simulation and planning, which in turn support zero-shot and few-shot adaptation. The paper points to latent prediction architectures such as JEPA, Dreamer 4, and Genie 2 as examples of the kinds of approaches the field should explore, while also stating that SAI does not prescribe a single architecture.
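The difference between scoring predictions in observation space and in a latent space can be shown with a toy example. In the sketch below, each observation is a hypothetical pair (position, texture): position follows simple dynamics, while texture is unpredictable noise. A hand-written encoder keeps only the position, so a predictor scored on embeddings (the JEPA-style idea of predicting the target’s representation) is not penalized for details it could never forecast, while a predictor scored on the raw observation is. This is an assumption-laden illustration: in real latent-prediction architectures the encoder is learned, and training needs safeguards against representation collapse, since an encoder that maps everything to a constant would also score zero latent loss.

```python
import random


def encoder(obs):
    """Hypothetical encoder: keeps the predictable state (position), drops texture noise.
    In a real JEPA-style model this mapping is learned, not hand-written."""
    position, _texture = obs
    return (position,)


def latent_predictor(z):
    """Hypothetical latent dynamics: the object moves one unit per step."""
    return (z[0] + 1.0,)


def pixel_predictor(obs):
    """Predicts the full next observation; it cannot know the random texture, so guesses 0."""
    position, _texture = obs
    return (position + 1.0, 0.0)


def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def make_trajectory(steps, seed=0):
    """Toy trajectory of (position, texture) observations with Gaussian texture noise."""
    rng = random.Random(seed)
    return [(float(t), rng.gauss(0.0, 1.0)) for t in range(steps)]


def mean_losses(traj):
    """Average one-step prediction loss measured in latent space vs. observation space."""
    pairs = list(zip(traj, traj[1:]))
    latent_loss = sum(
        squared_error(latent_predictor(encoder(x_t)), encoder(x_next)) for x_t, x_next in pairs
    )
    pixel_loss = sum(squared_error(pixel_predictor(x_t), x_next) for x_t, x_next in pairs)
    return latent_loss / len(pairs), pixel_loss / len(pairs)


print(mean_losses(make_trajectory(50)))
```

The zero latent loss here comes from the hand-coded encoder, not from any learning; the sketch only shows where the loss is measured and why that choice changes what the model is asked to predict.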

A Warning Against Architectural Monoculture

The research team also criticizes the current architectural homogeneity of advanced AI. They note that autoregressive LLMs and LMMs dominate mainstream AI partly because shared tooling and benchmarks create momentum. But the paper argues that this concentration narrows the search space and can slow progress. It goes on to say that autoregressive systems have well-known weaknesses, including error accumulation over long horizons, which undermines long-horizon interaction. The broader point is not that today’s large models don’t work. It is that the field should avoid treating a single successful paradigm as the ultimate template for intelligence.
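A back-of-the-envelope model helps show why error accumulation hurts long-horizon generation. Under the simplifying assumptions that each generated step independently goes wrong with probability e and that a mistake is never recovered, the probability that an n-step rollout stays fully on track is (1 - e)^n, which shrinks exponentially with n. Real systems can sometimes self-correct and their errors are not independent, so treat this as an intuition aid rather than a result reported in the paper; the function name below is hypothetical.

```python
def p_on_track(per_step_error: float, steps: int) -> float:
    """Probability that an autoregressive rollout of `steps` steps contains no error,
    assuming independent per-step errors with probability `per_step_error`
    and no recovery once an error occurs (a deliberately simplified model)."""
    if not 0.0 <= per_step_error <= 1.0:
        raise ValueError("per_step_error must be in [0, 1]")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return (1.0 - per_step_error) ** steps


# Even a 1% per-step error rate compounds quickly over long horizons.
for n in (10, 100, 1000):
    print(f"{n:>5} steps: {p_on_track(0.01, n):.3g}")
# prints 0.904, 0.366, and 4.32e-05 for 10, 100, and 1000 steps
```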

Key Takeaways

- The research paper says AGI is not a precise scientific objective: According to the research team, AGI is used inconsistently across academia and industry, making it difficult to define, measure, or use as a stable research goal.

- Human intelligence should not be taken as the definition of ‘general’ intelligence: The paper argues that humans seem general only within the range of tasks shaped by biology and evolution; outside that range, human ability is limited.

- The research team proposes Superhuman Adaptable Intelligence (SAI) as a better target: SAI is defined by the ability to adapt to exceed human performance on tasks humans can do, and to learn useful tasks outside the human domain.

- Adaptation speed matters more than static benchmark coverage: Instead of asking whether a system can already perform a fixed list of tasks, the paper focuses on how quickly it can acquire new skills and adapt to new situations.

- The research paper favors specialization, self-supervised learning, and world models over a single monolithic approach to intelligence: The research team argues that future AI systems will likely require internal specialization and robust world modeling, rather than assuming that a single universal architecture will solve everything.

Check out the Paper.