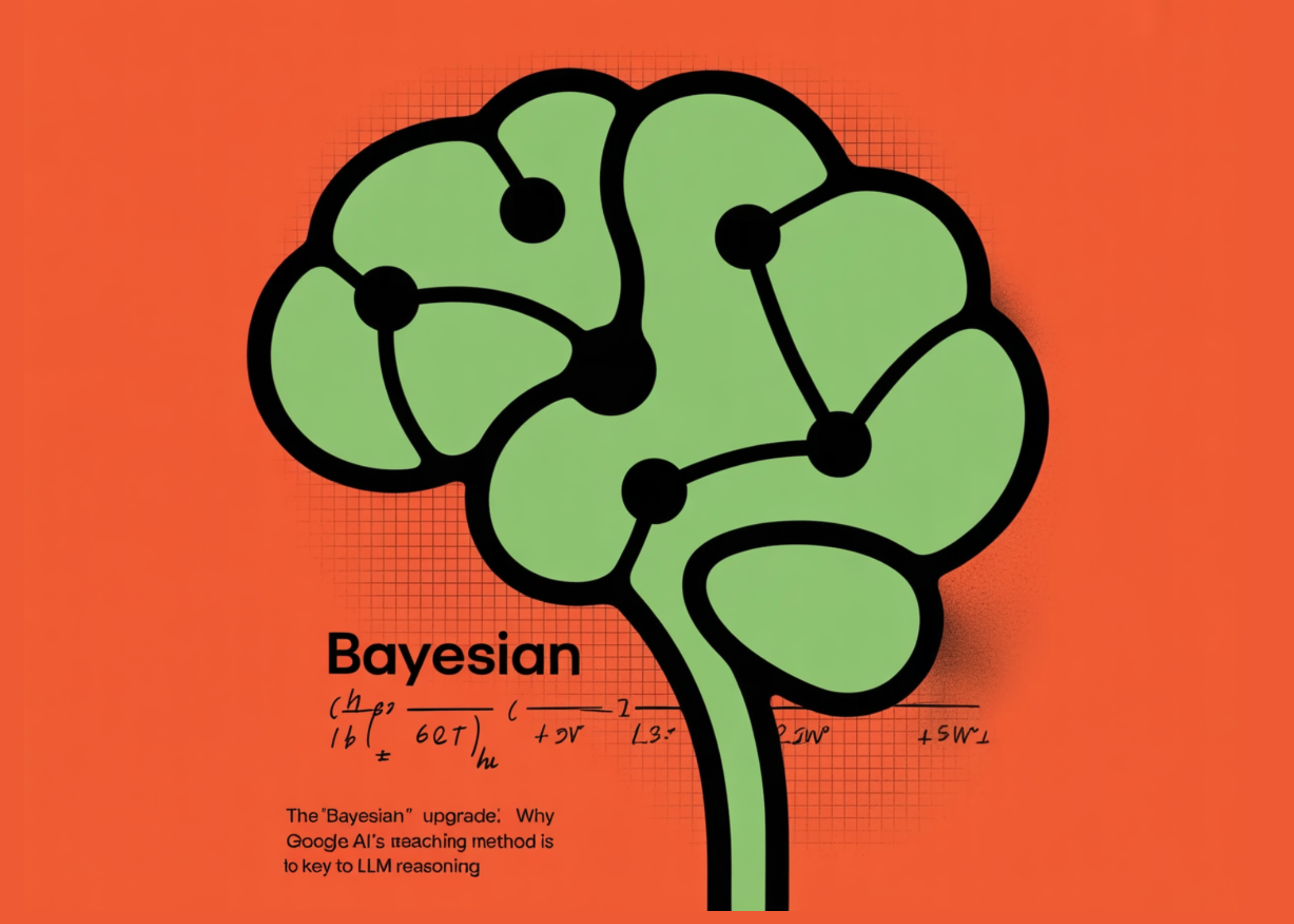

The ‘Bayesian’ Upgrade: Why Google’s New AI Teaching Method Is Key to LLM Reasoning

Large Language Models (LLMs) are remarkable mimics, but when it comes to the cold, hard logic of revising beliefs based on new evidence, they are surprisingly stubborn. A team of researchers from Google argues that the current crop of AI agents falls far short of ‘probabilistic reasoning’: the ability to maintain and update a ‘world model’ as new information comes in.

The solution? Stop trying to give them the right answers and start teaching them to guess like a mathematician.

The Problem: The ‘One-and-Done’ Plateau

While LLMs like Gemini-1.5 Pro and GPT-4.1 Mini can write code or summarize emails, they struggle as interactive agents. Think of a flight booking assistant: it needs to infer your preferences (price vs. duration) by observing which flights you choose over several rounds.

The research team found that off-the-shelf LLMs, including heavyweights such as Llama-3-70B and Qwen-2.5-32B, showed ‘little or no improvement’ after the first round of interaction. While the ‘Bayesian Assistant’ (a symbolic model that applies Bayes’ rule) becomes more accurate with every data point, standard LLMs plateau quickly, failing to adapt their internal ‘beliefs’ to the user’s specific reward function.

Meet Bayesian Teaching

The research team introduced a method called Bayesian teaching. Instead of fine-tuning the model on ‘correct’ data (what they call an Oracle Teacher), they fine-tuned it to mimic a Bayesian Assistant: a model that explicitly uses Bayes’ rule to update a probability distribution over possible user preferences.

Here is the technical breakdown:

- The Task: A five-round flight recommendation interaction. Flights are defined by features such as price, duration, and number of stops.

- The Reward Function: A vector representing the user’s preferences (e.g., a strong preference for low feature values).

- Posterior Updates: After each round, the Bayesian Assistant updates its posterior distribution based on the prior (its initial assumptions) and the likelihood (the probability that a user would choose a certain flight given a certain reward function).
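To make the posterior update concrete, here is a minimal Python sketch of a Bayesian Assistant for the flight task. It rests on assumptions the article does not specify: three features (price, duration, stops) normalized to [0, 1], reward functions drawn from a small grid of weight vectors, a uniform prior, and a softmax model of how users choose. All names (`HYPOTHESES`, `update_posterior`, `recommend`) are hypothetical; this is an illustration of Bayes’ rule, not the paper’s implementation.

```python
import itertools
import math

# Hypothetical setup (assumptions, not the paper's configuration):
# 3 features in [0, 1]; each reward weight comes from a small symmetric grid.
WEIGHT_GRID = [-1.0, -0.5, 0.0, 0.5, 1.0]
NUM_FEATURES = 3  # price, duration, stops
HYPOTHESES = list(itertools.product(WEIGHT_GRID, repeat=NUM_FEATURES))


def utility(weights, flight):
    """Linear reward: weighted sum of the flight's features."""
    return sum(w * x for w, x in zip(weights, flight))


def choice_likelihood(weights, flights, chosen, temperature=1.0):
    """P(user picks flights[chosen] | weights) under a softmax choice model."""
    scores = [utility(weights, f) / temperature for f in flights]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    return exps[chosen] / sum(exps)


def update_posterior(posterior, flights, chosen):
    """Bayes' rule: posterior is proportional to prior times likelihood."""
    unnorm = {h: p * choice_likelihood(h, flights, chosen) for h, p in posterior.items()}
    z = sum(unnorm.values())
    return {h: p / z for h, p in unnorm.items()}


def recommend(posterior, flights):
    """Recommend the flight with the highest expected utility under the posterior."""
    def expected_utility(f):
        return sum(p * utility(h, f) for h, p in posterior.items())
    return max(range(len(flights)), key=lambda i: expected_utility(flights[i]))


# Uniform prior over every candidate reward function.
posterior = {h: 1.0 / len(HYPOTHESES) for h in HYPOTHESES}

# Two observed rounds: (price, duration, stops) options and the user's pick.
rounds = [
    ([(0.2, 0.9, 0.5), (0.8, 0.1, 0.5), (0.5, 0.5, 0.0)], 0),
    ([(0.1, 0.7, 1.0), (0.9, 0.2, 0.0), (0.6, 0.4, 0.5)], 0),
]
for flights, chosen in rounds:
    posterior = update_posterior(posterior, flights, chosen)

# The user kept picking the cheapest flight, so belief shifts toward "dislikes high prices".
mean_price_weight = sum(p * h[0] for h, p in posterior.items())
print(f"posterior mean price weight: {mean_price_weight:.3f}")
```

Keeping the hypothesis space as an explicit grid makes each update exact and easy to inspect; the trade-off is that the grid grows exponentially with the number of features.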

By applying Supervised Fine-Tuning (SFT) to these Bayesian interactions, the research team forced the LLMs to adopt the process of reasoning under uncertainty, not just the final outcome.

Why ‘Educated Guesses’ Beat Correct Answers

The most counterintuitive finding of the study is that Bayesian teaching consistently outperforms Oracle teaching.

In ‘Oracle teaching,’ the model is trained on a teacher that already knows exactly what the user wants. In ‘Bayesian teaching,’ the teacher is often wrong in the early rounds because it is still learning. However, those ‘educated guesses’ provide a much stronger learning signal. By watching the Bayesian Assistant grapple with uncertainty and then update its beliefs after receiving feedback, the LLM learns the ‘skill’ of belief updating.
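The difference between the two teachers can be sketched as the step that generates SFT targets. In this hypothetical Python toy (two features, each reward weight either -1 or +1, a uniform prior, and a simulated user who always picks their truly best flight), the oracle teacher’s target is always the correct flight, while the Bayesian teacher’s target is its best guess under its current beliefs. With a symmetric prior, every flight looks equally good in round one, so the Bayesian teacher falls back on the first option and happens to be wrong; after seeing the user’s choice, it gets round two right. The setup and names are illustrative assumptions, not the paper’s data pipeline.

```python
import itertools
import math

# Hypothetical toy setup: 2 features (price, duration) in [0, 1], and each
# reward weight is either -1 (prefers low values) or +1 (prefers high values).
HYPS = list(itertools.product([-1.0, 1.0], repeat=2))


def util(w, flight):
    return sum(a * b for a, b in zip(w, flight))


def best(w, flights):
    """Index of the flight with the highest utility under weights w."""
    return max(range(len(flights)), key=lambda i: util(w, flights[i]))


def bayes_update(post, flights, chosen):
    """Posterior proportional to prior times softmax likelihood of the observed choice."""
    new = {}
    for h, p in post.items():
        exps = [math.exp(util(h, f)) for f in flights]
        new[h] = p * exps[chosen] / sum(exps)
    z = sum(new.values())
    return {h: p / z for h, p in new.items()}


def make_sft_targets(true_w, rounds, teacher):
    """One target recommendation per round from an 'oracle' or 'bayesian' teacher."""
    post = {h: 1.0 / len(HYPS) for h in HYPS}  # uniform prior
    targets = []
    for flights in rounds:
        if teacher == "oracle":
            # Knows the user's true reward function, so it is never wrong.
            targets.append(best(true_w, flights))
        else:
            # Educated guess: maximize expected utility under current beliefs
            # (ties go to the first option, since max keeps the first maximum).
            exp_util = [sum(p * util(h, f) for h, p in post.items()) for f in flights]
            targets.append(max(range(len(flights)), key=lambda i: exp_util[i]))
        # Both teachers then observe the simulated user's actual choice.
        post = bayes_update(post, flights, best(true_w, flights))
    return targets


true_w = (-1.0, -1.0)  # this user wants cheap, short flights
rounds = [
    [(1.0, 0.5), (0.0, 0.0)],
    [(0.5, 1.0), (0.5, 0.0)],
]
oracle_targets = make_sft_targets(true_w, rounds, "oracle")
bayes_targets = make_sft_targets(true_w, rounds, "bayesian")
print(oracle_targets, bayes_targets)  # prints [1, 1] [0, 1]
```

Fine-tuning on `bayes_targets` exposes the model to the wrong-then-corrected trajectory that the article credits for the stronger learning signal, whereas `oracle_targets` only ever show the final answer.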

The results were clear: Bayesian-tuned models (such as Gemma-2-9B or Llama-3-8B) were not only more accurate but also agreed with the Bayesian ‘gold standard’ strategy about 80% of the time, far more often than their original versions.
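The ‘about 80%’ figure is an agreement rate. Assuming it is measured per interaction round (the article does not spell out the exact metric), it is simply the fraction of rounds in which the fine-tuned model’s recommendation matches the Bayesian Assistant’s. A hypothetical helper, with made-up choices:

```python
def agreement_rate(model_choices, bayesian_choices):
    """Fraction of rounds where the model's pick matches the Bayesian Assistant's pick."""
    if len(model_choices) != len(bayesian_choices) or not model_choices:
        raise ValueError("need two non-empty sequences of equal length")
    matches = sum(m == b for m, b in zip(model_choices, bayesian_choices))
    return matches / len(model_choices)


# Illustrative five-round choices (not data from the study).
model = [0, 2, 1, 1, 0]
bayes = [0, 2, 1, 0, 0]
print(agreement_rate(model, bayes))  # prints 0.8
```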

Generalization: Beyond Flights to Online Shopping

For devs, the ‘holy grail’ is generalization. A model trained on flight data shouldn’t just be good at flights; it should grasp the concept of learning from a user.

The research team tested their fine-tuned models on:

- Increased Complexity: Moving from four flight features to eight.

- New Domains: Hotel recommendations.

- Real-World Scenarios: A web shopping task using real products (titles and descriptions) from a simulated site.

Even though the models were fine-tuned only on synthetic flight data, they successfully transferred those probabilistic reasoning skills to hotel bookings and web shopping. In fact, the Bayesian-tuned LLMs even outperformed human participants in some rounds, as humans tend to deviate from normative reasoning standards due to biases or inattention.
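Such deviations from consistent choices are often modeled with a softmax (Boltzmann) choice rule that has a temperature parameter; this is an illustrative assumption here, not necessarily the user model used in the study. As the temperature rises, a simulated user picks their highest-utility option less reliably, which mimics the kind of inconsistency attributed to human participants. The function name and utilities below are hypothetical.

```python
import math


def choice_probs(utilities, temperature):
    """Softmax choice model: a higher temperature means a noisier, less consistent user."""
    scaled = [u / temperature for u in utilities]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    z = sum(exps)
    return [e / z for e in exps]


# Hypothetical utilities of three flights for one user; index 0 is the best.
utilities = [1.0, 0.5, 0.0]
for t in (0.1, 1.0, 10.0):
    probs = choice_probs(utilities, t)
    print(f"temperature={t}: P(best flight)={probs[0]:.2f}")
```

A near-zero temperature recovers a perfectly consistent user, while a large one approaches random choice.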

The Neuro-Symbolic Bridge

This study highlights a unique strength of deep learning: the ability to distill a classical, symbolic model (the Bayesian Assistant) into a neural network (the LLM).

While symbolic models work well for simple, well-specified tasks, they are notoriously difficult to build for ‘messy’ real-world domains such as web shopping. By teaching the LLM to imitate the symbolic model’s strategy, it is possible to get the best of both worlds: the rigor of Bayesian reasoning and the flexible natural-language understanding of a transformer.

Key Takeaways

- LLMs Struggle with Belief Updating: Off-the-shelf LLMs, including modern models like Gemini-1.5 Pro and GPT-4.1 Mini, fail to update their beliefs effectively as they acquire new information, with performance often plateauing after a single interaction.

- Bayesian Teaching Outperforms Direct Training: Teaching an LLM to mimic the ‘educated guesses’ and uncertainty of a classical Bayesian model is more effective than training it directly on the correct answers (oracle teaching).

- Probabilistic Skills Generalize Across Domains: LLMs fine-tuned on simple synthetic tasks (e.g., flight recommendations) can successfully transfer their belief-updating skills to more complex, real-world scenarios such as web shopping and hotel recommendations.

- Neural Models Are More Robust to Human Noise: While the symbolic Bayesian model works well for simulated users with consistent preferences, fine-tuned LLMs show greater robustness when interacting with real people, whose choices often deviate from their own preferences due to noise or bias.

- Effective Distillation of Symbolic Strategies: The research shows that LLMs can learn to approximate complex reasoning strategies through supervised fine-tuning, allowing them to apply these strategies to domains that are too messy or complex to be explicitly encoded in a classical symbolic model.
